MongoDB creates the default _id index when it creates a collection. The _id index prevents clients from inserting two documents with the same _id value. You cannot drop the _id index.

The following syntax is used to create an index in MongoDB:

>db.collection.createIndex(<key and index type specification>, <options>);

The preceding method creates an index only if an index with the same specification does not exist. MongoDB indexes use the B-tree data structure.

The following are the different types of indexes:

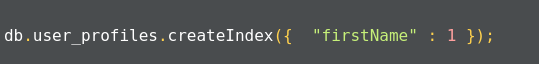

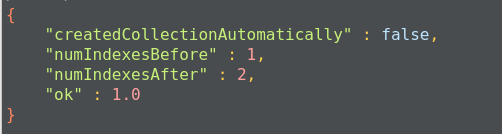

- Single field: In addition to the _id index, MongoDB allows the creation of an index on any single field in ascending or descending order. For a single-field index, the sort order does not matter, because MongoDB can traverse the index in either direction. For example, passing {firstName: 1} to the createIndex() method of the user_profiles collection creates an index on the firstName field.

The shell returns an acknowledgment once the index has been created.

The value 1 creates an ascending index on the firstName field. To create a descending index, provide -1 instead of 1.
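As a minimal sketch of the single-field case, the call can be wrapped in a small function. The function name createFirstNameIndex is invented for illustration, and db is assumed to be the database handle that the mongo shell provides.

```javascript
// Sketch: create a single-field index on user_profiles.firstName.
// `db` is assumed to be the database handle provided by the mongo shell.
// direction: 1 for an ascending index, -1 for a descending index.
function createFirstNameIndex(db, direction = 1) {
  if (direction !== 1 && direction !== -1) {
    throw new RangeError("direction must be 1 or -1");
  }
  return db.user_profiles.createIndex({ firstName: direction });
}
```

In a shell session, createFirstNameIndex(db) would request the ascending index and createFirstNameIndex(db, -1) the descending one.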

- Compound index: MongoDB also supports user-defined indexes on multiple fields. The order in which the fields are listed when creating the index is significant. For example, a compound index defined as {firstName:1, age:-1} sorts entries by firstName in ascending order first and then, within each firstName value, by age in descending order.

- Multikey index: MongoDB uses multikey indexes to index the content of arrays. If you index a field that holds an array value, MongoDB creates a separate index entry for each element of the array. These indexes allow queries to select documents by matching an element or a set of elements of the array. MongoDB decides automatically whether an index needs to be multikey; you do not have to specify it.

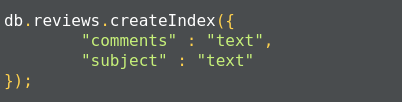
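The ordering of a compound index and the per-element entries of a multikey index can be modelled in plain JavaScript. The sketch below is only an illustration under simplifying assumptions: it runs without a MongoDB server, the sample documents and the hobbies field are invented, and a real index is a B-tree rather than a sorted array.

```javascript
// Plain-JavaScript model of index ordering; it does not talk to MongoDB.

// Order documents the way a {firstName: 1, age: -1} index orders its keys:
// firstName ascending, then age descending within each firstName.
function compoundOrder(docs) {
  return [...docs].sort((a, b) => {
    if (a.firstName !== b.firstName) return a.firstName < b.firstName ? -1 : 1;
    return b.age - a.age;
  });
}

// A multikey index holds one entry per array element, so a document whose
// indexed field is an array contributes several [key, _id] entries.
function multikeyEntries(docs, field) {
  const entries = [];
  for (const doc of docs) {
    const value = doc[field];
    const keys = Array.isArray(value) ? value : [value];
    for (const key of keys) entries.push([key, doc._id]);
  }
  // Sort by key, then by _id so that the result is deterministic.
  return entries.sort((a, b) =>
    a[0] < b[0] ? -1 : a[0] > b[0] ? 1 : a[1] - b[1]
  );
}

// Invented sample data.
const people = [
  { _id: 1, firstName: "Bob", age: 30, hobbies: ["golf", "chess"] },
  { _id: 2, firstName: "Ann", age: 25, hobbies: ["golf"] },
  { _id: 3, firstName: "Ann", age: 41, hobbies: ["tennis"] },
];

console.log(compoundOrder(people).map((p) => `${p.firstName}:${p.age}`));
console.log(multikeyEntries(people, "hobbies"));
```

The first call lists both Ann documents before Bob, with the older Ann first; the second shows that document 1 appears twice, once for each element of its hobbies array.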

- Text indexes: MongoDB provides text indexes to support searching the string content of a collection. To create a text index, we use the same db.collection.createIndex() method, but pass the string literal "text" as the index type instead of 1 or -1.

You can also create text indexes on multiple fields, for example:

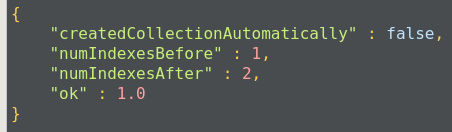

Once the index is created, the shell returns an acknowledgment.

A compound index can combine a text index key with ascending or descending keys on other fields.
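The text index variants described above might be sketched as follows. The articles collection and the title, body, and publishedAt fields are hypothetical names chosen for illustration, as are the helper functions themselves; only createIndex() and the "text" index type come from MongoDB.

```javascript
// Build a key specification in which every listed field is text-indexed.
// Optional `prefix` keys (for example {publishedAt: -1}) come first,
// which gives a compound index that includes text keys.
function textIndexSpec(fields, prefix) {
  const spec = Object.assign({}, prefix || {});
  for (const field of fields) {
    spec[field] = "text"; // the string literal "text" selects a text index
  }
  return spec;
}

// `db` is assumed to be the mongo shell database handle;
// `articles` is a hypothetical collection.
function createTextIndex(db, fields, prefix) {
  return db.articles.createIndex(textIndexSpec(fields, prefix));
}
```

For instance, createTextIndex(db, ["title", "body"]) would request one text index spanning two fields. A collection can have only one text index, so the single-field and multi-field variants are alternatives rather than indexes to create side by side.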

- Hashed index: To support hash-based sharding, MongoDB supports hashed indexes. A hashed index stores the hash of the indexed field's value, and a query looks up the hash rather than the value itself. Hashed indexes support equality matches only; they cannot serve range-based queries.
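A hashed index is requested in the same way as a text index, with the string literal "hashed" as the index type. The following is a minimal sketch; the helper name and the userId field in the usage note are assumptions made for illustration.

```javascript
// Sketch: create a hashed index on one field of user_profiles.
// `db` is assumed to be the mongo shell database handle.
function createHashedIndex(db, field) {
  const keys = {};
  keys[field] = "hashed"; // the string literal "hashed" selects a hashed index
  return db.user_profiles.createIndex(keys);
}
```

Calling createHashedIndex(db, "userId") would index the hash of a hypothetical userId field. An equality query such as {userId: 42} can use this index, whereas a range query such as {userId: {$gt: 42}} cannot.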

Indexes have the following properties:

- Unique indexes: A unique index enforces uniqueness of the indexed field. MongoDB rejects any insert or update that would store a value that already exists in the index.

- Partial indexes: A partial index covers only the documents of a collection that match a specified filter condition. Because it indexes a subset of the documents in the collection, a partial index needs less storage and costs less to create and maintain.

- Sparse index: A sparse index includes only those documents in which the indexed field is present; all other documents are left out of the index. We can combine a unique index with a sparse index to reject documents that have duplicate values while ignoring documents that do not have the indexed key.

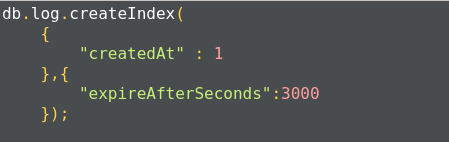
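The unique, partial, and sparse properties are all passed in the options argument of createIndex(). The sketch below assumes hypothetical email, age, and nickname fields on the user_profiles collection; the option names unique, partialFilterExpression, and sparse are the ones MongoDB defines.

```javascript
// Index definitions illustrating the three properties.
// The email, age, and nickname fields are hypothetical.
const propertyIndexes = [
  // Unique: no two documents may share the same email value.
  { keys: { email: 1 }, options: { unique: true } },
  // Partial: index firstName only for documents where age >= 18.
  {
    keys: { firstName: 1 },
    options: { partialFilterExpression: { age: { $gte: 18 } } },
  },
  // Unique and sparse: nickname must be unique where present;
  // documents without a nickname are left out of the index.
  { keys: { nickname: 1 }, options: { unique: true, sparse: true } },
];

// `db` is assumed to be the mongo shell database handle.
function createPropertyIndexes(db) {
  return propertyIndexes.map(function (def) {
    return db.user_profiles.createIndex(def.keys, def.options);
  });
}
```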

- TTL index: A TTL index is a special type of index that makes MongoDB automatically remove documents from the collection after a certain amount of time. Such indexes are ideal for removing machine-generated data, logs, and session information that we need only for a finite duration. The following TTL index will automatically delete data from the log collection after 3000 seconds:

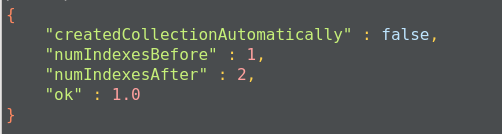

Once the index is created, the shell returns an acknowledgment message.
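A TTL index of this kind might be created as in the sketch below. It assumes that the collection is named log and that each document stores its creation time in a Date field called createdAt; both names are assumptions, while expireAfterSeconds is the option MongoDB uses for TTL indexes.

```javascript
// Sketch: expire documents 3000 seconds after the time stored in createdAt.
// `db` is assumed to be the mongo shell database handle; the `log`
// collection name and the `createdAt` field are assumptions.
function createLogTtlIndex(db, seconds = 3000) {
  return db.log.createIndex(
    { createdAt: 1 },
    { expireAfterSeconds: seconds }
  );
}

// Earliest moment at which a document becomes eligible for removal.
function expiresAt(createdAt, seconds = 3000) {
  return new Date(createdAt.getTime() + seconds * 1000);
}
```

Removal is carried out by a background task that runs periodically, so a document can remain in the collection for a short while after the time that expiresAt() computes.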

The limitations of indexes:

- A single collection can have at most 64 indexes.

- The fully qualified index name is <database-name>.<collection-name>.$<index-name> and cannot have more than 128 characters. By default, the index name is built from the indexed field names and their sort direction or index type, for example firstName_1. You can specify an index name when calling the createIndex() method to ensure that the fully qualified name does not exceed the limit.

- There can be no more than 31 fields in the compound index.

- The query cannot use both text and geospatial indexes. You cannot combine the $text operator, which requires text indexes, with some other query operator required for special indexes. For example, you cannot combine the $text operator with the $near operator.

- Fields with 2dsphere indexes can hold only geometry data. 2dsphere indexes are provided specifically for geometric data operations. For example, to perform operations on coordinates, we have to provide the data as GeoJSON objects or as coordinate pairs, [x, y]. If the indexed field holds non-geometry data, the operation will fail.
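The index name limit can be checked before an index is created. The following sketch builds the fully qualified name in the form given above and passes a custom name through the name option of createIndex(). The helper names are invented, and the limit of 128 is the figure quoted in this section.

```javascript
// Limit on the fully qualified index name, as quoted in this section.
const MAX_QUALIFIED_NAME_LENGTH = 128;

// <database-name>.<collection-name>.$<index-name>
function qualifiedIndexName(dbName, collName, indexName) {
  return dbName + "." + collName + ".$" + indexName;
}

// `db` is assumed to be the mongo shell database handle.
function createNamedIndex(db, collName, keys, indexName) {
  const qualified = qualifiedIndexName(db.getName(), collName, indexName);
  if (qualified.length > MAX_QUALIFIED_NAME_LENGTH) {
    throw new RangeError("index name too long: " + qualified.length);
  }
  return db.getCollection(collName).createIndex(keys, { name: indexName });
}
```

A short custom name, such as a hypothetical "name_age" for {firstName: 1, age: -1}, keeps the qualified name well below the limit even when the default name would be long.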

The limitation on data:

- The maximum number of documents in a capped collection must be less than 2^32. We should define it with the max parameter when creating the collection. If max is not specified, the capped collection can hold any number of documents, which will slow down queries.

- The MMAPv1 storage engine allows at most 16,000 data files per database, which gives a maximum database size of 32 TB. We can set the storage.mmapv1.smallFiles parameter to reduce the maximum size of the database to 8 TB.

- Replica sets can have up to 50 members.

- Shard keys cannot exceed 512 bytes.
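The two MMAPv1 size figures follow from the file count multiplied by the largest size a single data file can reach. The per-file sizes used below are not stated in the text above; they are taken from the MMAPv1 documentation (2 GB normally, 512 MB with smallFiles enabled), and the result is rounded by treating 1 TB as 1000 GB.

```javascript
// Maximum number of data files per database under MMAPv1.
const MAX_DATA_FILES = 16000;

// Maximum database size in TB for a given maximum data file size in GB.
// Assumes 1 TB = 1000 GB, which matches the rounded figures in the text.
function maxDatabaseSizeTB(maxFileSizeGB) {
  return (MAX_DATA_FILES * maxFileSizeGB) / 1000;
}

console.log(maxDatabaseSizeTB(2));   // default data files of up to 2 GB
console.log(maxDatabaseSizeTB(0.5)); // smallFiles: data files of up to 512 MB
```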