From the first edition of Unity in Action by Joseph Hocking

“The text is clear and concise, and the examples are outstanding. As a new user, I found this book to be an invaluable resource.”

—Dan Kacenjar Sr., Cornerstone Software

“All the roadblocks evaporated, and I took my game from concept to build in short order.”

—Philip Taffet, SOHOsoft LLC

“Gets you up and running in no time.”

—Sergio Arbeo, codecantor

“Covers all the key elements for using Unity effectively.”

—Shiloh Morris, Southern Nevada Water Authority

“Useful and to the point! You will learn the basics and more to start developing your own games.”

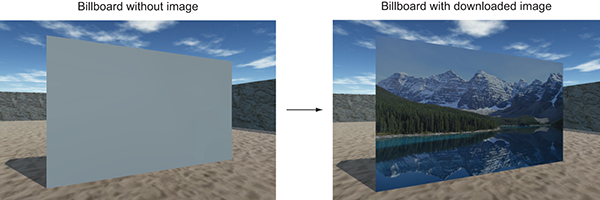

—Victor M. Perez, Software developer

“Recommended for anyone starting out with Unity.”

—Alex Lucas, Independent Contractor

“Teaches with good clean code and gives ideas on how to alter it for more interesting results.”

—Amazon reader

Second Edition

MANNING

Shelter Island

For online information and ordering of this and other Manning books, please visit www.manning.com. The publisher offers discounts on this book when ordered in quantity.

For more information, please contact

Special Sales Department

Manning Publications Co.

20 Baldwin Road

PO Box 761

Shelter Island, NY 11964

Email: orders@manning.com

©2018 by Manning Publications Co. All rights reserved.

No part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by means electronic, mechanical, photocopying, or otherwise, without prior written permission of the publisher.

Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in the book, and Manning Publications was aware of a trademark claim, the designations have been printed in initial caps or all caps.

Recognizing the importance of preserving what has been written, it is Manning’s policy to have the books we publish printed on acid-free paper, and we exert our best efforts to that end. Recognizing also our responsibility to conserve the resources of our planet, Manning books are printed on paper that is at least 15 percent recycled and processed without the use of elemental chlorine.

Development editor: Candace West

Technical development editor: René van den Berg

Review editor: Ivan Martinovic

Project editor: David Novak

Copy editor: Safis Editing

Proofreader: Elizabeth Martin

Technical proofreader: Shiloh Morris

Typesetter: Happenstance Type-O-Rama

Cover designer: Marija Tudor

ISBN 9781617294969

Printed in the United States of America

I started programming games in 1982. It wasn’t easy. We had no internet. Resources were limited to a handful of mostly terrible books and magazines that offered fascinating but confusing code fragments, and as for game engines—well, there weren’t any! Coding games was a massive uphill battle.

How I envy you, reader, holding the power of this book in your hands. The Unity engine has done so much to open game programming up to so many people. Unity has managed to strike an excellent balance by being a powerful, professional game engine that’s still affordable and approachable for someone just getting started.

Approachable, that is, with the right guidance. I once spent time in a circus troupe run by a magician. He was kind enough to take me in and helped guide me toward becoming a good performer. “When you stand on a stage,” he pronounced, “you make a promise. And that promise is ‘I will not waste your time.’”

What I love most about Unity in Action is the “action” part. Joe Hocking wastes none of your time and gets you coding fast—and not just nonsense code, but interesting code that you can understand and build from, because he knows you don’t just want to read his book, and you don’t just want to program his examples—you want to be coding your own game.

And with his guidance, you’ll be able to do that sooner than you might expect. Follow Joe’s steps, but when you feel ready, don’t be shy about diverging from his path and breaking out on your own. Skip to what interests you most—try experiments, be bold and brave! You can always return to the text if you get too lost.

But let’s not dally in this foreword—the entire future of game development is impatiently waiting for you to begin! Mark this day on your calendar, for today is the day that everything changed. It will be forever remembered as the day you started making games.

From the First Edition

Jesse Schell

CEO of Schell Games

Author of The Art of Game Design

https://www.amazon.com/Art-Game-Design-Lenses-Second/dp/1466598646/

I’ve been programming games for quite some time, but only started using Unity relatively recently. Unity didn’t exist when I first started developing games; the first version was released in 2005. Right from the start, it had a lot of promise as a game development tool, but it didn’t come into its own until several versions later. In particular, platforms like iOS and Android (collectively referred to as “mobile”) didn’t emerge until later, and those platforms factor heavily into Unity’s growing prominence.

Initially, I viewed Unity as a curiosity, an interesting development tool to keep an eye on but not actually use. During that time, I was programming games for both desktop computers and websites and doing projects for a range of clients. I was using tools like Blitz3D and Flash, which were great to program in but were limiting in a lot of ways. As those tools started to show their age, I kept looking for better ways to develop games.

I started experimenting with Unity around version 3, and then completely switched to it for my development work at Synapse Games. At first, I worked for Synapse on web games, but we eventually moved over to mobile games. And then we came full circle because Unity enabled us to deploy to the web in addition to mobile, all from one codebase!

I’ve always seen sharing knowledge as important, and I’ve taught game development for several years. A large part of why I do this is the example set for me by the many mentors and teachers I’ve had. (Incidentally, you may even have heard of one of my teachers because he was such an inspiring person: Randy Pausch delivered The Last Lecture shortly before he passed away in 2008.) I’ve taught classes at several schools, and I’ve always wanted to write a book about game development.

In many ways, what I’ve written here is the book I wish had existed back when I was first learning Unity. Among Unity’s many virtues is a huge treasure trove of learning resources, but those resources tend to take the form of unfocused fragments (like the script reference or isolated tutorials) and require much digging to find what you need. Ideally, I’d have a book that wrapped up everything I needed to know in one place and presented it in a clear and logical manner, so now I’m writing such a book for you. I’m targeting people who already know how to program but who are newcomers to Unity, and possibly new to game development in general. The choice of projects reflects my experience of gaining skills and confidence by doing a variety of freelance projects in rapid succession.

In learning to develop games using Unity, you’re setting out on an exciting adventure. For me, learning how to develop games meant putting up with a lot of hassle. You, on the other hand, have the advantage of a single coherent resource to learn from: this book!

I would like to thank Manning Publications for giving me the opportunity to write this book. The editors I worked with, including Robin de Jongh and especially Dan Maharry, helped me throughout this undertaking, and the book is much stronger for their feedback. Candace West took over as primary editor for the second edition. My sincere thanks also to the many others who worked with me during the development and production of the book.

My writing benefited from the scrutiny of reviewers every step of the way. Thanks to Alex Lucas, Craig Hoffman, Dan Kacenjar, Joshua Frederick, Luca Campobasso, Mark Elston, Philip Taffet, René van den Berg, Sergio Arbeo Rodríguez, Shiloh Morris, Victor M. Perez, Christopher Haupt, Claudio Caseiro, David Torribia Iñigo, Dean Tsaltas, Eric Williams, Nickie Buckner, Robin Dewson, Sergey Evsikov, and Tanya Wilke. Special thanks for the notable review work by technical development editor Scott Chaussee and by technical proofreader Christopher Haupt, with René van den Berg and Shiloh Morris stepping into those roles for the second edition. And I also want to thank Jesse Schell for writing the foreword to my book.

Next, I’d like to recognize the people who’ve made my experience with Unity a fruitful one. That, of course, starts with Unity Technologies, the company that makes Unity (the game engine). I am indebted to the community at gamedev.stackexchange.com; I visit that Q&A site almost daily to learn from others and to answer questions. And the biggest push for me to use Unity came from Alex Reeve, my boss at Synapse Games. Similarly, I’ve picked up tricks and techniques from my coworkers, and they all show up in the code I write.

Finally, I want to thank my wife, Virginia, for her support during the time I was writing the book. Until I started working on it, I never really understood how much a book project takes over your life and affects everyone around you. Thank you so much for your love and encouragement.

Unity in Action, Second Edition is a book about programming games in Unity. Think of it as an intro to Unity for experienced programmers. The goal of this book is straightforward: to take people who have some programming experience but no experience with Unity and teach them how to develop a game using Unity.

The best way of teaching development is through example projects, with students learning by doing, and that’s the approach this book takes. I’ll present topics as steps toward building sample games, and you’ll be encouraged to build these games in Unity while exploring the book. We’ll go through a selection of different projects every few chapters, rather than one monolithic project developed over the entire book. (Sometimes other books take the “one monolithic project” approach, but that can make it hard to jump into the middle if the early chapters aren’t relevant to you.)

This book will have more rigorous programming content than most Unity books (especially beginners’ books). Unity is often portrayed as a list of features with no programming required, which is a misleading view that won’t teach people what they need to know in order to produce commercial titles. If you don’t already know how to program a computer, I suggest going to a resource like Codecademy first (the computer programming lessons at Khan Academy work well, too) and then coming back to this book after learning how to program.

Don’t worry about the exact programming language; C# is used throughout this book, but skills from other languages will transfer quite well. Although the first part of the book will take its time introducing new concepts and will carefully and deliberately step you through developing your first game in Unity, the remaining chapters will move a lot faster in order to take readers through projects in multiple game genres. The book will end with a chapter describing deployment to various platforms like the web and mobile, but the main thrust of the book won’t make any reference to the ultimate deployment target because Unity is wonderfully platform-agnostic.

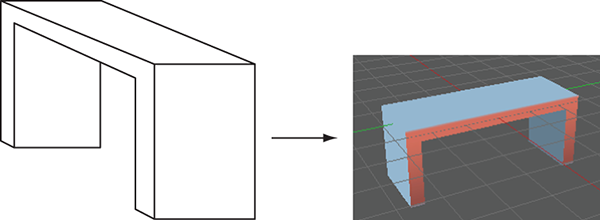

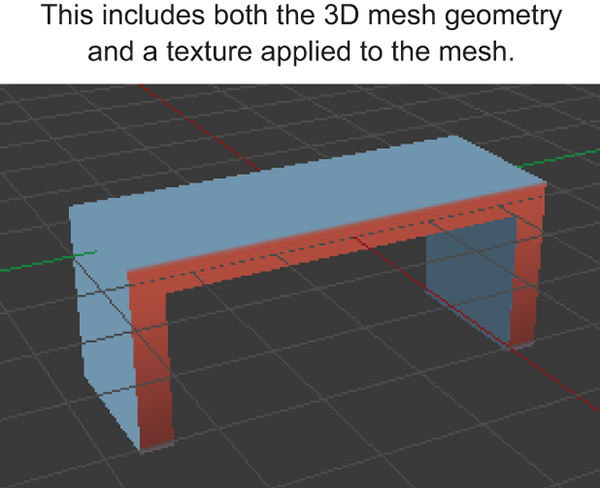

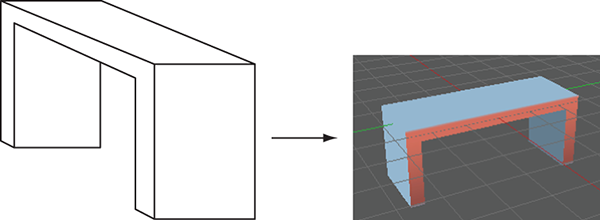

As for other aspects of game development, extensive coverage of art disciplines would water down how much the book can cover and would be largely about software external to Unity (for example, the animation software used). Discussion of art tasks will be limited to aspects specific to Unity or that all game developers should know. (Note, though, that there is an appendix about modeling custom objects.)

Chapter 1 introduces you to Unity, the cross-platform game development environment. You’ll learn about the fundamental component system underlying everything in Unity, as well as how to write and execute basic scripts.

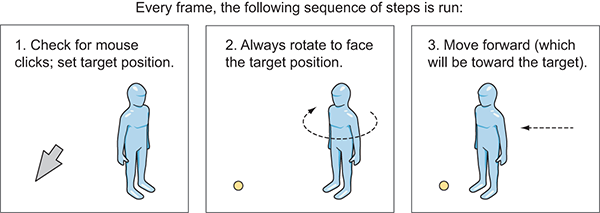

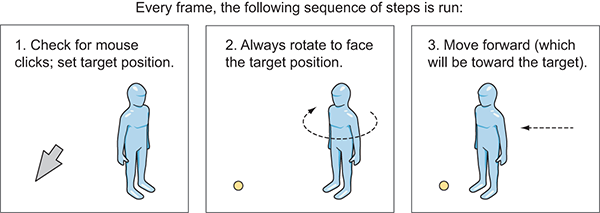

Chapter 2 progresses to writing a demo of movement in 3D, covering topics like mouse and keyboard input. Defining and manipulating both 3D positions and rotations are thoroughly explained.

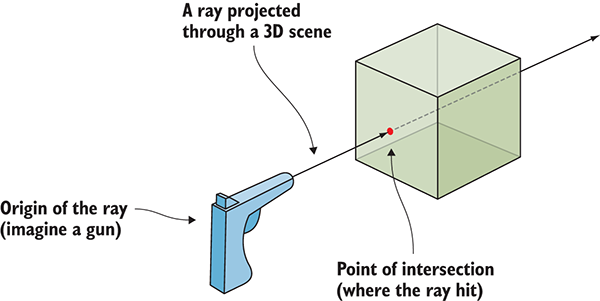

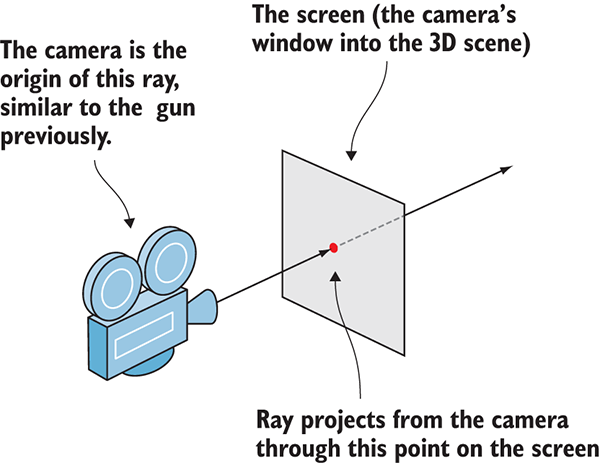

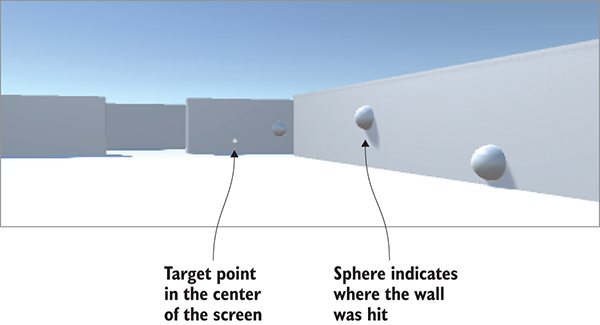

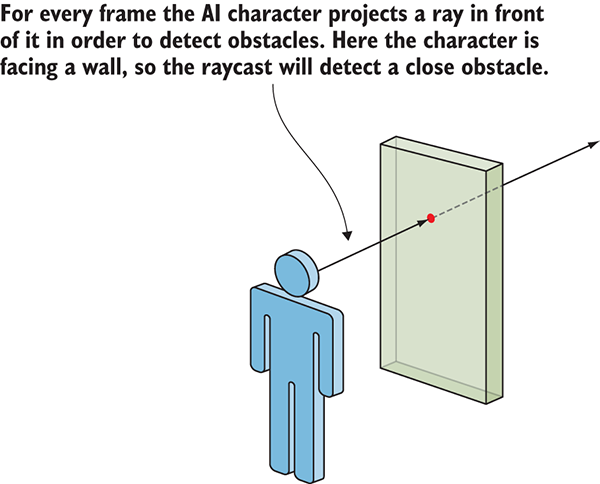

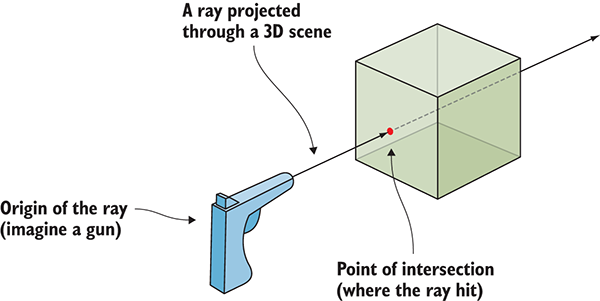

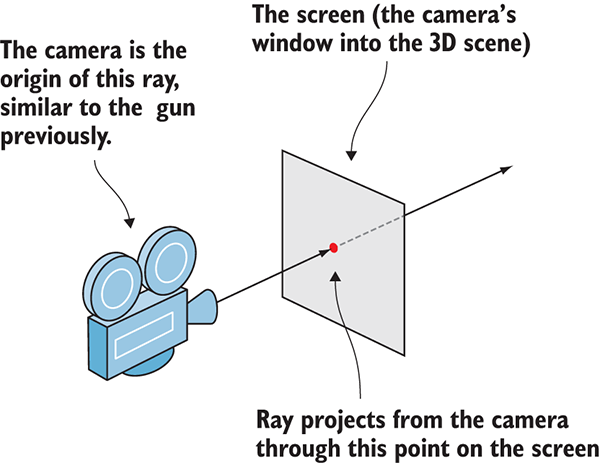

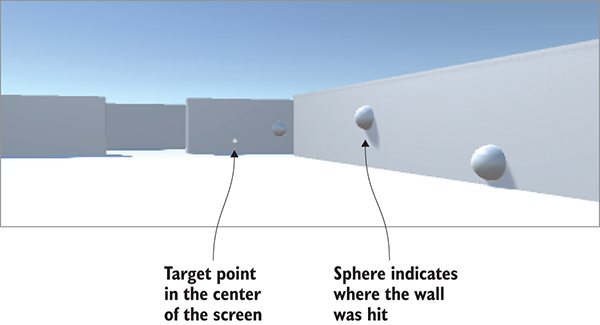

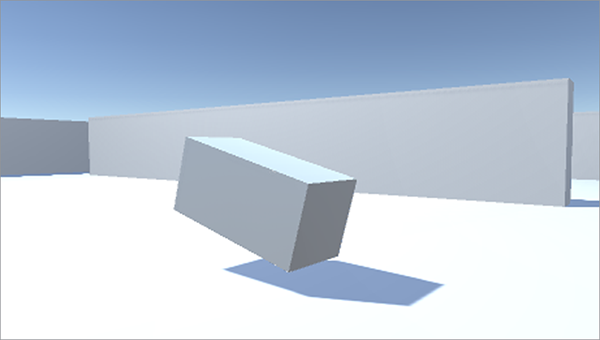

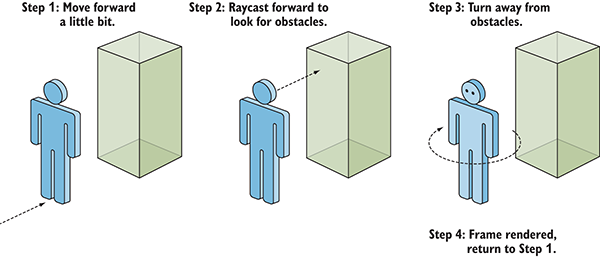

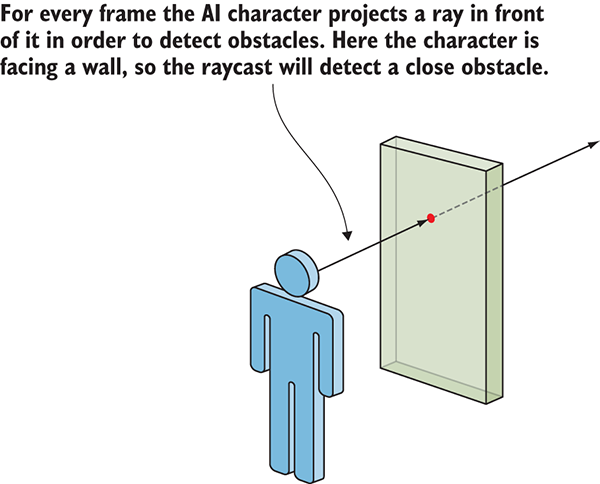

Chapter 3 turns the movement demo into a first-person shooter, teaching you raycasting and basic AI. Raycasting (shooting a line into the scene and seeing what it intersects) is a useful operation for all sorts of games.

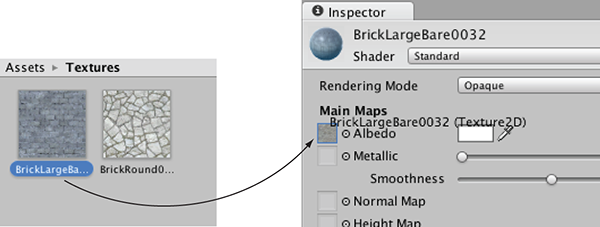

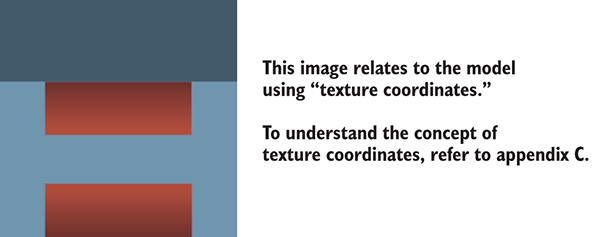

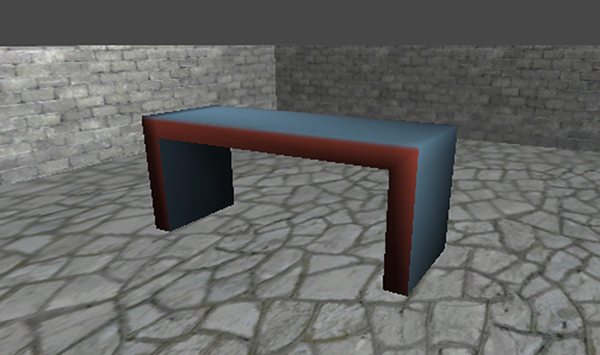

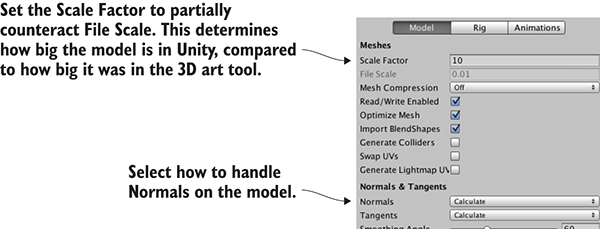

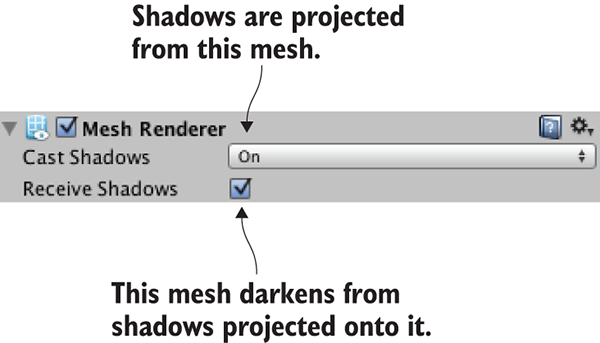

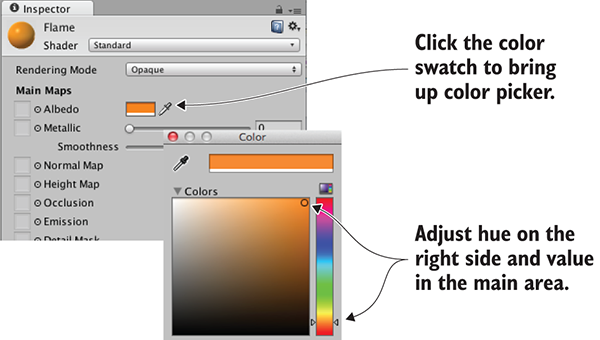

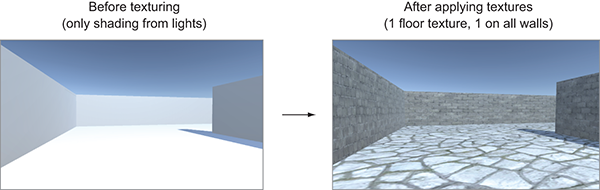

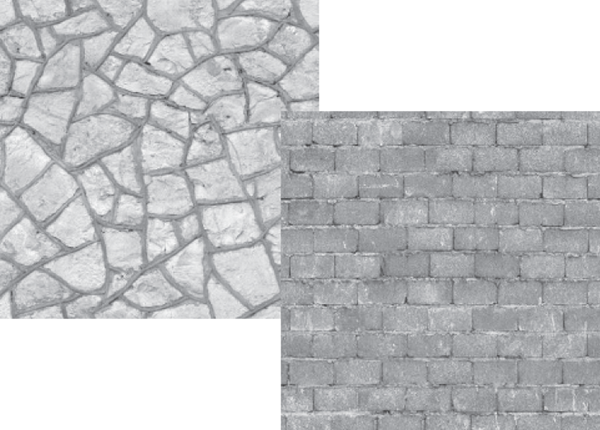

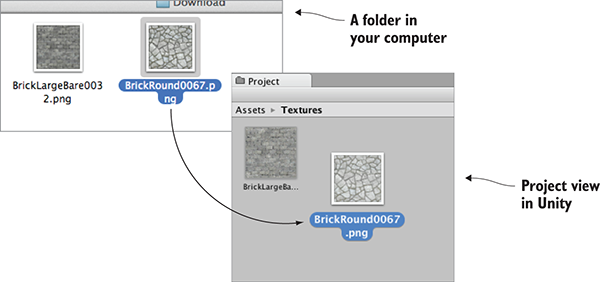

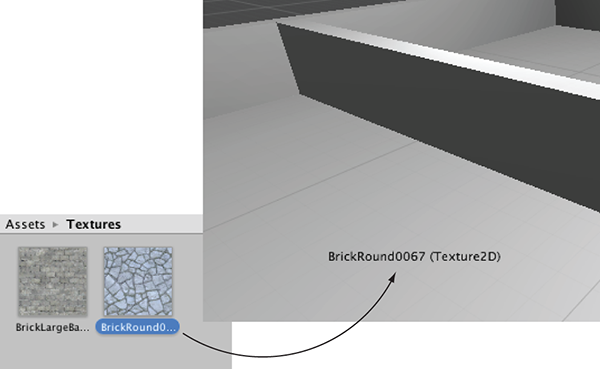

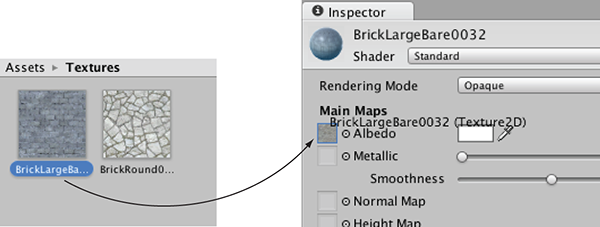

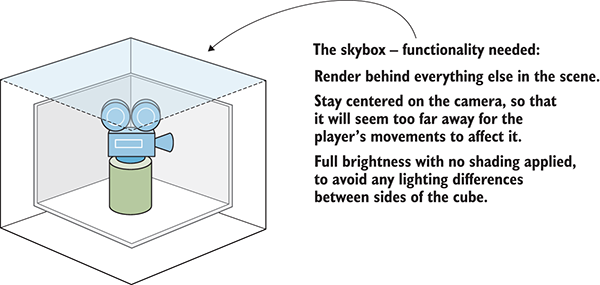

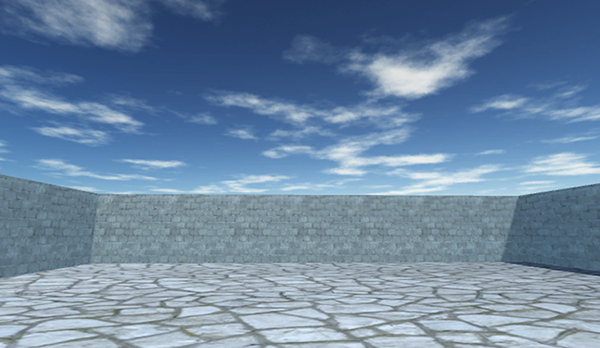

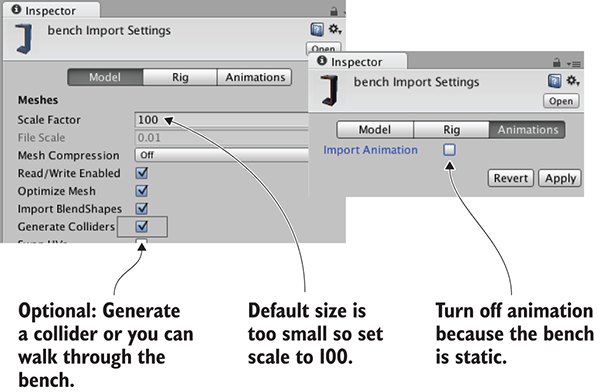

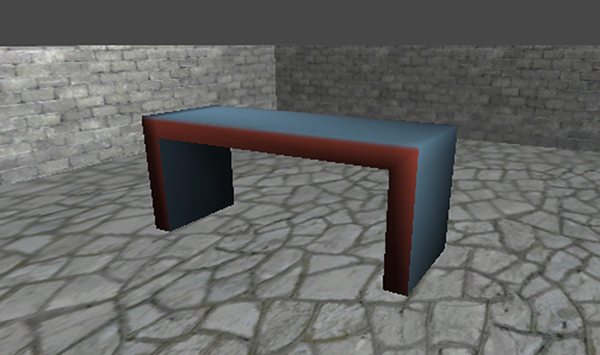

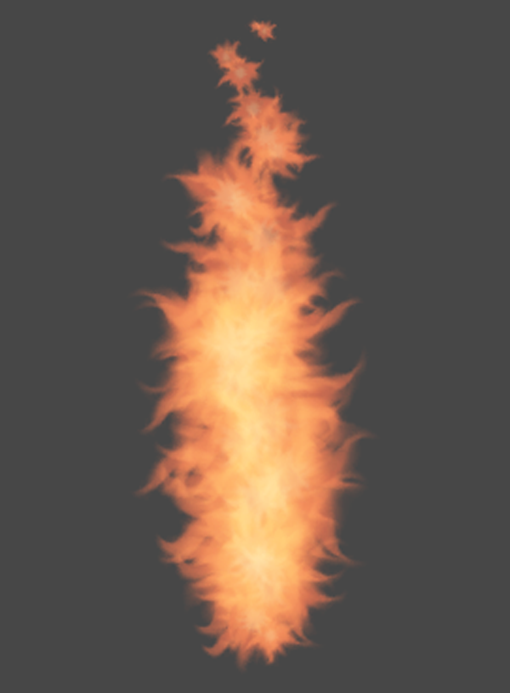

Chapter 4 covers art asset importing and creation. This is the one chapter of the book that does not focus on code, because every project needs (basic) models and textures.

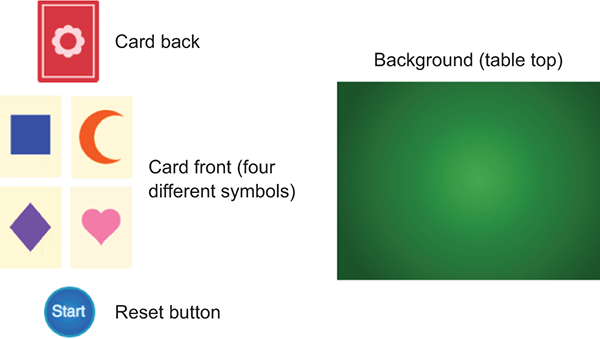

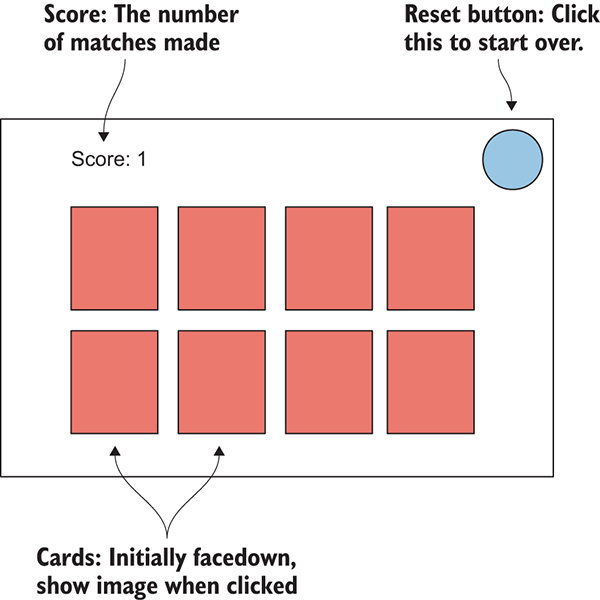

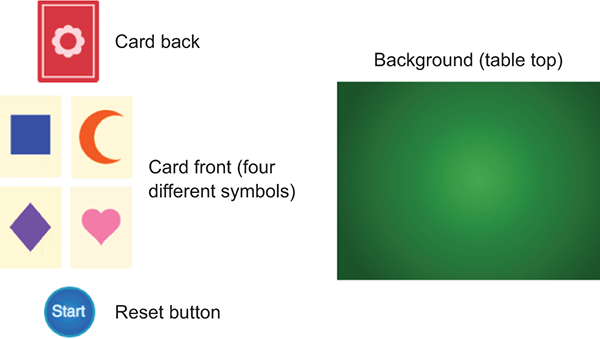

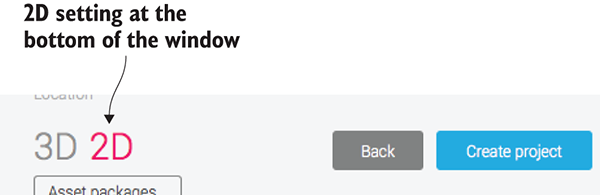

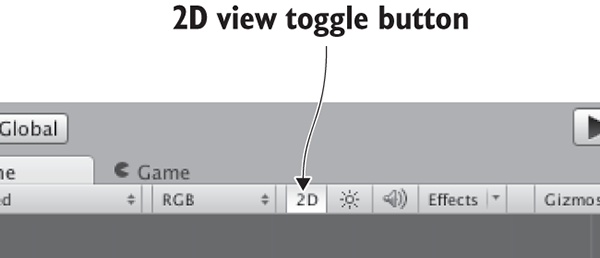

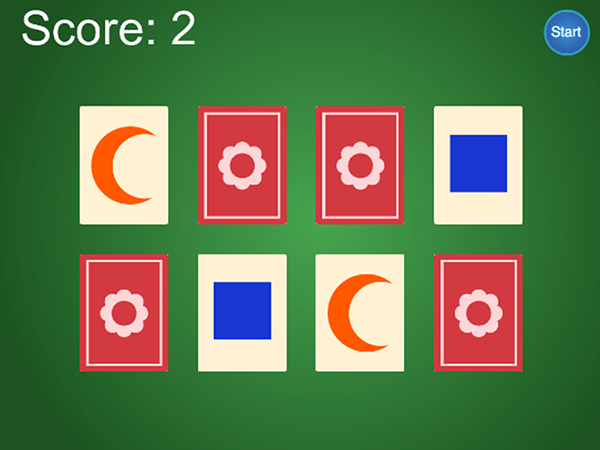

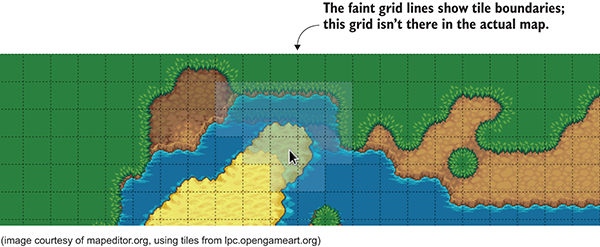

Chapter 5 teaches you how to create a 2D puzzle game in Unity. Although Unity started exclusively for 3D graphics, there’s now excellent support for 2D graphics.

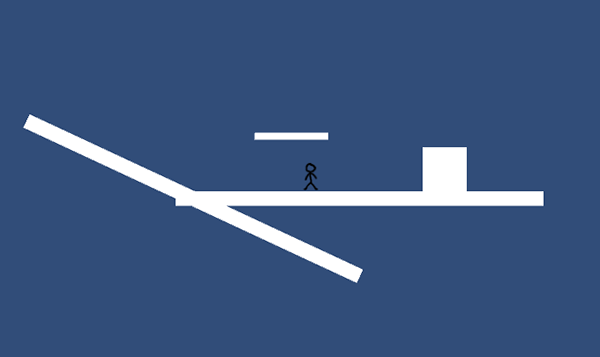

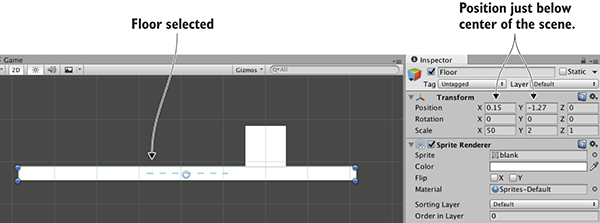

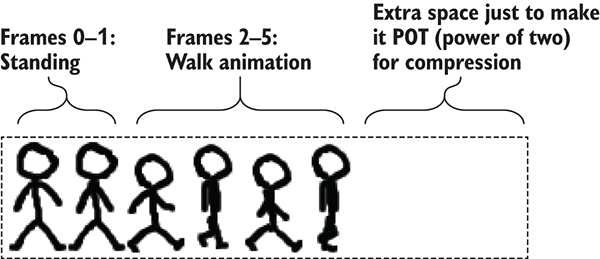

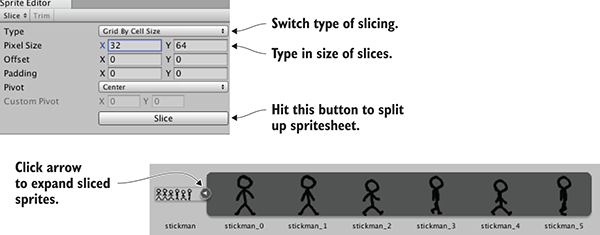

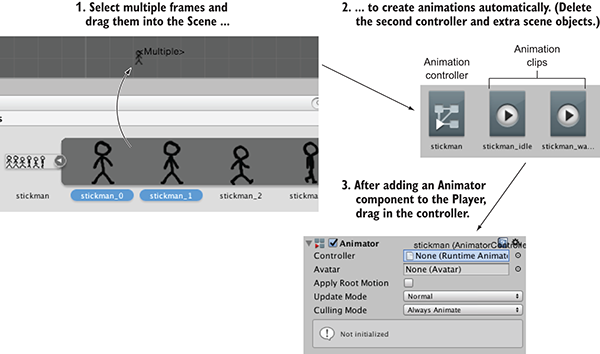

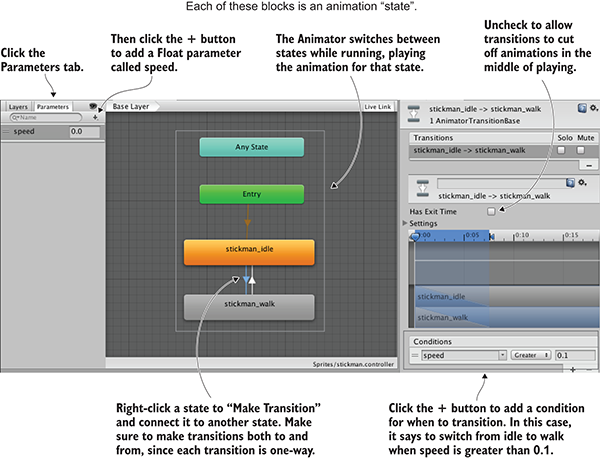

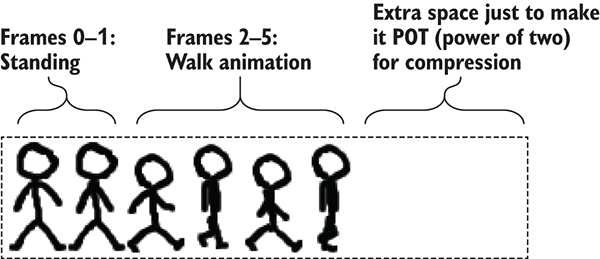

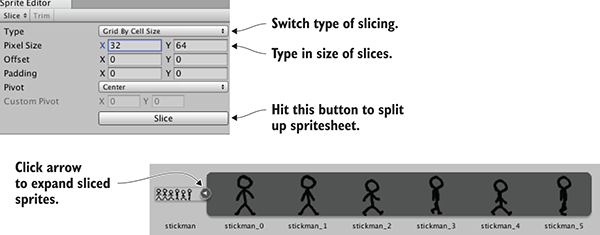

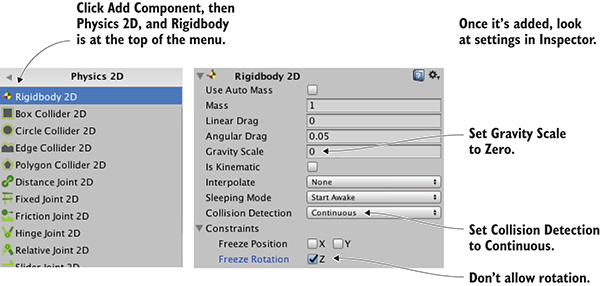

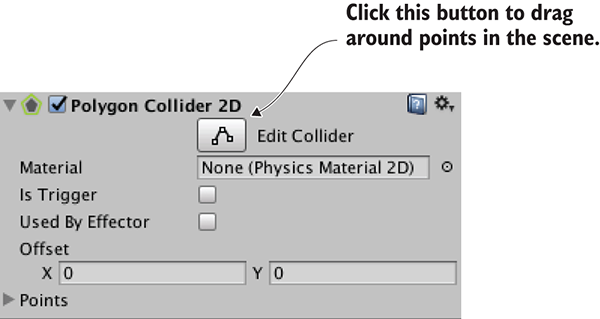

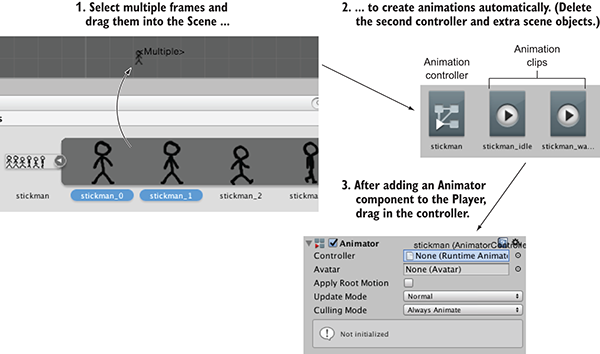

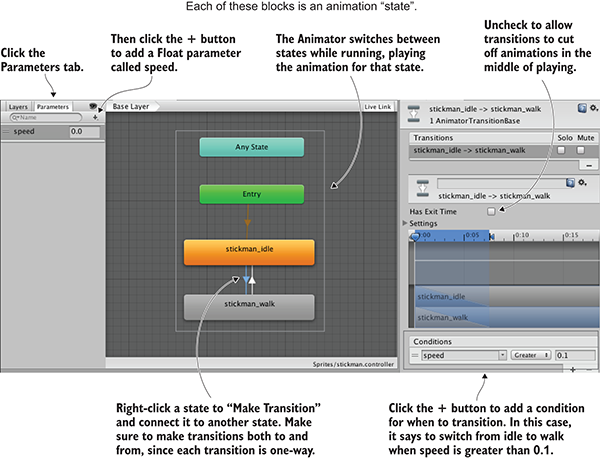

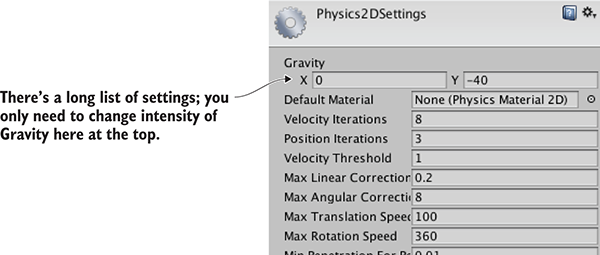

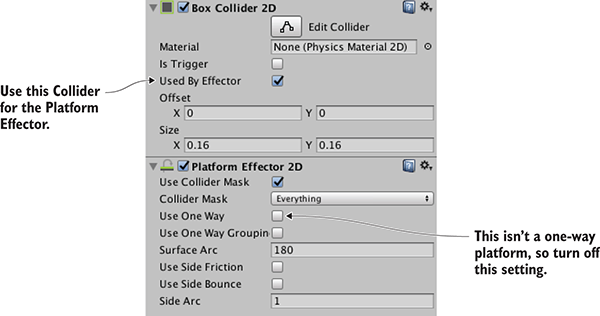

Chapter 6 expands the 2D game explanations with platform game mechanics. In particular, we’ll implement controls, physics, and animation for the player.

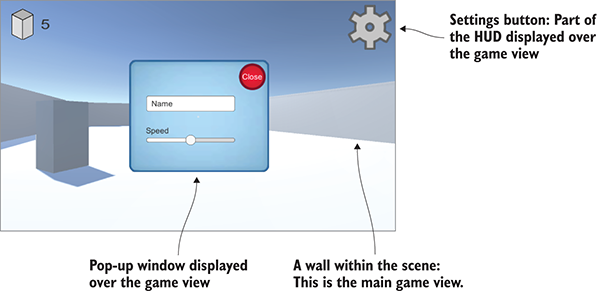

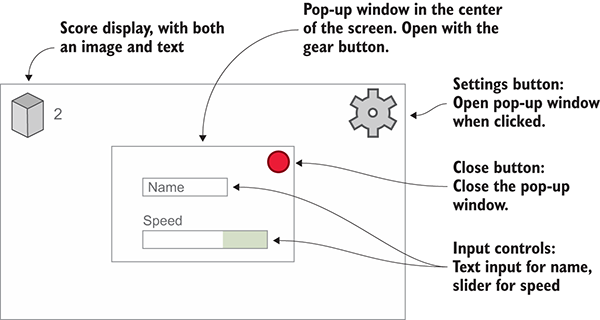

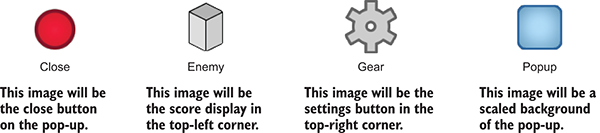

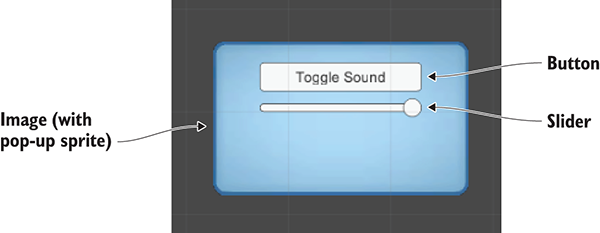

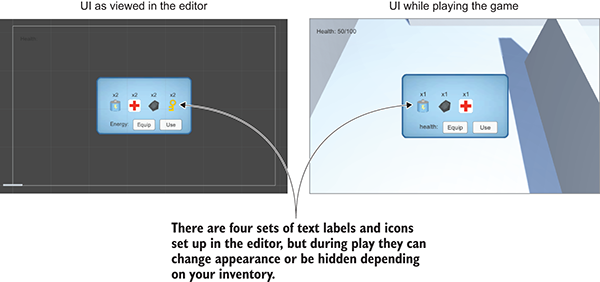

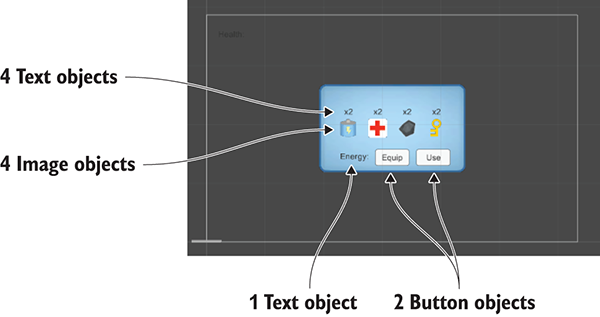

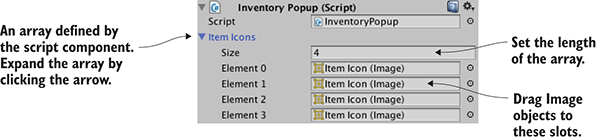

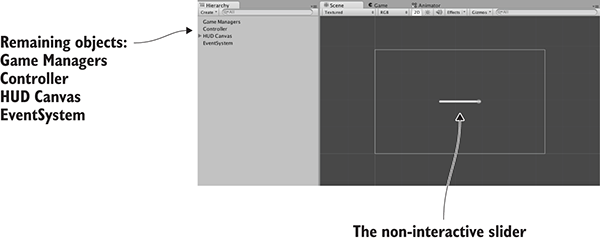

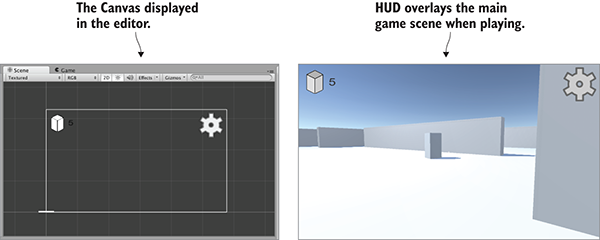

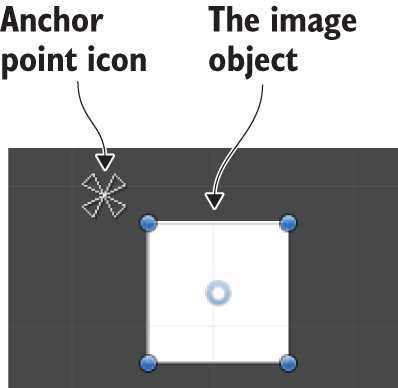

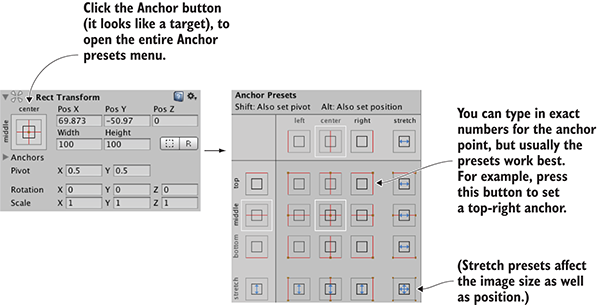

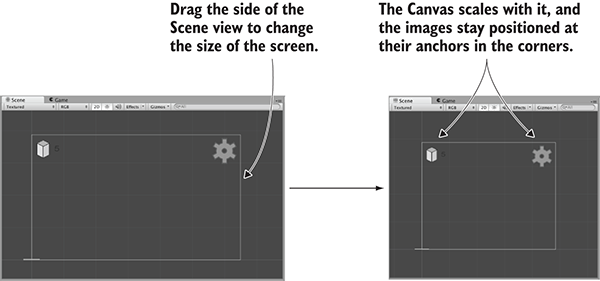

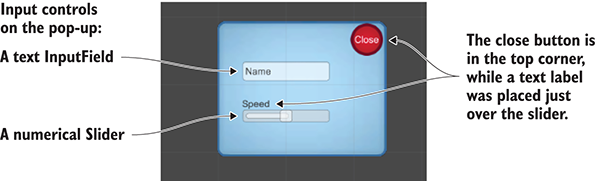

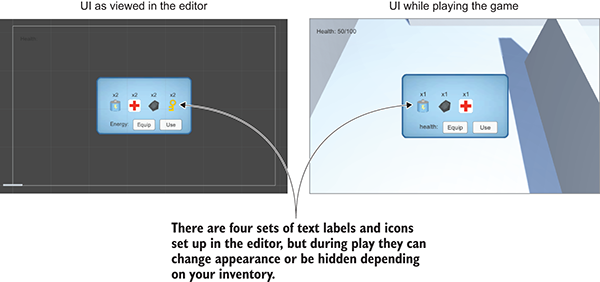

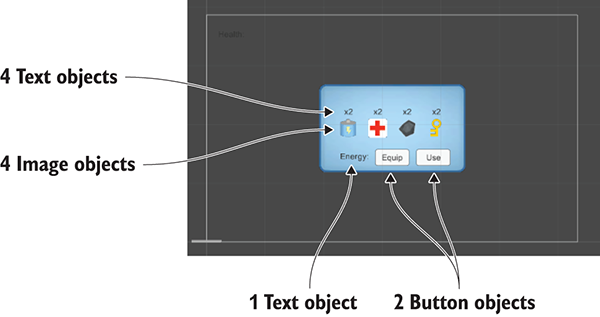

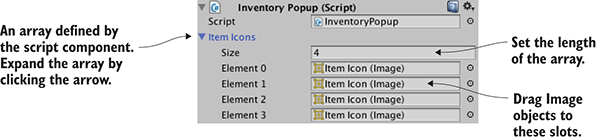

Chapter 7 introduces you to the latest GUI functionality in Unity. Every game needs a UI, and the latest versions of Unity feature an improved system for creating UIs.

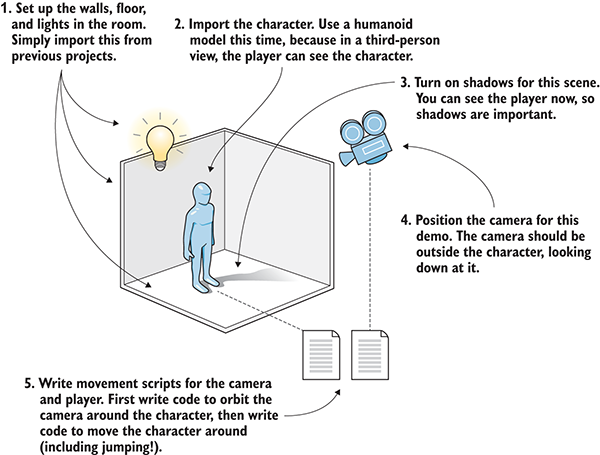

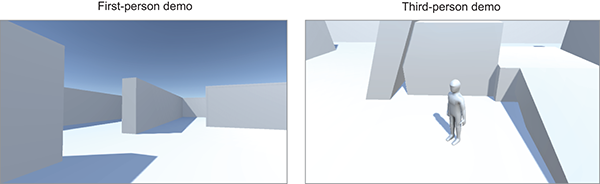

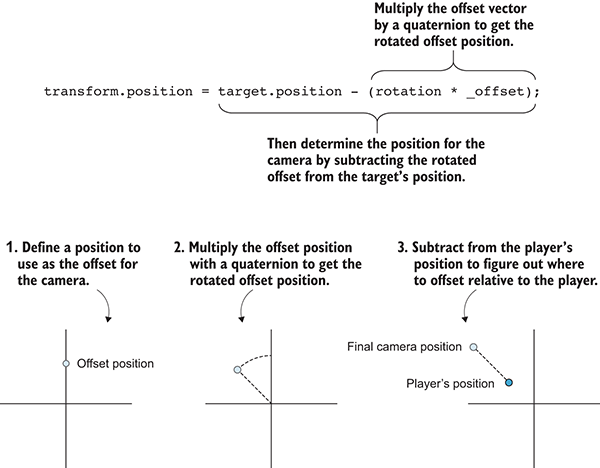

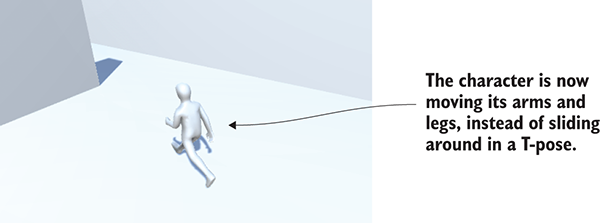

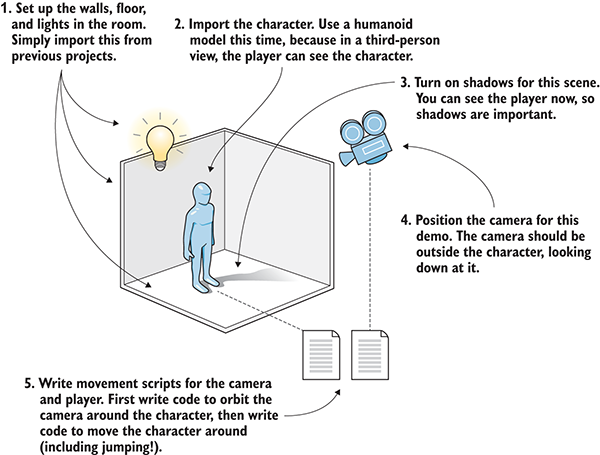

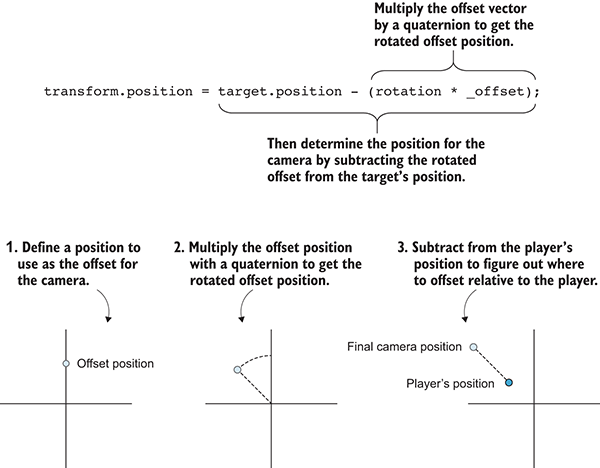

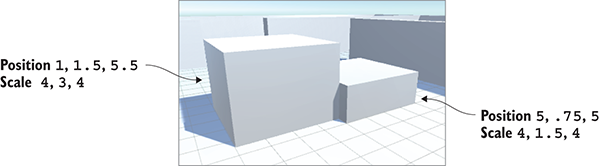

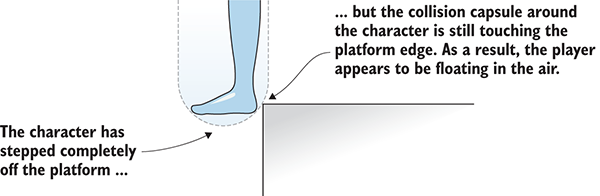

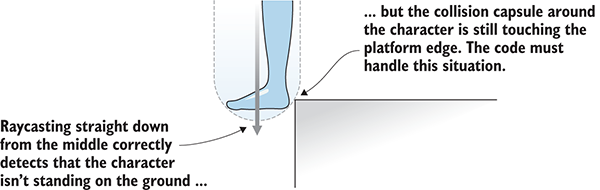

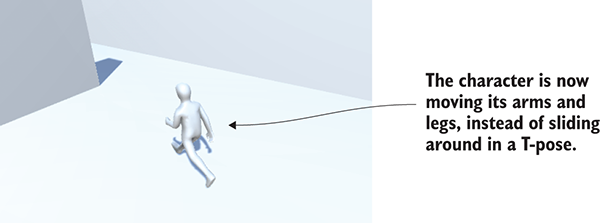

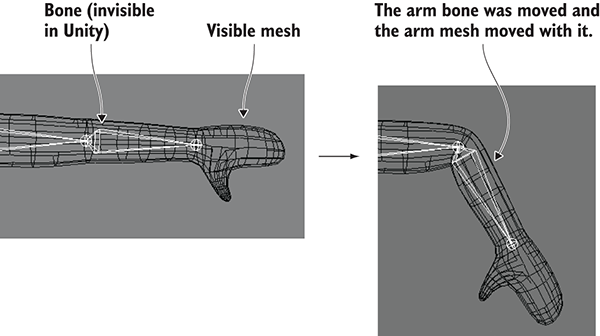

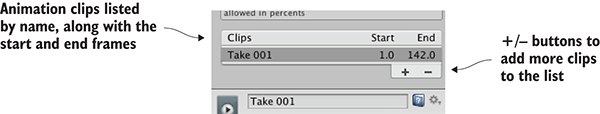

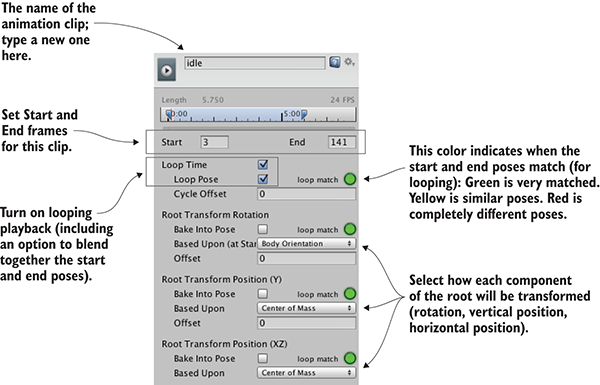

Chapter 8 shows how to create another movement demo in 3D, only seen from third-person perspective this time. Implementing third-person controls will demonstrate a number of key 3D math operations, and you’ll learn how to work with an animated character.

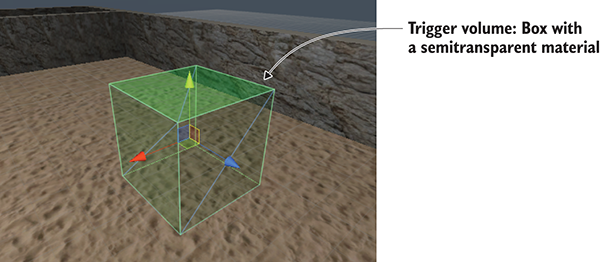

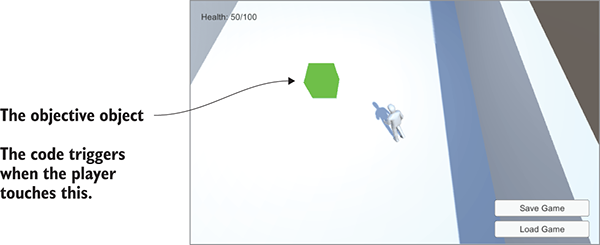

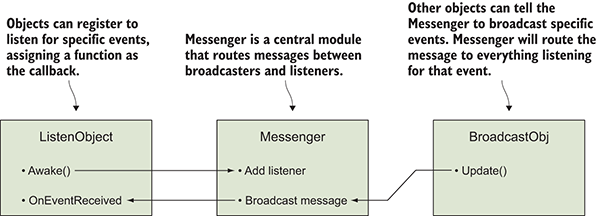

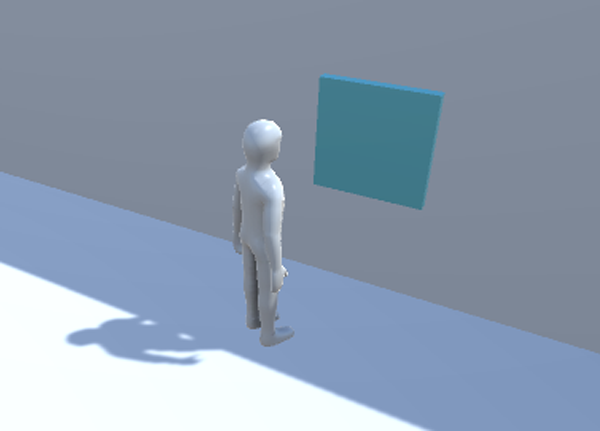

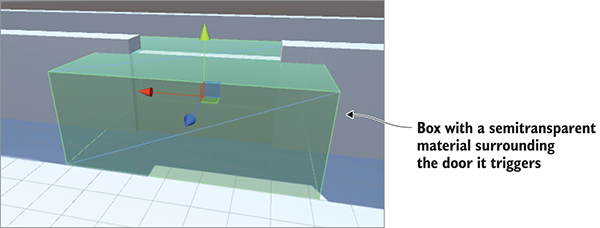

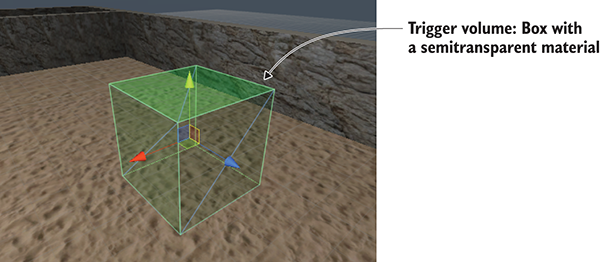

Chapter 9 goes over how to implement interactive devices and items within your game. The player will have a number of ways of operating these devices, including touching them directly, touching triggers within the game, or pressing a button on the controller.

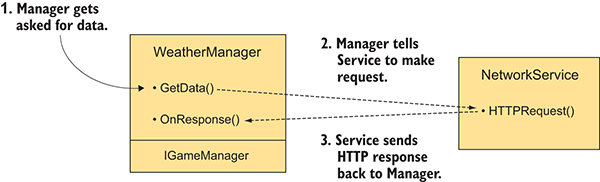

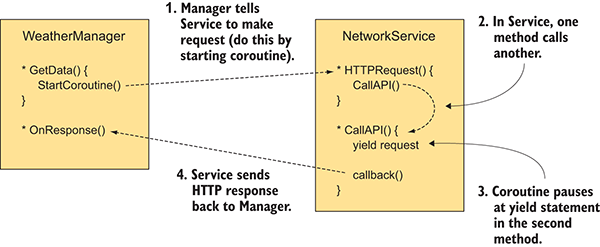

Chapter 10 covers how to communicate with the internet. You’ll learn how to send and receive data using standard internet technologies, like HTTP requests to get XML data from a server.

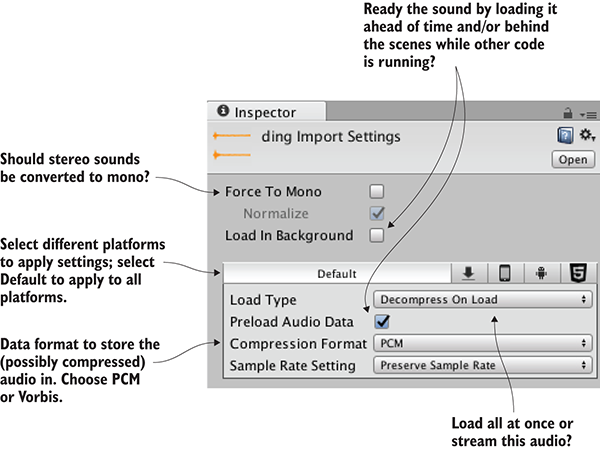

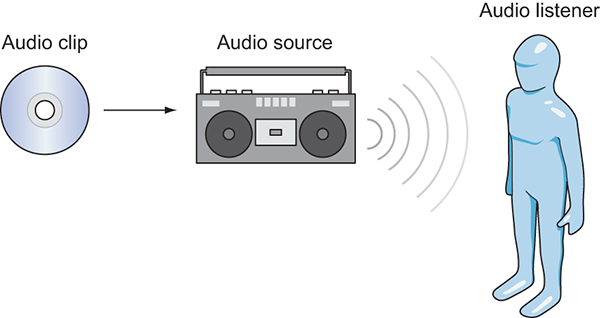

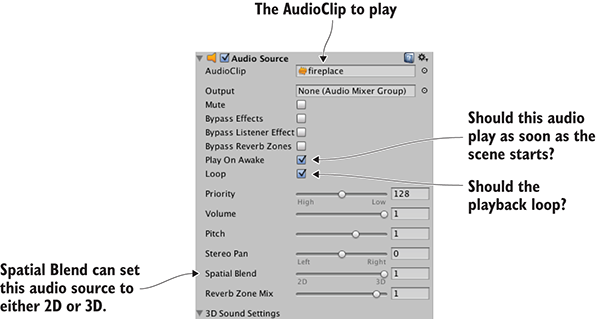

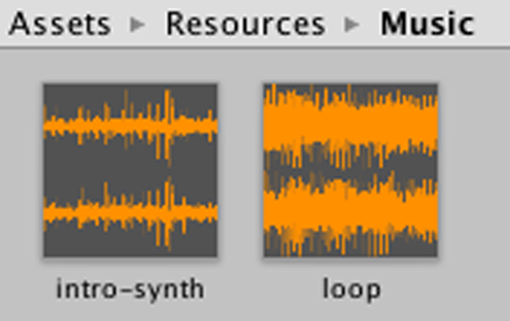

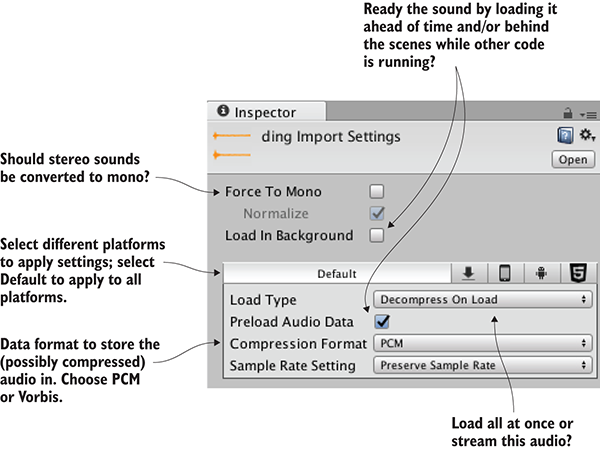

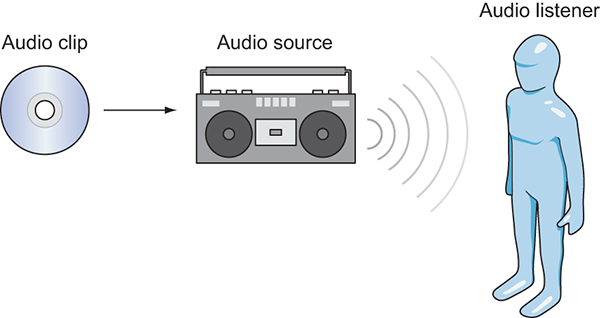

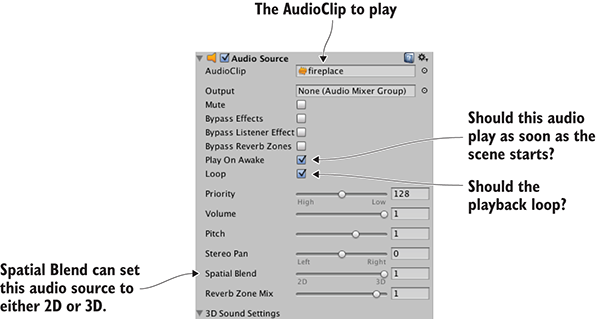

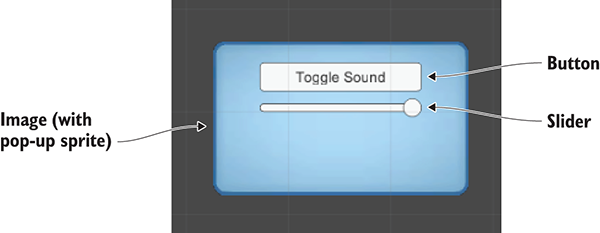

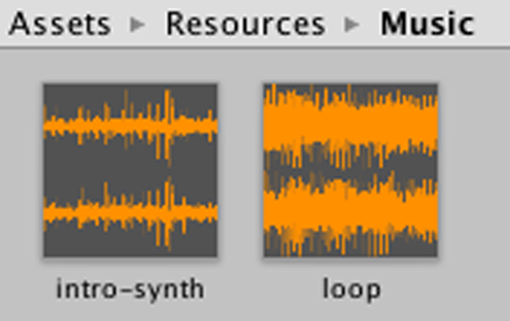

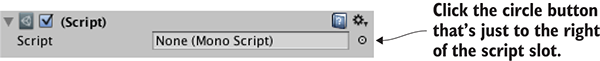

Chapter 11 teaches how to program audio functionality. Unity has great support for both short sound effects and long music tracks; both sorts of audio are crucial for almost all video games.

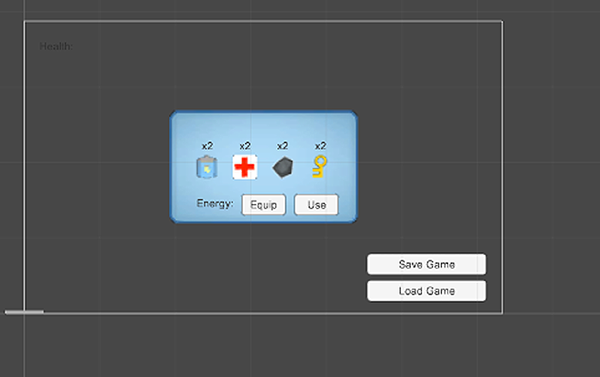

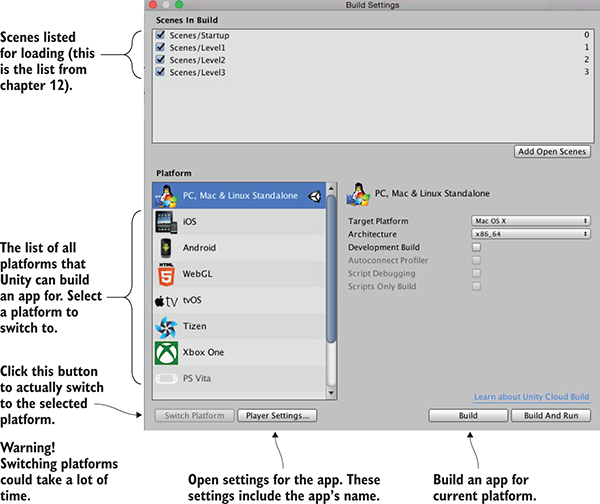

Chapter 12 walks you through bringing together pieces from different chapters into a single game. In addition, you’ll learn how to program point-and-click controls and how to save the player’s game.

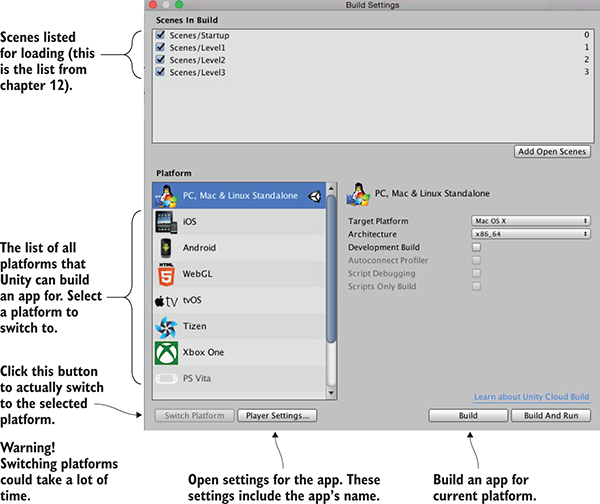

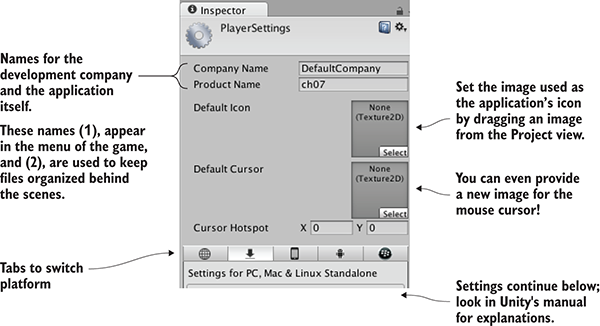

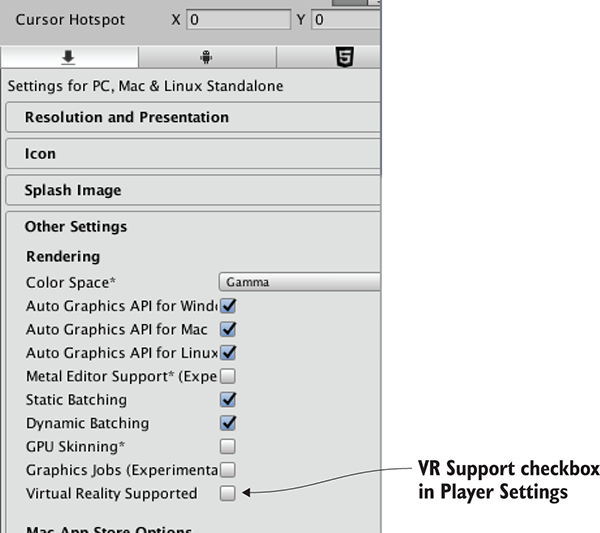

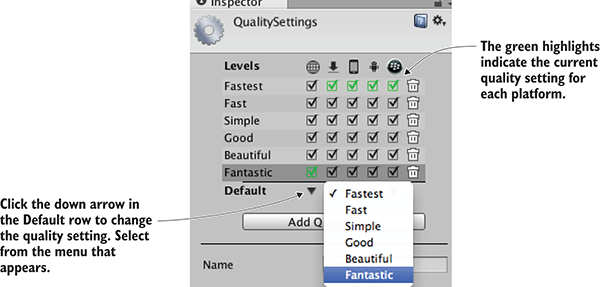

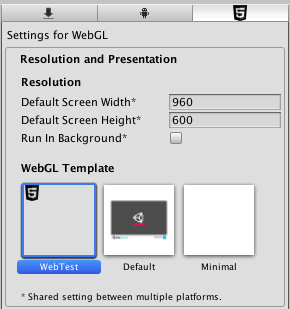

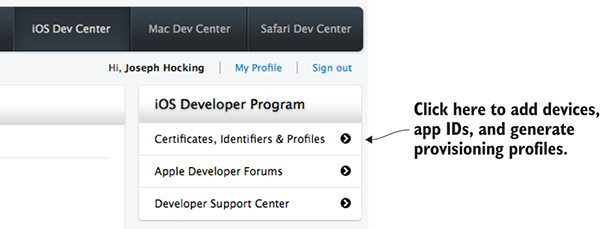

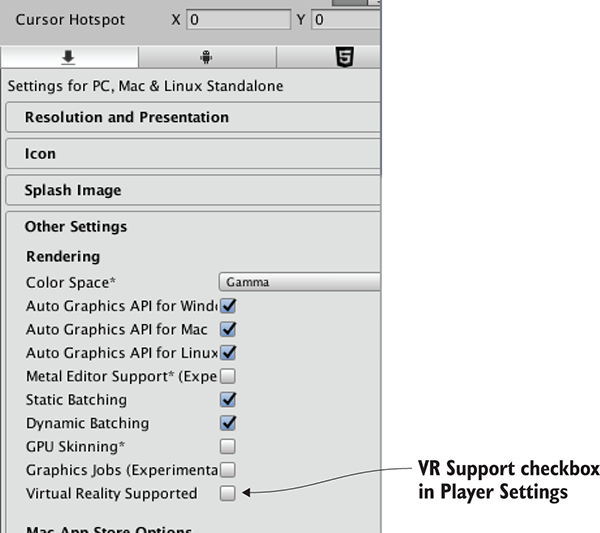

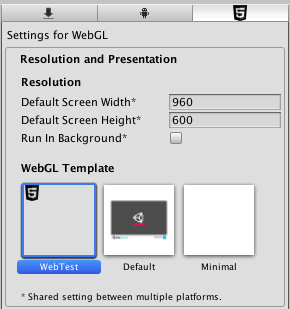

Chapter 13 goes over building the final app, with deployment to multiple platforms like desktop, web, mobile, and even VR. Unity is wonderfully platform-agnostic, enabling you to create games for every major gaming platform!

There are also four appendixes with additional information about scene navigation, external tools, Blender, and learning resources.

All the source code in the book, whether in code listings or snippets, is in a fixed-width font like this, which sets it off from the surrounding text. In most listings, the code is annotated to point out key concepts, and numbered bullets are sometimes used in the text to provide additional information about the code. The code is formatted so that it fits within the available page space in the book by adding line breaks and using indentation carefully.

The only software required is Unity; this book uses Unity 2017.1, which is the latest version as I write this. Certain chapters do occasionally discuss other pieces of software, but those are treated as optional extras and not core to what you’re learning.

The code listings sprinkled throughout the book generally show what to add or change in existing code files; unless it’s the first appearance of a given code file, don’t replace the entire file with subsequent listings. Although you can download complete working sample projects to refer to, you’ll learn best by typing out the code listings and only looking at the working samples for reference. Those downloads are available from the publisher’s website (www.manning.com/books/unity-in-action-second-edition) and on GitHub (https://github.com/jhocking/uia-2e).

Purchase of Unity in Action, Second Edition includes free access to a private web forum run by Manning Publications where you can make comments about the book, ask technical questions, and receive help from the author and from other users. To access the forum, go to https://forums.manning.com/forums/unity-in-action-second-edition. You can also learn more about Manning's forums and the rules of conduct at https://forums.manning.com/forums/about.

Manning’s commitment to our readers is to provide a venue where a meaningful dialogue between individual readers and between readers and the author can take place. It is not a commitment to any specific amount of participation on the part of the author, whose contribution to the forum remains voluntary (and unpaid). We suggest you try asking the author some challenging questions lest his interest stray! The forum and the archives of previous discussions will be accessible from the publisher’s website as long as the book is in print.

Joseph Hocking is a software engineer who specializes in interactive media development. He currently works for InContext Solutions and wrote the first edition while working for Synapse Games. He has also taught classes at the University of Illinois at Chicago, the School of the Art Institute of Chicago, and Columbia College Chicago. His website is www.newarteest.com.

The figure on the cover of Unity in Action, Second Edition is captioned “Habit of the Master of Ceremonies of the Grand Signior.” The Grand Signior was another name for a sultan of the Ottoman Empire. The illustration is taken from Thomas Jefferys’ A Collection of the Dresses of Different Nations, Ancient and Modern (4 volumes), London, published between 1757 and 1772. The title page states that these are hand-colored copperplate engravings, heightened with gum arabic. Thomas Jefferys (1719–1771) was called “Geographer to King George III.” An English cartographer who was the leading map supplier of his day, Jefferys engraved and printed maps for government and other official bodies and produced a wide range of commercial maps and atlases, especially of North America. His work as a mapmaker sparked an interest in local dress customs of the lands he surveyed, which are brilliantly displayed in this four-volume collection.

Fascination with faraway lands and travel for pleasure were relatively new phenomena in the late eighteenth century, and collections such as this one were popular, introducing both the tourist and the armchair traveler to the inhabitants of other countries. The diversity of the drawings in Jefferys’ volumes speaks vividly of the uniqueness and individuality of the world’s nations some 200 years ago. Dress codes have changed since then, and the diversity by region and country, so rich at the time, has faded away. It is now hard to tell the inhabitant of one continent apart from another. Perhaps, trying to view it optimistically, we have traded a cultural and visual diversity for a more varied personal life, or a more varied and interesting intellectual and technical life.

At a time when it is hard to tell one computer book from another, Manning celebrates the inventiveness and initiative of the computer business with book covers based on the rich diversity of regional life of two centuries ago, brought back to life by Jefferys’ pictures.

It’s time to take your first steps in using Unity. If you don’t know anything about Unity, that’s okay! I’m going to start by explaining what Unity is, including the fundamentals of how to program games in it. Then we’ll walk through a tutorial about developing a simple game in Unity. This first project will teach you a number of specific game development techniques, as well as giving you a good overview of how the process works.

Onward to chapter 1!

If you’re anything like me, you’ve had developing a video game on your mind for a long time. But it’s a big jump from playing games to making them. Numerous game development tools have appeared over the years, and we’re going to discuss one of the most recent and most powerful of these tools. Unity is a professional-quality game engine used to create video games targeting a variety of platforms. Not only is it a professional development tool used daily by thousands of seasoned game developers, it’s also one of the most accessible modern tools for novice game developers. Until recently, a newcomer to game development would face lots of imposing barriers right from the start, but Unity makes it easy to start learning these skills.

Because you’re reading this book, chances are you’re curious about computer technology and you’ve either developed games with other tools or built other kinds of software, such as desktop applications or websites. Creating a video game isn’t fundamentally different from writing any other kind of software; it’s mostly a difference of degree. For example, a video game is a lot more interactive than most websites, and thus involves very different sorts of code, but the skills and processes involved in creating both are similar. If you’ve already cleared the first hurdle on your path to learning game development, having learned the fundamentals of programming software, then your next step is to pick up some game development tools and translate that programming knowledge into the realm of gaming. Unity is a great choice of game development environment to work with.

To start, go to www.unity3d.com to download the software. This book uses Unity 2017.1, which is the latest version as of this writing. The URL is a leftover from Unity’s original focus on 3D games; support for 3D games remains strong, but Unity works great for 2D games as well, and this book covers both. Indeed, even when demonstrated on a 3D demo, many topics (saving data, playing audio, and so on) apply to both. Meanwhile, although there are also paid versions, the base version is free. Everything in this book works in the free version, and none of it requires the paid versions of Unity, which differ mainly in commercial licensing terms.

Let’s take a closer look at that description from the beginning of the chapter: Unity is a professional-quality game engine used to create video games targeting a variety of platforms. That’s a fairly straightforward answer to the straightforward question “What is Unity?” But what exactly does that answer mean, and why is Unity so great?

Game engines provide a plethora of features that are useful across many different games. A game implemented using a particular engine will get all those features, while adding custom art assets and gameplay code specific to that game. Unity has physics simulation, normal maps, screen space ambient occlusion (SSAO), dynamic shadows . . . and the list goes on. Many game engines boast such features, but Unity has two main advantages over similar cutting-edge game development tools: an extremely productive visual workflow and a high degree of cross-platform support.

The visual workflow is a fairly unique design, different from most other game development environments. Whereas other game development tools are often a complicated mishmash of disparate parts that must be wrangled, or perhaps a programming library that requires you to set up your own integrated development environment (IDE), build-chain, and whatnot, the development workflow in Unity is anchored by a sophisticated visual editor. The editor is used to lay out the scenes in your game and to tie together art assets and code into interactive objects. The beauty of this editor is that it enables professional-quality games to be built quickly and efficiently, giving developers tools to be incredibly productive, while still using an extensive list of the latest technologies in video gaming.

The editor is especially helpful for doing rapid iteration, honing the game through cycles of prototyping and testing. You can adjust objects in the editor and move things around even while the game is running. Plus, Unity allows you to customize the editor itself by writing scripts that add new features and menus to the interface.

Besides the editor’s significant productivity advantages, the other main strength of Unity’s toolset is a high degree of cross-platform support. Not only is Unity multiplatform in terms of deployment targets (you can deploy to PC, web, mobile, or consoles), but it’s also multiplatform in terms of development tools (you can develop a game on Windows or macOS). This platform-agnostic nature is largely because Unity started as Mac-only software and was later ported to Windows. The first version launched in 2005, but now Unity is past its fifth major version (with lots of minor updates released frequently). Initially, Unity supported only Mac for both development and deployment, but within months Unity had been updated to work on Windows as well. Successive versions gradually added more deployment platforms, such as a cross-platform web player in 2006, iPhone in 2008, Android in 2010, and even game consoles like Xbox and PlayStation. More recently, Unity has added deployment to WebGL, the new framework for 3D graphics in web browsers, and there’s even support for VR platforms like Oculus Rift and Vive. Few game engines support as many deployment targets as Unity, and none make deploying to multiple platforms so simple.

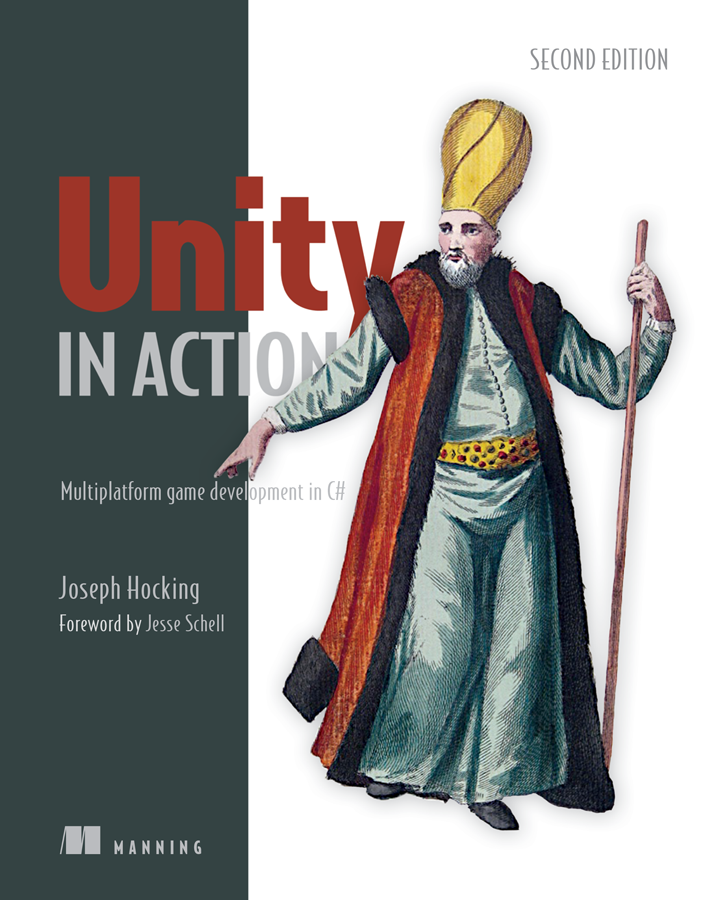

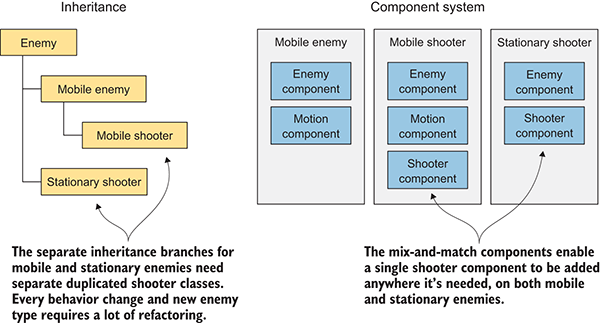

In addition to these main strengths, a third, more subtle, benefit comes from the modular component system used to construct game objects. In a component system, components are mix-and-match packets of functionality, and objects are built up as a collection of components, rather than as a strict hierarchy of classes. A component system is a different (and usually more flexible) approach to doing object-oriented programming (OOP), where game objects are constructed through composition rather than inheritance. Figure 1.1 diagrams an example comparison.

Figure 1.1 Inheritance vs. components

In a component system, objects exist on a flat hierarchy and different objects have different collections of components, rather than an inheritance structure where different objects are on completely different branches of the tree. This arrangement facilitates rapid prototyping, because you can quickly mix and match different components rather than having to refactor the inheritance chain when objects change.
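To make the contrast concrete, here’s a minimal sketch of composition in plain C#, written without any Unity dependency so it can run as an ordinary console program. The names Entity, IComponent, Mover, and Shooter are invented for this illustration; they aren’t Unity classes, and Unity’s own component system works differently in its details. The point is only that every object is the same kind of container and its behavior comes from whatever components it happens to hold.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical contract for a packet of functionality (not a Unity type).
public interface IComponent
{
    string Describe();
}

public sealed class Mover : IComponent
{
    public string Describe() => "moves";
}

public sealed class Shooter : IComponent
{
    public string Describe() => "shoots";
}

// Every object is the same flat container; only its components differ.
public sealed class Entity
{
    private readonly List<IComponent> components = new List<IComponent>();

    public string Name { get; }

    public Entity(string name)
    {
        Name = name;
    }

    // Attaches a component and returns this entity so calls can be chained.
    public Entity Add(IComponent component)
    {
        components.Add(component);
        return this;
    }

    // Detaches every component of type T and returns how many were removed.
    public int Remove<T>() where T : IComponent
    {
        return components.RemoveAll(c => c is T);
    }

    public bool Has<T>() where T : IComponent
    {
        return components.OfType<T>().Any();
    }

    public string Describe()
    {
        return Name + ": " + string.Join(", ", components.Select(c => c.Describe()));
    }
}

public static class Demo
{
    public static void Main()
    {
        var enemy = new Entity("Enemy").Add(new Mover()).Add(new Shooter());
        var turret = new Entity("Turret").Add(new Shooter());

        Console.WriteLine(enemy.Describe());   // Enemy: moves, shoots
        Console.WriteLine(turret.Describe());  // Turret: shoots

        // A design change is an edit to one object, not to a class tree.
        turret.Add(new Mover());
        Console.WriteLine(turret.Describe());  // Turret: shoots, moves
    }
}
```

With inheritance, giving the turret the ability to move would likely mean reshuffling base classes; here it’s a single Add call, which is the kind of quick mix-and-match that makes prototyping fast.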

Although you could write code to implement a custom component system if one didn’t exist, Unity already has a robust component system, and this system is even integrated with the visual editor. Rather than only being able to manipulate components in code, you can attach and detach components within the visual editor. Meanwhile, you aren’t limited to only building objects through composition; you still have the option of using inheritance in your code, including all the best-practice design patterns that have emerged based on inheritance.

Unity has many advantages that make it a great choice for developing games, and I highly recommend it, but I’d be remiss if I didn’t mention its weaknesses. In particular, the combination of the visual editor and sophisticated coding, though very effective with Unity’s component system, is unusual and can create difficulties. In complex scenes, you can lose track of which objects in the scene have specific components attached. Unity does provide a search feature for finding attached scripts, but it could be more robust; sometimes you still encounter situations where you need to manually inspect everything in the scene in order to find script linkages. This doesn’t happen often, but when it does happen, it can be tedious.

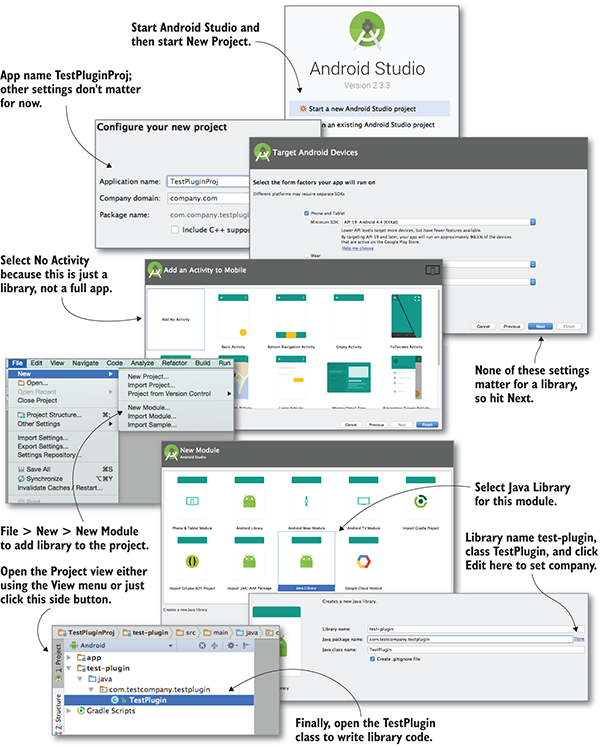

Another disadvantage that can be surprising and frustrating for experienced programmers is that Unity doesn’t support linking in external code libraries. Necessary libraries must be manually copied into every project that they’ll be used in, as opposed to referencing one central shared location. The lack of a central location for libraries can make it awkward to share functionality between multiple projects. This disadvantage can be worked around with clever use of version control systems, but Unity doesn’t support external code libraries out of the box.

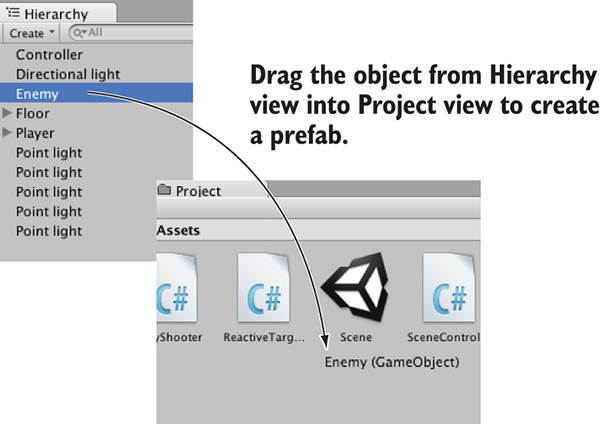

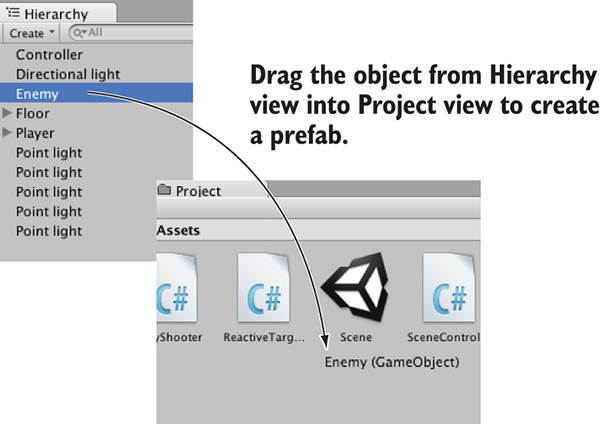

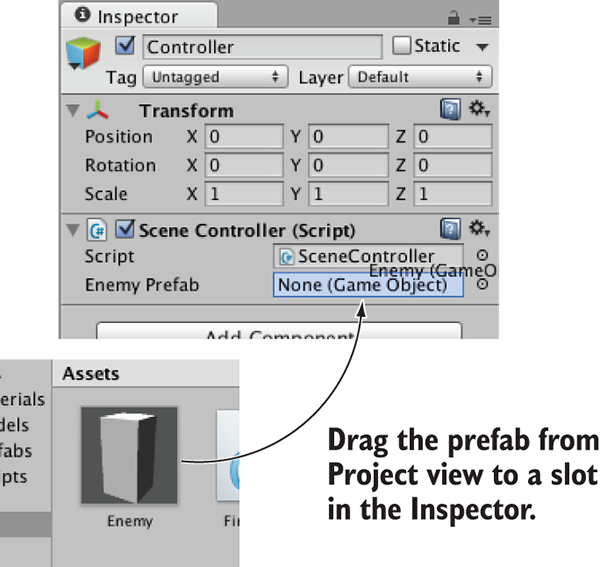

A third weakness has to do with working with prefabs. Prefabs are a concept specific to Unity and are explained in chapter 3; for now, all you need to know is that prefabs are a flexible approach to visually defining interactive objects. The concept of prefabs is both powerful and unique to Unity (and yes, the concept of prefabs only makes sense in the context of Unity’s component system), but it can be surprisingly awkward to edit prefabs. Considering that prefabs are such a useful and central part of working with Unity, I hope that future versions improve the workflow for editing them.

You’ve heard about the pros and cons of Unity, but you might still need convincing that the development tools in Unity can give first-rate results. Visit the Unity gallery at http://unity3d.com/showcase/gallery to see a constantly updated list of hundreds of games and simulations developed using Unity. This section explores a handful of games, showcasing a number of genres and deployment platforms.

Because the Unity editor itself runs on Windows and Mac, those desktop platforms are often the most straightforward deployment targets. Here are a couple of examples of desktop games in different genres:

Figure 1.2 Guns of Icarus Alliance

Figure 1.3 Gone Home

Unity can also deploy games to mobile platforms like iOS (iPhones and iPads) and Android (phones and tablets). Here are three examples of mobile games in different genres:

Figure 1.4 Lara Croft GO

Figure 1.5 INKS

Figure 1.6 Tyrant Unleashed

Unity can even deploy to game consoles, although the developer must obtain licensing from Sony, Microsoft, or Nintendo. Because of this requirement and Unity’s easy cross-platform deployment, console games are often available on desktop computers as well. Here are a couple of examples of console games in different genres:

Figure 1.7 Yooka-Laylee

Figure 1.8 Shadow Tactics

As you can see from these examples, Unity’s strengths can definitely translate into commercial-quality games. But even with Unity’s significant advantages over other game development tools, newcomers may misunderstand the involvement of programming in the development process. Unity is often portrayed as a list of features with no programming required, which is a misleading view that won’t teach people what they need to know in order to produce commercial titles. Though it’s true that you can click together a fairly elaborate prototype using pre-existing components even without a programmer being involved (which is itself a pretty big feat), rigorous programming is required to move beyond an interesting prototype to a polished game ready for release.

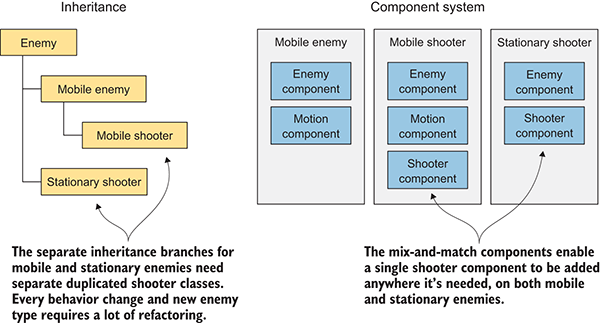

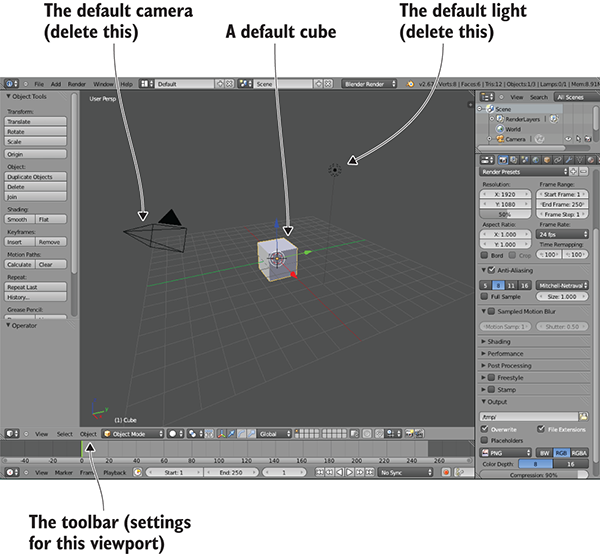

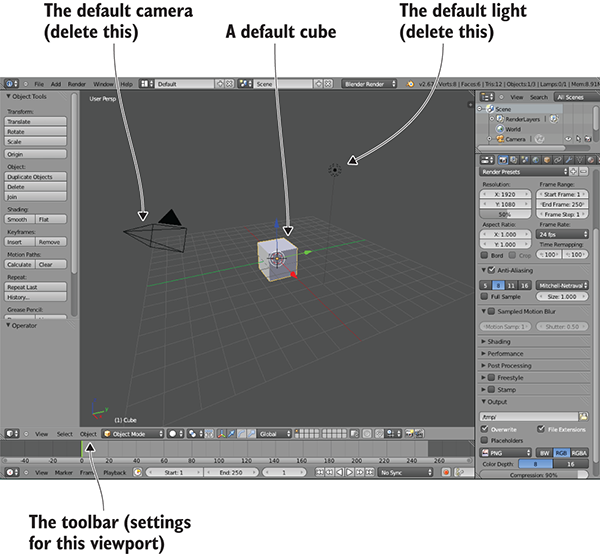

The previous section talked a lot about the productivity benefits of Unity’s visual editor, so let’s go over what the interface looks like and how it operates. If you haven’t done so, download the program from www.unity3d.com/ and install it on your computer (be sure to include Example Project if that’s unchecked in the installer). After you install it, launch Unity to start exploring the interface.

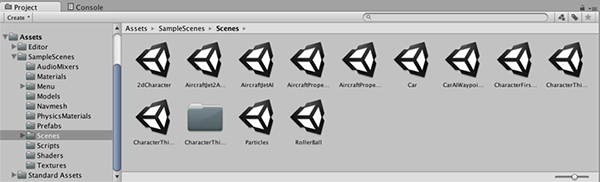

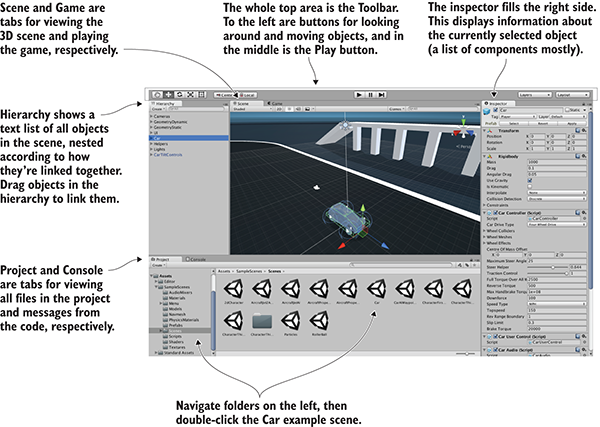

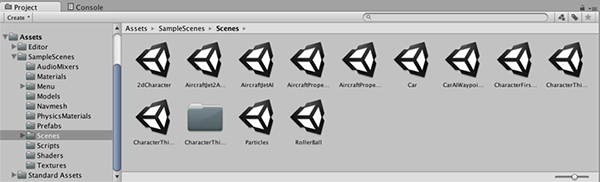

You probably want an example to look at, so open the included example project; a new installation should open the example project automatically, but you can also select File > Open Project to open it manually. The example project is installed in the shared user directory, which is something like C:\Users\Public\Documents\Unity Projects\ on Windows, or /Users/Shared/Unity/ on macOS. You may also need to open the example scene, so double-click the Car scene file (highlighted in figure 1.9; scene files have the Unity cube icon), which is found by going to SampleScenes/Scenes/ in the file browser at the bottom of the editor. You should see a screen similar to figure 1.9.

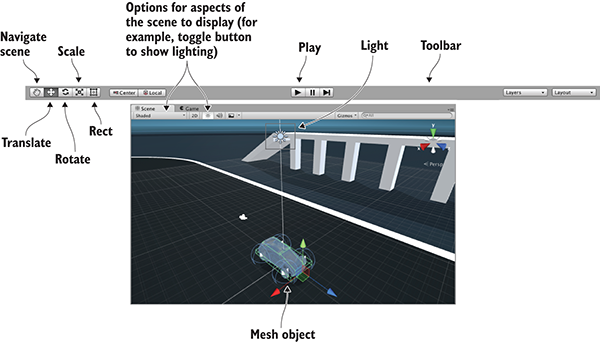

Figure 1.9 Parts of the interface in Unity

The interface in Unity is split into sections: the Scene tab, the Game tab, the Toolbar, the Hierarchy tab, the Inspector, the Project tab, and the Console tab. Each section has a different purpose, but all are crucial to the game building lifecycle.

This is the default layout in Unity; all of the views are in tabs and can be moved around or resized, docking in different places on the screen. Later, you can play around with customizing the layout, but for now, the default layout is the best way to understand what all the views do.

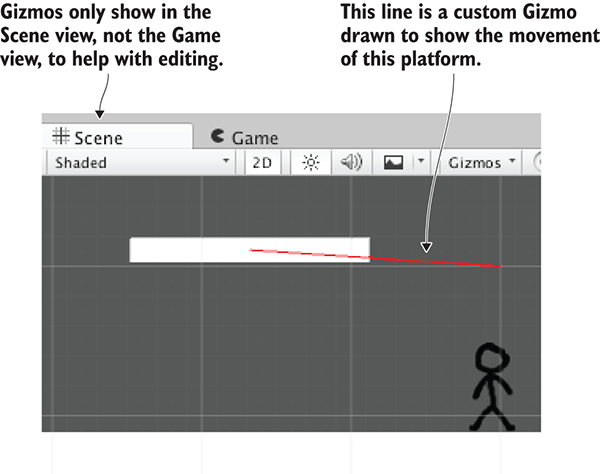

The most prominent part of the interface is the Scene view in the middle. This is where you can see what the game world looks like and move objects around. Mesh objects in the scene appear as, well, their mesh (defined in a moment). You can also see a number of other objects in the scene, represented by icons and colored lines: cameras, lights, audio sources, collision regions, and so forth. Note that the view you’re seeing here isn’t the same as the view in the running game—you’re able to look around the scene at will without being constrained to the game’s view.

The Game view isn’t a separate part of the screen but rather another tab located right next to Scene (look for tabs at the top left of views). A couple of places in the interface have multiple tabs like this; if you click a different tab, the view is replaced by the new active tab. When the game is running, what you see in this view is the game. It isn’t necessary to manually switch tabs every time you run the game, because the view automatically switches to Game when the game starts.

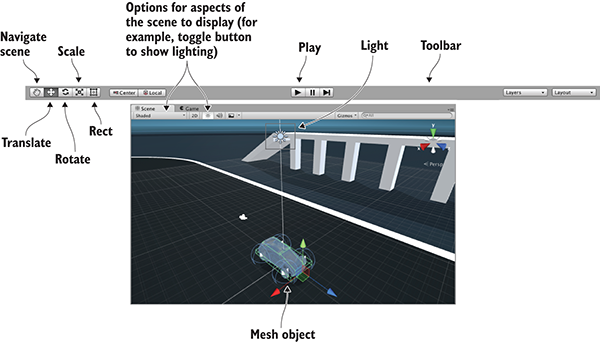

Speaking of running the game, that’s as simple as hitting the Play button just above the Scene view. That whole top section of the interface is referred to as the Toolbar, and Play is located right in the middle. Figure 1.10 breaks apart the full editor interface to show only the Toolbar at the top, as well as the Scene/Game tabs right underneath.

Figure 1.10 Editor screenshot cropped to show Toolbar, Scene, and Game

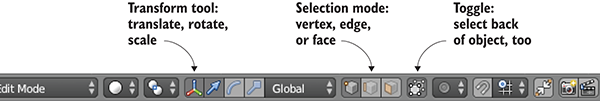

At the left-hand side of the Toolbar are buttons for scene navigation and transforming objects—how to look around the scene and how to move objects. I suggest you spend time practicing these, because they are two of the most important activities you’ll do in Unity’s visual editor. (They’re so important that they get their own section following this one.) The right-hand side of the Toolbar is where you’ll find drop-down menus for layouts and layers. As mentioned earlier, the layout of Unity’s interface is flexible, so the Layouts menu allows you to switch between layouts. As for the Layers menu, that’s advanced functionality that you can ignore for now (layers will be mentioned in future chapters).

Scene navigation is primarily done using the mouse, along with a few modifier keys that change what the mouse does. The three main navigation maneuvers are Move, Orbit, and Zoom. The specific mouse movements vary depending on which mouse you’re using, so they are described in appendix A. All three maneuvers involve clicking-and-dragging while holding down a combination of Alt (or Option on Mac) and Ctrl. Spend a few minutes moving around in the scene to understand what Move, Orbit, and Zoom do.

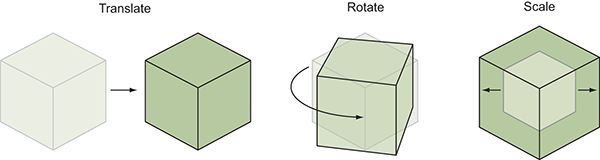

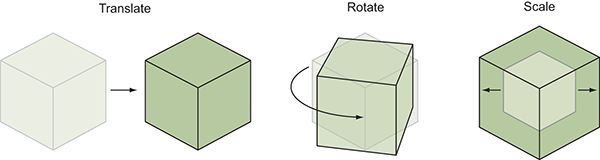

Transforming objects is also done through three main maneuvers, and the three scene navigation moves are analogous to the three transforms: Translate, Rotate, and Scale (figure 1.11 demonstrates the transforms on a cube).

Figure 1.11 Applying the three transforms: Translate, Rotate, and Scale. (The lighter lines are the previous state of the object before it was transformed.)

When you select an object in the scene, you can then move it around (the mathematically accurate technical term is translate), rotate it, or scale how big it is. Relating back to scene navigation maneuvers, Move is when you Translate the camera, Orbit is when you Rotate the camera, and Zoom is when you Scale the camera. Besides the buttons on the Toolbar, you can switch between these functions by pressing W, E, or R on the keyboard. When you activate a transform, you’ll notice that a set of color-coded arrows or circles appears over the object in the scene; this is the Transform gizmo, and you can click-and-drag this gizmo to apply the transformation.

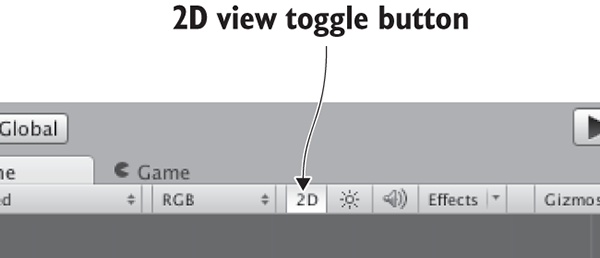

There’s a fourth tool next to the transform buttons. Called the Rect tool, it’s designed for use with 2D graphics. This one tool combines movement, rotation, and scaling. These operations have to be separate tools in 3D but are combined in 2D because there’s one less dimension to worry about. Unity has a host of other keyboard shortcuts for speeding up a variety of tasks. Refer to appendix A to learn about them. And with that, on to the remaining sections of the interface!

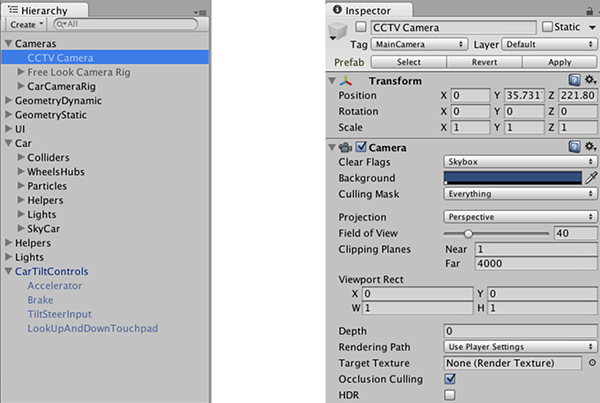

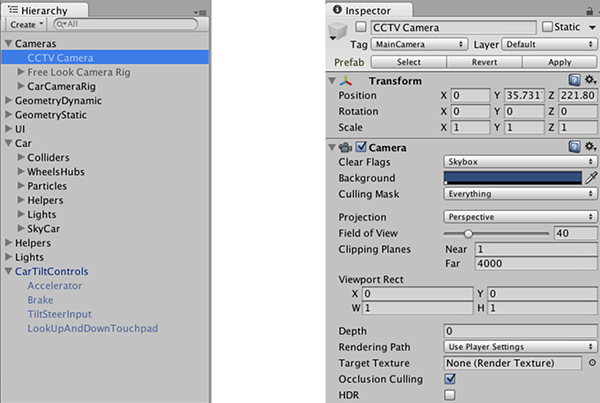

Looking at either side of the screen, you’ll see the Hierarchy tab on the left and the Inspector tab on the right (see figure 1.12). Hierarchy is a list view with the name of every object in the scene listed, with the names nested together according to their hierarchy linkages in the scene. Basically, it’s a way of selecting objects by name instead of hunting them down and clicking them within Scene. The Hierarchy linkages group objects together, visually grouping them like folders and allowing you to move the entire group as one.

Figure 1.12 Editor screenshot cropped to show the Hierarchy and Inspector tabs

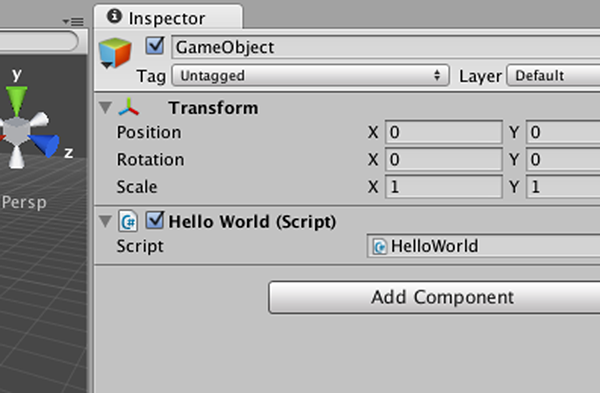

The Inspector shows you information about the currently selected object. Select an object and the Inspector is then filled with information about that object. The information shown is pretty much a list of components, and you can even attach or remove components from objects. All game objects have at least one component, Transform, so you’ll always at least see information about positioning and rotation in the Inspector. Often, objects will have several components listed here, including scripts attached to them.

At the bottom of the screen, you’ll see Project and Console (see figure 1.13). As with Scene and Game, these aren’t two separate portions of the screen, but rather tabs that you can switch between. Project shows all the assets (art, code, and so on) in the project. Specifically, on the left-hand side of the view is a listing of the directories in the project; when you select a directory, the right side of the view shows the individual files in that directory. The directory listing in Project is similar to the list view in Hierarchy, but Hierarchy shows objects in the scene; Project shows files that may not be contained within any specific scene (including scene files—when you save a scene, it shows up in Project!).

Figure 1.13 Editor screenshot cropped to show the Project and Console tabs

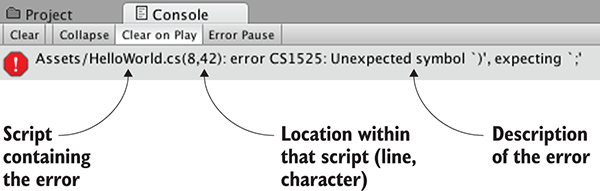

The Console is the place where messages from the code show up. Some of these messages will be debug output that you placed deliberately, but Unity also emits error messages if it encounters problems in the script you wrote.

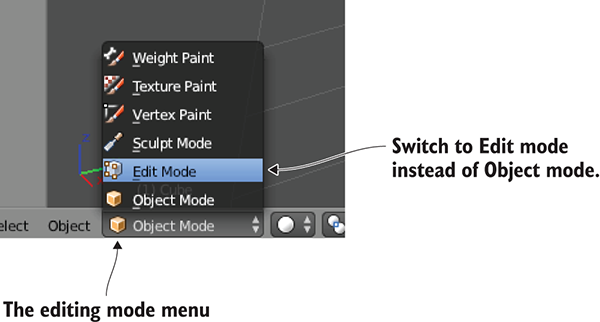

Now let’s look at how the process of programming works in Unity. Although art assets can be laid out in the visual editor, you need to write code to control them and make the game interactive. Unity supports multiple programming languages, in particular JavaScript and C#. Both have their pros and cons, but you’ll be using C# throughout this book.

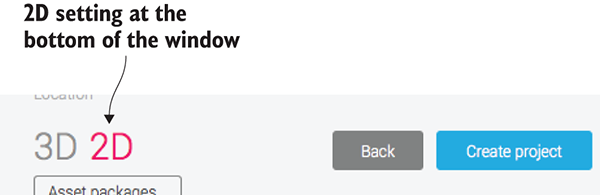

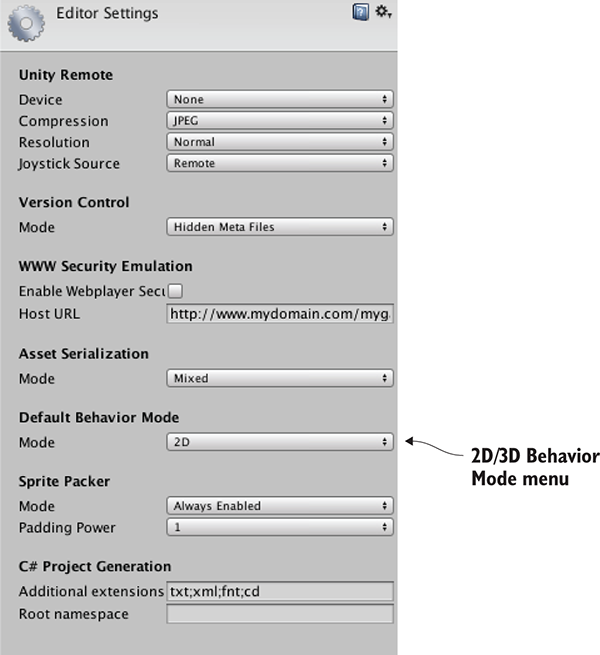

Let’s walk through an example of writing and running some code. Launch Unity and create a new project; choose New in Unity’s starting window, or File > New Project if Unity was already running. Type a name for the project, leave the default 3D setting (future chapters will mention 2D), and then choose where you want to save it. A Unity project is simply a directory full of various asset and settings files, so save the project anywhere on your computer. Click Create Project and then Unity will briefly disappear while it sets up the project directory.

When Unity reappears, you’ll be looking at a blank project. Next, let’s discuss how programs get executed in Unity.

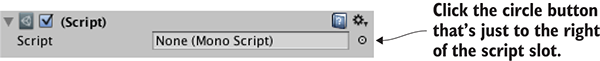

All code execution in Unity starts from code files linked to an object in the scene. Ultimately, it’s all part of the component system described earlier; game objects are built up as a collection of components, and that collection can include scripts to execute.

As you’ve probably surmised from this description, in Unity, scripts are components—not all scripts, mind you, only scripts that inherit from MonoBehaviour, the base class for script components. MonoBehaviour defines the invisible groundwork for how components attach to game objects, and (as shown in Listing 1.1) inheriting from it provides a couple of automatically run methods that you can implement. Those methods include Start(), called once when the object becomes active (which is generally as soon as the level with that object has loaded), and Update(), which is called every frame. Your code is run when you put it inside these predefined methods.

Listing 1.1 Code template for a basic script component

    using UnityEngine;
    using System.Collections;
    using System.Collections.Generic;

    public class HelloWorld : MonoBehaviour {
        void Start() {
            // do something once
        }

        void Update() {
            // do something every frame
        }
    }

This is what the file contains when you create a new C# script: the minimal boilerplate code that defines a valid Unity component. Unity has a script template tucked away in the bowels of the application, and when you create a new script it copies that template and renames the class to match the name of the file (which is HelloWorld.cs in my case). There are also empty shells for Start() and Update(), because those are the two most common places to call your custom code from.

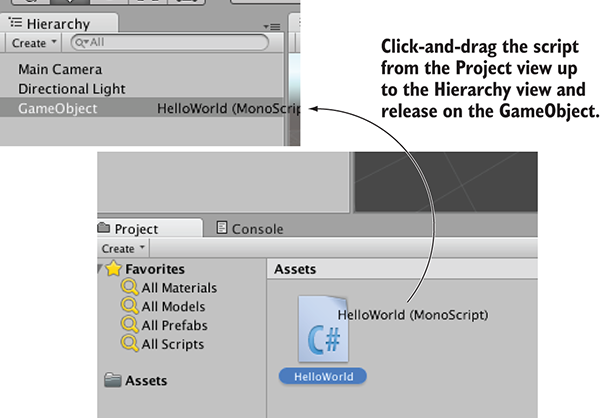

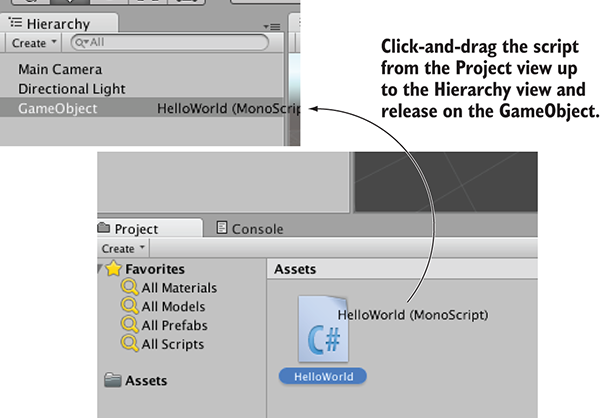

To create a script, select C# Script from the Create menu, which you access either under the Assets menu (note that Assets and GameObject both have listings for Create but they’re different menus) or by right-clicking in the Project view. Type in a name for the new script, such as HelloWorld. As explained later in the chapter (see figure 1.15), you’ll click-and-drag this script file onto an object in the scene. Double-click the script and it’ll automatically be opened in another program for editing, as discussed next.

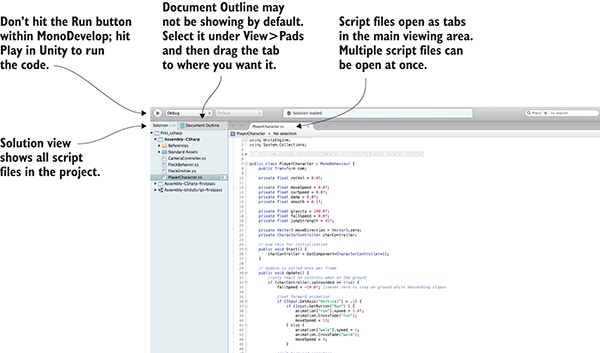

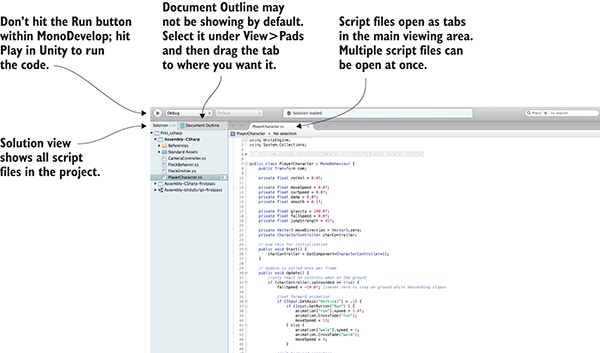

Programming isn’t done within Unity exactly, but rather code exists as separate files that you point Unity to. Script files can be created within Unity, but you still need to use a text editor or IDE to write all the code within those initially empty files. Unity comes bundled with MonoDevelop, an open source, cross-platform IDE for C# (figure 1.14 shows what it looks like). You can visit www.monodevelop.com/ to learn more about this software, but the version to use is the version bundled along with Unity, rather than a version downloaded from its website, because some modifications were made to the base software in order to better integrate it with Unity.

Figure 1.14 Parts of the interface in MonoDevelop

Because C# originated as a Microsoft product, you may be wondering whether you can use Visual Studio to do programming for Unity. The short answer is yes, you can. Support tools are available for using Visual Studio with Unity (particularly so that debugging and breakpoints work properly). To see if this support is already installed, check the Debug menu for the option Attach Unity Debugger. If it isn’t installed, simply run the Visual Studio Installer to modify your install and look for the Unity game development module.

I generally use MonoDevelop, but if you’re already using Visual Studio to do programming, then you could keep using it and not have any problems following along with this book. (Beyond this introductory chapter, I’m not going to talk about the IDE.) Tying your workflow to Windows, though, would run counter to one of the biggest advantages of using Unity. Although C# originated as a Microsoft product, and thus only worked on Windows with the .NET Framework, C# has now become an open language standard and there’s a significant cross-platform framework: Mono. Unity uses Mono for its programming backbone, and using MonoDevelop allows you to keep the entire development workflow cross-platform.

Always keep in mind that, although the code is written in Visual Studio or MonoDevelop, the code isn’t run there. The IDE is pretty much a fancy text editor, and the code is run when you hit Play within Unity.

All right, you already have an empty script in the project, but you also need an object in the scene to attach the script to. Recall figure 1.1 depicting how a component system works; a script is a component, so it needs to be set as one of the components on an object.

Select GameObject > Create Empty, and a blank GameObject will appear in the Hierarchy list. Now drag the script from the Project view over to the Hierarchy view and drop it on the empty GameObject. As shown in figure 1.15, Unity will highlight valid places to drop the script, and dropping it on the GameObject will attach the script to that object. To verify that the script is attached to the object, select the object and look at the Inspector view. You should see two components listed: the Transform component, which is the basic position/rotation/scale component all objects have and which can’t be removed, and below that, your script.

Figure 1.15 How to link a script to a GameObject

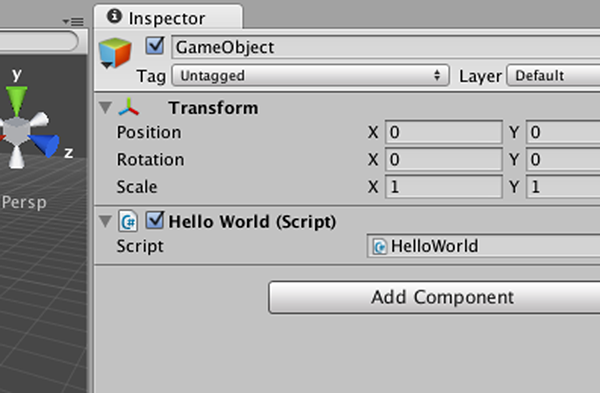

When a script is linked to an object, you’ll see something like figure 1.16, with the script showing up as a component in the Inspector. Now the script will execute when you play the scene, although nothing is going to happen yet, because you haven’t written any code. Let’s do that next!

Figure 1.16 Linked script being displayed in the Inspector

Open the script in MonoDevelop to get back to Listing 1.1. The classic place to start when learning a new programming environment is having it print the text “Hello World!”, so add this line inside the Start() method.

Listing 1.2 Adding a console message

    ...
    void Start() {
        Debug.Log("Hello World!");
    }
    ...

The Debug.Log() command prints a message to the Console view in Unity. That line goes in the Start() method because, as was explained earlier, that method is called as soon as the object becomes active; here, Start() will be called once, as soon as you hit Play in the editor. Once you’ve added the log command to your script (be sure to save the script), hit Play in Unity and switch to the Console view. You’ll see the message “Hello World!” appear. Congratulations, you’ve written your first Unity script! Of course, the code will be more elaborate in later chapters, but this is an important first step.
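To make the difference between Start() and Update() visible in the Console, here is a minimal sketch that logs from both methods. The class name LifecycleLogger and the choice to log every 60th frame are assumptions made for illustration; only Debug.Log(), Start(), and Update() come from the discussion above.

```csharp
using UnityEngine;

// Hypothetical component for illustration; save it as LifecycleLogger.cs
// so that the file name matches the class name.
public class LifecycleLogger : MonoBehaviour {
    // Counts how many times Update() has run since the object became active.
    private int updateCount = 0;

    void Start() {
        // Runs once, before the first Update() call.
        Debug.Log("Start() was called");
    }

    void Update() {
        updateCount++;

        // Log only every 60th call so the Console isn't flooded every frame.
        if (updateCount % 60 == 0) {
            Debug.Log("Update() has now run " + updateCount + " times");
        }
    }
}
```

Attach it to the empty GameObject the same way as HelloWorld and hit Play: the Start() message should appear once, while the Update() messages keep arriving for as long as the scene is playing.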

You could now save the scene; that would create a .unity file with the Unity icon. The scene file is a snapshot of everything currently loaded in the game so that you can reload this scene later. It’s hardly worth saving this scene because it’s so simple (a single empty GameObject), but if you don’t save the scene, then you’ll find it empty again when you come back to the project after quitting Unity.

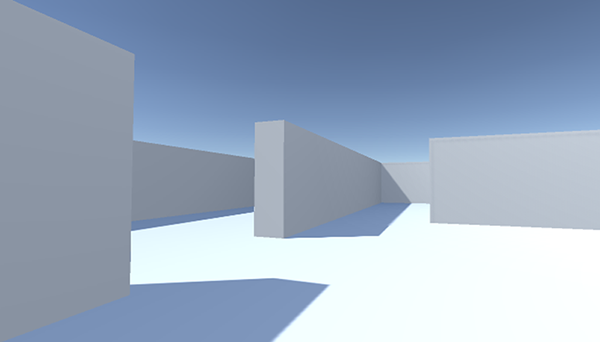

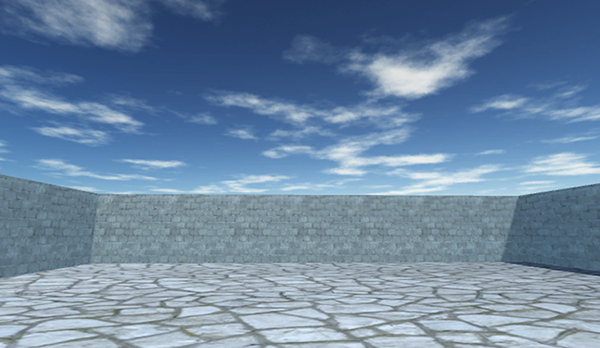

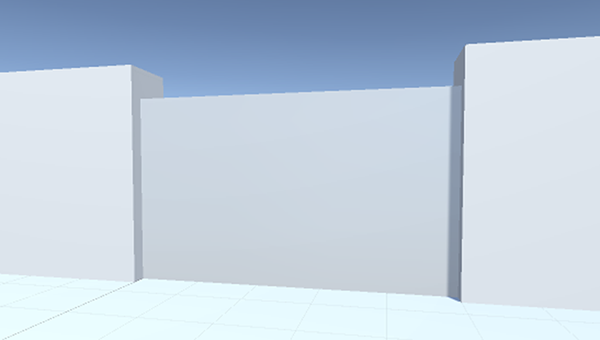

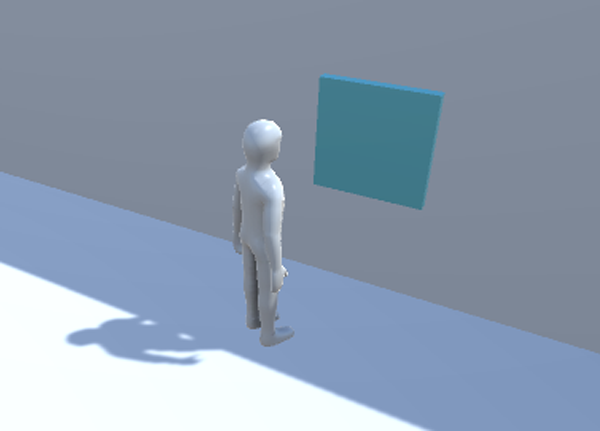

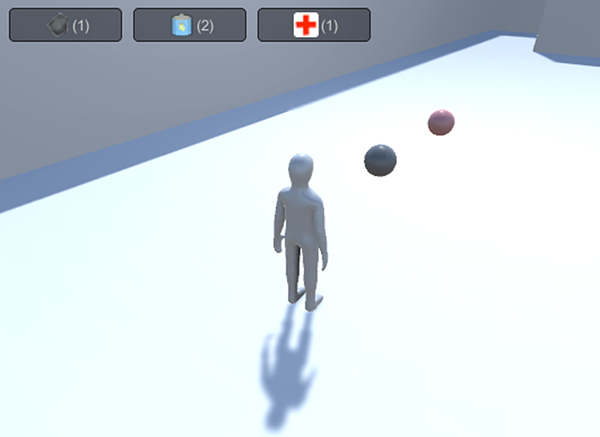

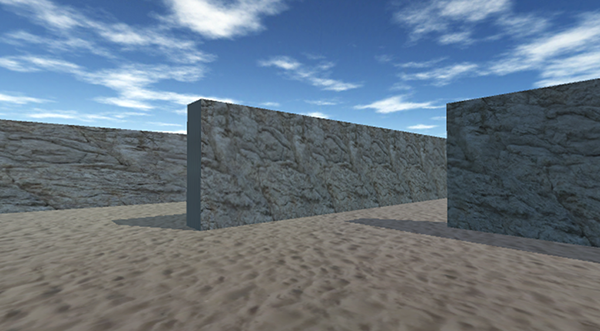

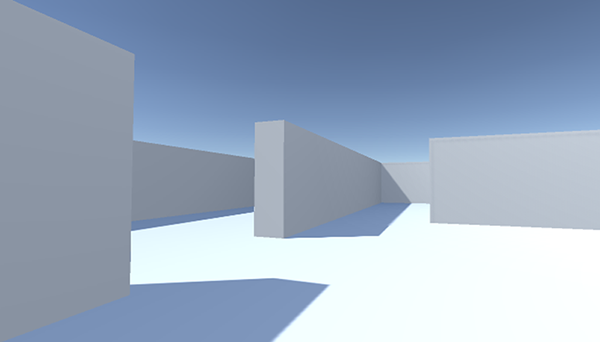

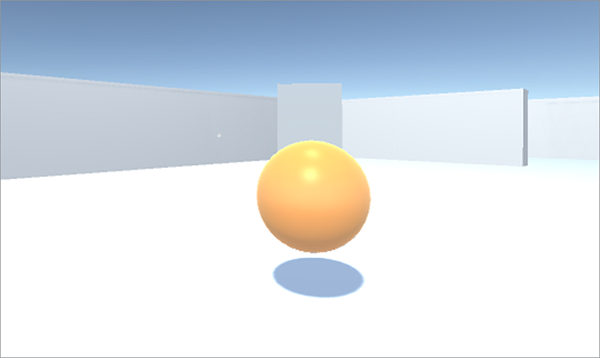

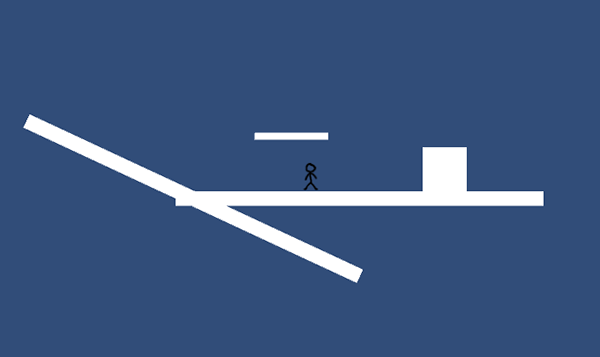

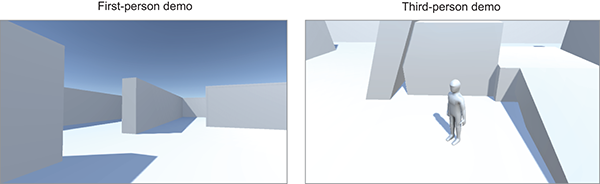

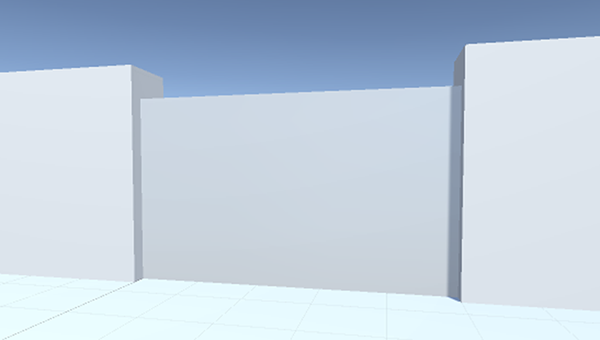

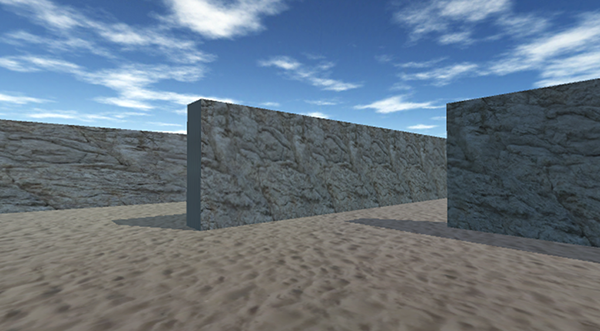

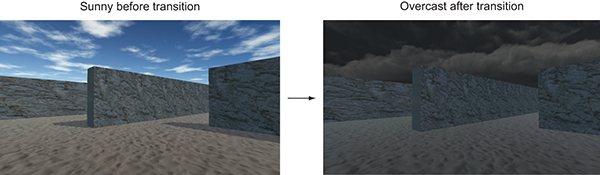

Chapter 1 concluded with the traditional “Hello World!” introduction to a new programming tool; now it’s time to dive into a nontrivial Unity project, a project with interactivity and graphics. You’ll put some objects into a scene and write code to enable a player to walk around that scene. Basically, it’ll be Doom without the monsters (something like what figure 2.1 depicts). The visual editor in Unity enables new users to start assembling a 3D prototype right away, without needing to write a lot of boilerplate code first (for things like initializing a 3D view or establishing a rendering loop).

It’s tempting to immediately start building the scene in Unity, especially with such a simple (in concept!) project. But it’s always a good idea to pause at the beginning and plan out what you’re going to do, and this is especially important right now because you’re new to the process.

Figure 2.1 Screenshot of the 3D demo (basically, Doom without the monsters)

Unity makes it easy for a newcomer to get started, but let’s go over a couple of points before you build the complete scene. Even when working with a tool as flexible as Unity, you do need to have some sense of the goal you’re working toward. You also need a grasp of how 3D coordinates operate, or you could get lost as soon as you try to position an object in the scene.

Before you start programming anything, you always want to pause and ask yourself, “So what am I building here?” Game design is a huge topic in itself, with many impressively large books focused on how to design a game. Fortunately for our purposes, you only need a brief outline of this simple demo in mind in order to develop a basic learning project. These initial projects won’t be terribly complex designs anyway, in order to avoid distracting you from learning programming concepts; you can (and should!) worry about higher-level design issues after you’ve mastered the fundamentals of game development.

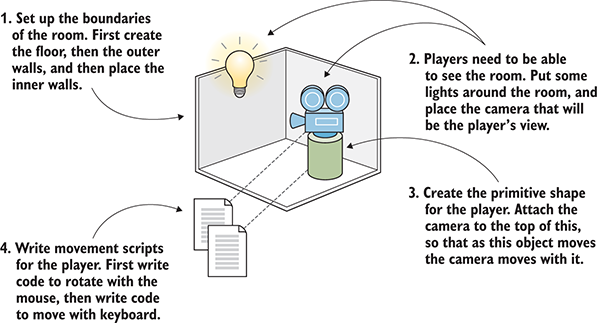

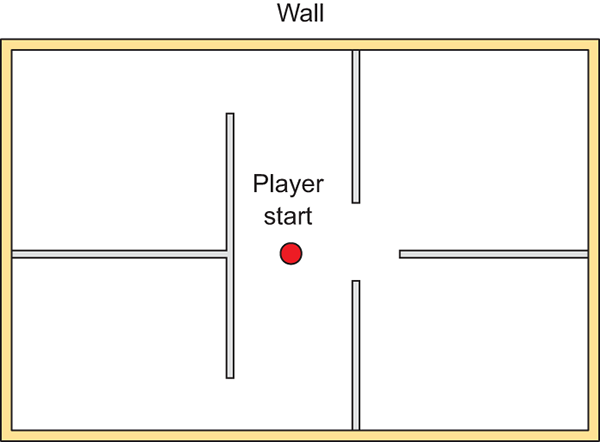

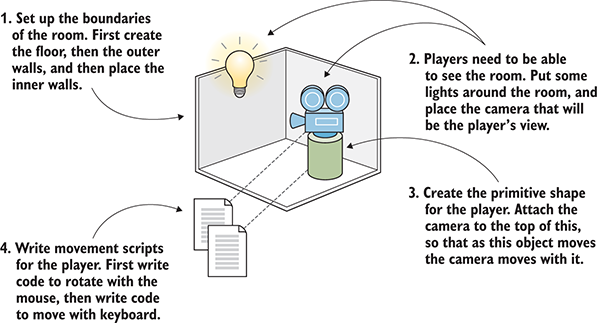

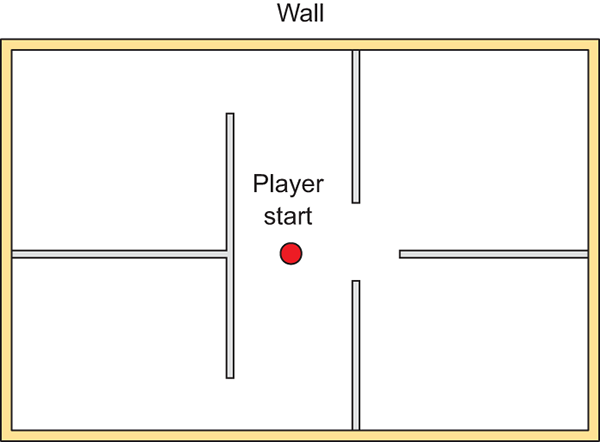

For this first project, you’ll build a basic FPS (first-person shooter) scene. There will be a room to navigate around, players will see the world from their character’s point of view, and the player can control the character using the mouse and keyboard. All the interesting complexity of a complete game can be stripped away for now in order to concentrate on the core mechanic: moving around in a 3D space. Figure 2.2 depicts the roadmap for this project, laying out the checklist I built in my head:

Figure 2.2 Roadmap for the 3D demo

Don’t be scared off by everything in this roadmap! It sounds like there’s a lot in this chapter, but Unity makes it easy. The upcoming sections about movement scripts are so extensive only because we’ll be going through every line in order to understand all the concepts in detail. This project is a first-person demo in order to keep the art requirements simple; because you can’t see yourself, it’s fine for “you” to be a cylindrical shape with a camera on top! Now you only need to understand how 3D coordinates work, and it will be easy to place everything in the visual editor.

If you think about the simple plan we’re starting with, there are three aspects to it: a room, a view, and controls. All of these items rely on you understanding how positions and movements are represented in 3D computer simulations, and if you’re new to working with 3D graphics you might not already know that stuff.

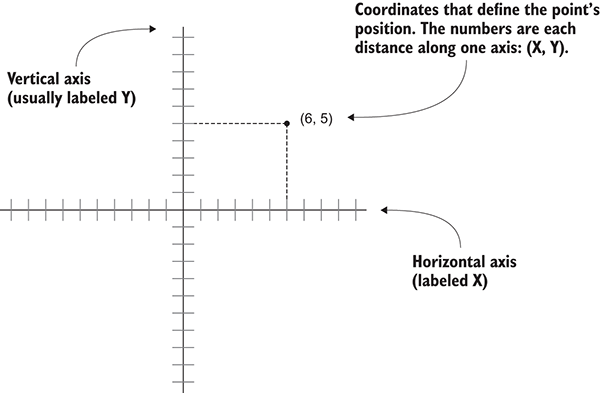

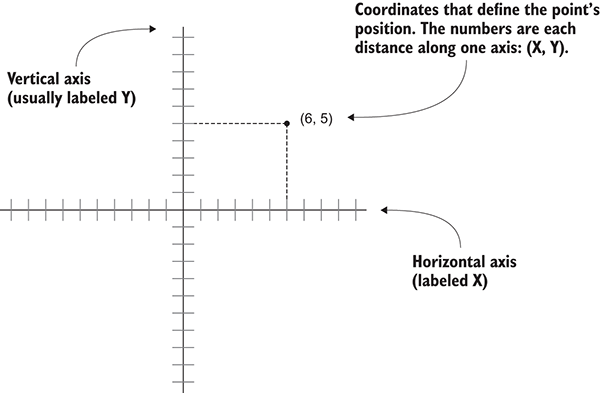

It all boils down to numbers that indicate points in space, and the way those numbers correlate to the space is through coordinate axes. If you think back to math class, you’ve probably seen and used X- and Y-axes (see figure 2.3) for assigning coordinates to points on the page, which is referred to as a Cartesian coordinate system.

Figure 2.3 Coordinates along the X- and Y-axes define a 2D point.

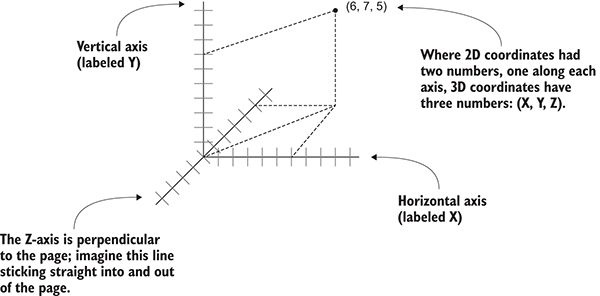

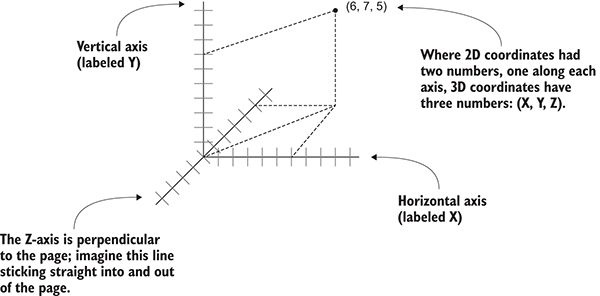

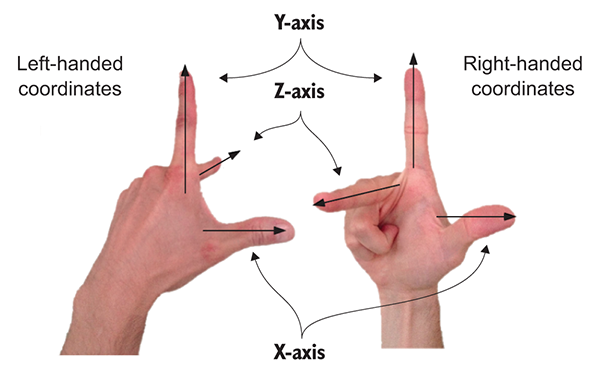

Two axes give you 2D coordinates, with all points in the same plane. Three axes are used to define 3D space. Because the X-axis goes along the page horizontally and the Y-axis goes along the page vertically, we now imagine a third axis that sticks straight into and out of the page, perpendicular to both the X- and Y-axes. Figure 2.4 depicts the X-, Y-, and Z-axes for 3D coordinate space. Everything that has a specific position in the scene will have XYZ coordinates: the position of the player, the placement of a wall, and so forth.

Figure 2.4 Coordinates along the X-, Y-, and Z-axes define a 3D point.

In Unity’s Scene view, you can see these three axes displayed, and in the Inspector you can type in the three numbers required to position an object. Not only will you write code to position objects using these three-number coordinates, but you can also define movements as a distance to move along each axis.
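As a rough sketch of what those three-number coordinates look like in code, the following component sets a position and then applies a movement expressed as a distance along each axis. The class name PlaceObject and all of the numbers are made up for illustration; Vector3, transform.position, and transform.Translate() are part of Unity's scripting API.

```csharp
using UnityEngine;

// Hypothetical example component; the name and the numbers are illustrative only.
public class PlaceObject : MonoBehaviour {
    void Start() {
        // Set an absolute position in the scene: X = 2, Y = 1, Z = -3.
        transform.position = new Vector3(2f, 1f, -3f);

        // Express a movement as a distance along each axis:
        // 1 unit along X, nothing along Y, and half a unit along Z.
        // By default Translate() measures these along the object's own axes.
        transform.Translate(1f, 0f, 0.5f);
    }
}
```

The same three numbers you would type into the Inspector's Position fields are what the Vector3 holds here.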

Now that you have a plan in mind for this project and you know how coordinates are used to position objects in 3D space, it’s time to start building the scene.

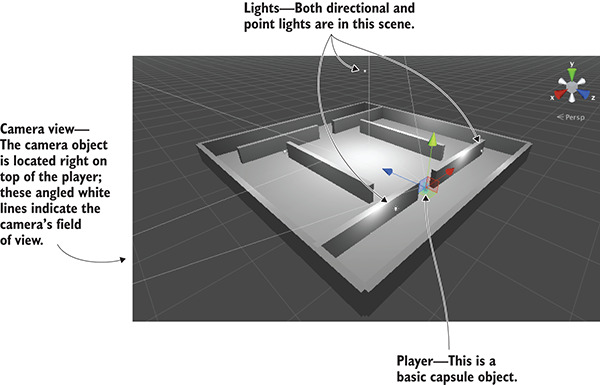

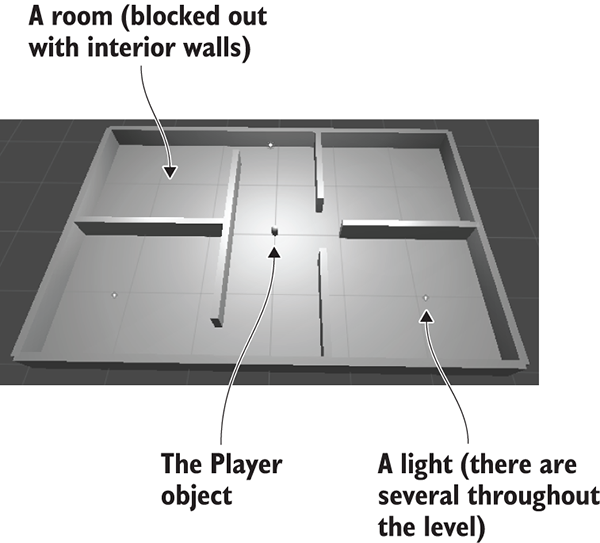

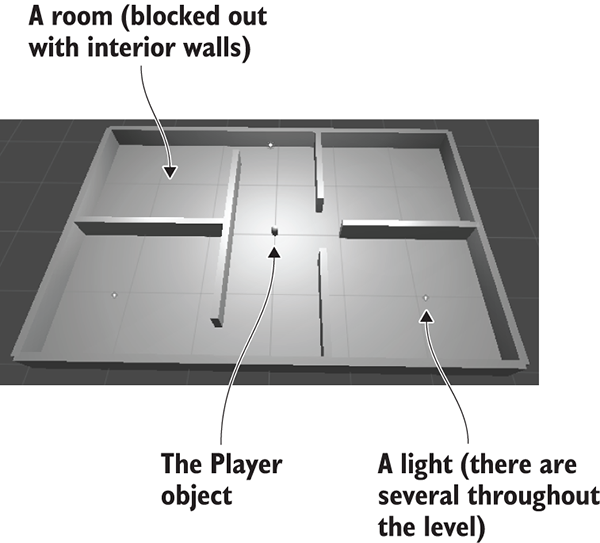

Let’s create and place objects in the scene. First, you’ll set up all the static scenery—the floor and walls. Then you’ll place lights around the scene and position the camera. Lastly, you’ll create the object that will be the player, the object to which you’ll attach scripts to walk around the scene. Figure 2.5 shows what the editor will look like with everything in place.

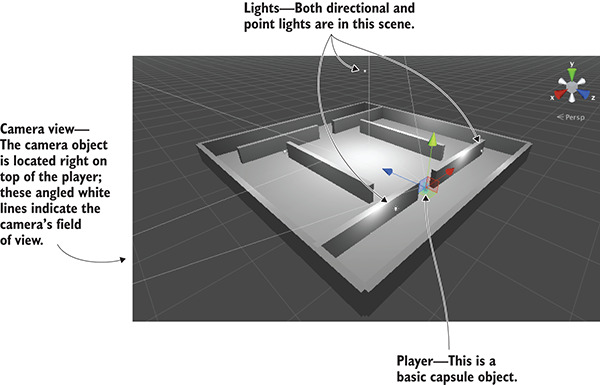

Figure 2.5 Scene in the Editor with floor, walls, lights, a camera, and the player

Chapter 1 showed how to create a new project in Unity, so you’ll do that now. Remember: Choose New (or File > New Project) and then name your new project in the window that pops up. After creating the new project, immediately save the current empty default scene, because the project doesn’t have any Scene files initially. The scene starts out empty, and the first objects to create are the most obvious ones.

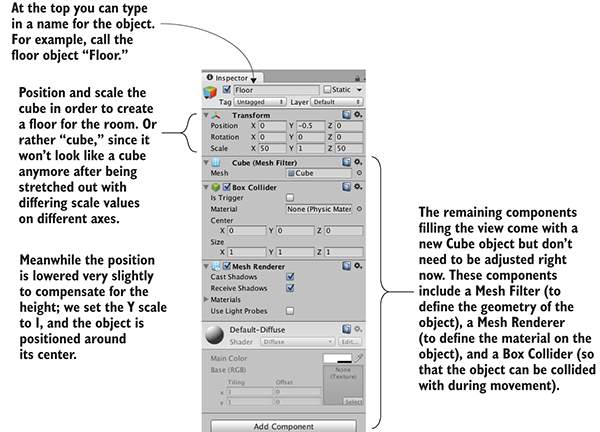

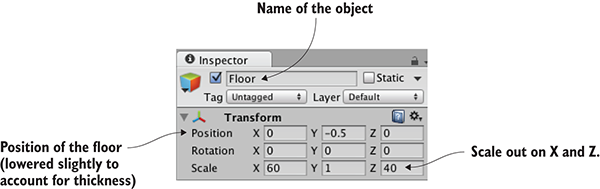

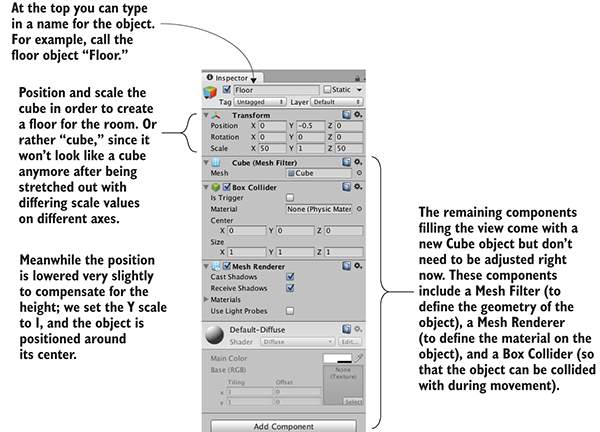

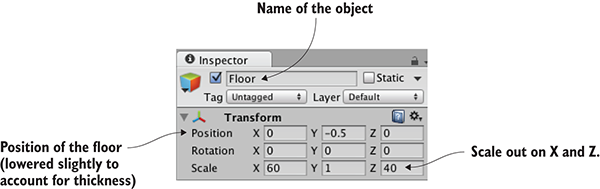

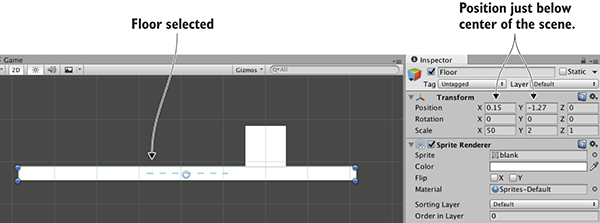

Select the GameObject menu at the top of the screen, and then hover over 3D Object to see that drop-down menu. Select Cube to create a new cube object in the scene (later, we’ll use other shapes, like Sphere and Capsule). Adjust the position and scale of this object, as well as its name, in order to make the floor; figure 2.6 shows which values the floor should be set to in the Inspector (it’s only a cube initially, before you stretch it out).

Repeat the same steps in order to create outer walls for the room. You can create new cubes each time, or you can copy and paste existing objects using the standard shortcuts. Move, rotate, and scale the walls to form a perimeter around the floor. Experiment with different numbers (for example, 1, 4, 50 for scale) or use the transform tools first seen in section 1.2.2 (remember that the mathematical term for moving and rotating in 3D space is transform).

Figure 2.6 Inspector view for the floor

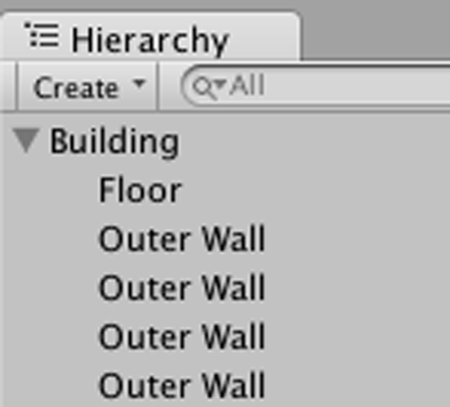

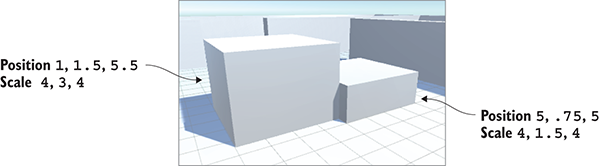

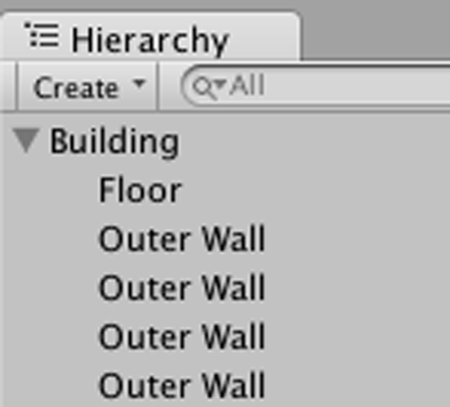

The exact transform values the walls end up with will vary depending on how you rotate and scale the cubes to fit, and on how the objects are linked together in the Hierarchy view. For example, in figure 2.7 the walls are all children of an empty root object, so that the Hierarchy list will look organized. If you need an example to copy working values from, download the sample project and refer to the walls there.
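If it helps to see the same setup expressed in code, here is a hedged sketch that builds a floor and a single wall as children of an empty root object. This is not how the chapter builds the room (you do that by hand in the editor), and every position and scale below is an assumption, apart from the 1, 4, 50 wall scale suggested earlier as an example. The class name RoomBuilder is hypothetical.

```csharp
using UnityEngine;

// Hypothetical helper that creates a floor and one wall in code.
// Attach it to any object in an otherwise empty scene to try it out.
public class RoomBuilder : MonoBehaviour {
    void Start() {
        // Empty root object, used only to keep the Hierarchy organized.
        GameObject room = new GameObject("Room");

        // The floor starts out as an ordinary cube and is then stretched.
        GameObject floor = GameObject.CreatePrimitive(PrimitiveType.Cube);
        floor.name = "Floor";
        floor.transform.SetParent(room.transform);
        floor.transform.localPosition = new Vector3(0f, -0.5f, 0f); // assumed value
        floor.transform.localScale = new Vector3(50f, 1f, 50f);     // assumed value

        // One outer wall along the left edge of the floor.
        GameObject wall = GameObject.CreatePrimitive(PrimitiveType.Cube);
        wall.name = "Wall";
        wall.transform.SetParent(room.transform);
        wall.transform.localPosition = new Vector3(-25f, 2f, 0f);   // assumed value
        wall.transform.localScale = new Vector3(1f, 4f, 50f);       // example scale from the text
    }
}
```

Because both cubes are children of Room, their localPosition values are relative to that root object, which is one reason the numbers you see in the Inspector depend on how objects are linked in the Hierarchy.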

Figure 2.7 The Hierarchy view showing the walls and floor organized under an empty object

Once the outer walls are in place, create some inner walls to navigate around. Position the inner walls however you like; the idea is to create some hallways and obstacles to walk around once you write code for movement.

Now the scene has a room in it, but we still need to set up the lighting. Let’s take care of that next.

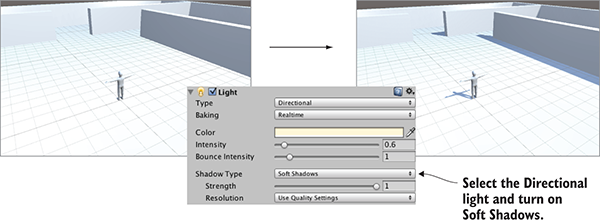

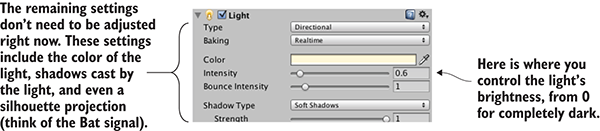

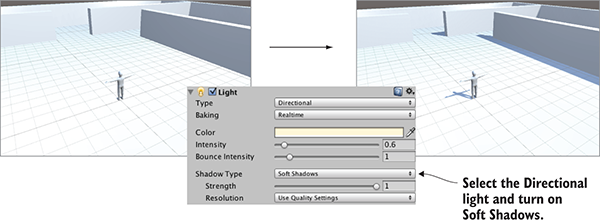

Typically, you light a 3D scene with a directional light and then a series of point lights. Start with a directional light; the scene probably already has one by default, but if not, then create one by choosing GameObject > Light and selecting Directional Light.

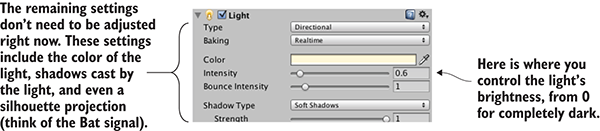

The position of a directional light doesn’t affect the light cast from it; only its rotation (the direction the light is facing) matters, so technically, you could place that light anywhere in the scene. I recommend placing it high above the room so that it intuitively feels like the sun and so that it’s out of the way when you’re manipulating the rest of the scene. Rotate this light and watch the effect on the room; I recommend rotating it slightly on both the X- and Y-axes to get a good effect. You will see an Intensity setting when you look in the Inspector (see figure 2.8). As the name implies, that setting controls the brightness of the light. If this were the only light, it’d have to be more intense, but because you’ll add a bunch of point lights as well, this directional light can be pretty dim, like 0.6 Intensity.

Figure 2.8 Directional light settings in the Inspector

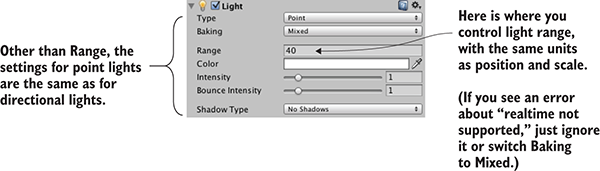

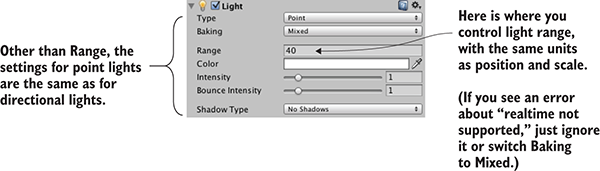

As for point lights, create several using the same menu and place them in dark spots around the room in order to make sure all the walls are lit. You don’t want too many (performance will degrade if the game has lots of lights), but one near each corner should be fine (I suggest raising them to the tops of the walls), plus one placed high above the scene (like a Y of 18) to give variety to the light in the room. Note that point lights have a setting for Range added to the Inspector (see figure 2.9). This controls how far away the light reaches; whereas directional lights cast light evenly throughout the entire scene, point lights are brighter when an object is closer. The point lights closer to the floor should have a range of around 18, but the light placed high up should have a range of around 40 in order to reach the entire room.
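The Range setting can also be set from a script. The sketch below creates two point lights in code, one near a corner and one high above the room, using the ranges suggested above. The class name LightSetup, the helper method CreatePointLight(), and the corner position are assumptions for illustration; the Light component, LightType.Point, and the range property are part of Unity's scripting API.

```csharp
using UnityEngine;

// Hypothetical helper that adds point lights in code instead of through the menu.
public class LightSetup : MonoBehaviour {
    void Start() {
        // A light near one corner, raised to roughly the top of a wall (assumed position).
        CreatePointLight("Corner Light", new Vector3(20f, 4f, 20f), 18f);

        // A light placed high above the scene, with a larger range to reach the whole room.
        CreatePointLight("High Light", new Vector3(0f, 18f, 0f), 40f);
    }

    // Creates an empty GameObject, adds a Light component, and configures it as a point light.
    Light CreatePointLight(string lightName, Vector3 position, float range) {
        GameObject lightObject = new GameObject(lightName);
        lightObject.transform.position = position;

        Light pointLight = lightObject.AddComponent<Light>();
        pointLight.type = LightType.Point;
        pointLight.range = range;
        return pointLight;
    }
}
```

For a static room like this one, placing lights in the editor is simpler; the point here is only that every setting you see in the Inspector is also reachable from code.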

Figure 2.9 Point light settings in the Inspector

The other kind of object needed in order for the player to see the scene is a camera, but the “empty” scene came with a main camera, so you’ll use that. If you ever need to create new cameras (such as for split screen views in multiplayer games), Camera is another choice in the same GameObject menu as Cube and Lights. The camera will be positioned around the top of the player so that the view appears to be the player’s eyes.

For this project, a simple primitive shape will do to represent the player. In the GameObject menu (remember, hover over 3D Object to expand the menu), click Capsule. Unity creates a cylindrical shape with rounded ends. Position this object at 1.1 on the Y-axis (half the height of the object, plus a bit to avoid overlapping the floor). You can move the object along X and Z wherever you like, as long as it’s inside the room and not touching any walls. Name the object Player.

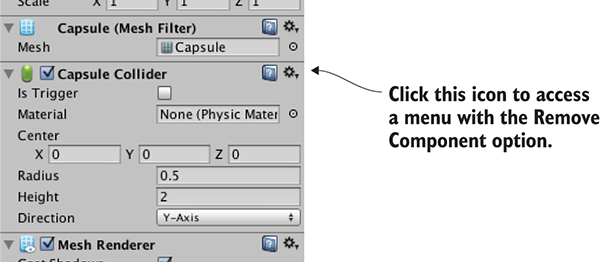

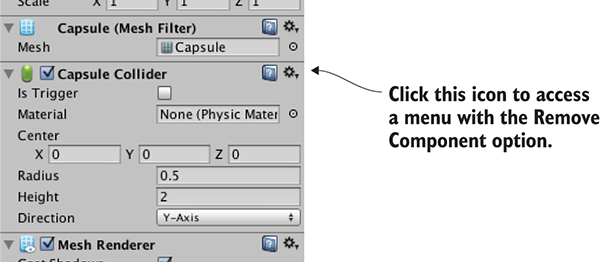

In the Inspector, you’ll notice that this object has a capsule collider assigned to it. That’s a logical default choice for a capsule object, just like cube objects had a box collider by default. But this particular object will be the player and thus needs a slightly different sort of component than most objects. Remove the capsule collider by clicking the gear icon toward the top-right of that component, shown in figure 2.10; that will display a menu that includes the option Remove Component. The collider is a green mesh surrounding the object, so you’ll see the green mesh disappear after deleting the capsule collider.

Figure 2.10 Removing a component in the Inspector

Instead of a capsule collider, we’re going to assign a character controller to this object. At the bottom of the Inspector, there’s a button labeled Add Component; click that button to open a menu of components that you can add. In the Physics section of this menu, you’ll find Character Controller; select that option. As the name implies, this component will allow the object to behave like a character.

You need to complete one last step to set up the player object: attaching the camera. As mentioned in the earlier section on floors and walls, objects can be dragged onto each other in the Hierarchy view. Drag the camera object onto the player capsule to attach the camera to the player. Now position the camera so that it’ll look like the player’s eyes (I suggest a position of 0, 0.5, 0). If necessary, reset the camera’s rotation to 0, 0, 0 (this will be off if you’ve rotated the capsule).
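For comparison, here is a minimal sketch of the same camera attachment done from a script instead of by dragging in the Hierarchy view. The class name PlayerSetup is hypothetical, and the sketch assumes the scene's camera still carries the default MainCamera tag, which is what Camera.main looks for. The position 0, 0.5, 0 is the one suggested above.

```csharp
using UnityEngine;

// Hypothetical setup script, meant to be attached to the Player object.
// RequireComponent makes Unity add a CharacterController automatically
// if the object doesn't have one yet.
[RequireComponent(typeof(CharacterController))]
public class PlayerSetup : MonoBehaviour {
    void Start() {
        // Camera.main is the first enabled camera tagged MainCamera, or null if none exists.
        Camera playerCamera = Camera.main;
        if (playerCamera == null) {
            Debug.LogWarning("No camera tagged MainCamera was found in the scene");
            return;
        }

        // Make the camera a child of the player, the scripted equivalent of
        // dragging it onto the capsule in the Hierarchy view.
        playerCamera.transform.SetParent(transform);

        // Place it near the top of the capsule and clear any leftover rotation.
        playerCamera.transform.localPosition = new Vector3(0f, 0.5f, 0f);
        playerCamera.transform.localRotation = Quaternion.identity;
    }
}
```

Setting localPosition and localRotation (rather than position and rotation) keeps the values relative to the player, matching the numbers you would type into the Inspector once the camera is a child object.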

You’ve created all the objects needed for this scene. What remains is writing code to move the player object.

To have the player walk around the scene, you’ll write movement scripts attached to the player. Remember, components are modular bits of functionality that you add to objects, and scripts are a kind of component. Eventually, those scripts will respond to keyboard and mouse input, but first you'll make the player spin in place. This modest beginning will teach you how to apply transforms in code. Remember that the three transforms are Translate, Rotate, and Scale; spinning an object means changing the rotation. But there’s more to know about this task than only “this involves rotation.”

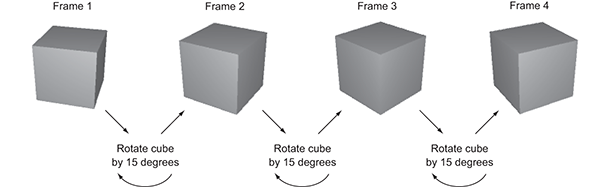

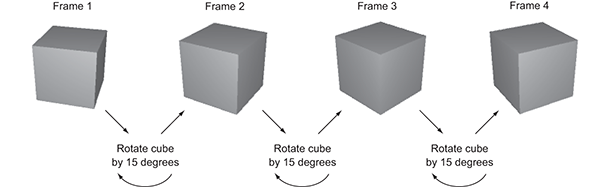

Animating an object (such as making it spin) boils down to moving it a small amount every frame, with the frames playing over and over. By themselves, transforms apply instantly, as opposed to visibly moving over time. But applying the transforms over and over causes the object to visibly move, like a series of still drawings in a flipbook. Figure 2.11 illustrates how this works.

Figure 2.11 The appearance of movement: cyclical process of transforming between still pictures

Recall that script components have an Update() method that runs every frame. To spin the object, add code inside Update() that rotates it a small amount. This code will run over and over every frame. Sounds pretty simple, right?
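To make the flipbook idea concrete outside of Unity, here is a minimal plain-C# sketch that fakes a handful of frames. The FlipbookDemo class and its Step helper are hypothetical names invented for illustration; in a real project Unity calls Update() for you and the angle lives in the transform, not in a local variable.

```csharp
using System;

public static class FlipbookDemo {
    // Hypothetical helper: applies one frame's worth of rotation
    // and wraps the result into the range [0, 360).
    public static float Step(float angle, float degreesPerFrame) {
        float next = (angle + degreesPerFrame) % 360f;
        return next < 0f ? next + 360f : next;
    }

    public static void Main() {
        float angle = 0f;
        // Each loop iteration stands in for one rendered frame.
        for (int frame = 1; frame <= 5; frame++) {
            angle = Step(angle, 3f);
            Console.WriteLine($"frame {frame}: {angle} degrees");
        }
    }
}
```

Each individual step is an instant change, but the angle grows by the same small amount every frame, which is what reads as smooth motion once the frames are played back quickly.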

Now let’s put in action the concepts we’ve just discussed. Create a new C# script (remember, it’s in the Create submenu of the Assets menu), name it Spin, and write in this code (don’t forget to save the file after typing in it!).

Listing 2.1 Making the object spin

    using UnityEngine;
    using System.Collections;

    public class Spin : MonoBehaviour {
        public float speed = 3.0f;

        void Update() {
            transform.Rotate(0, speed, 0);
        }
    }

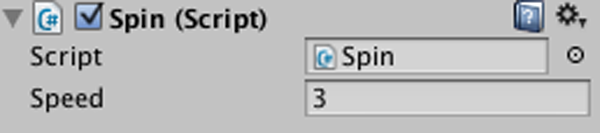

To add the script component to the player object, drag the script up from the Project view and drop it onto Player in the Hierarchy view. Now hit Play, and you’ll see the view spin around; you’ve written code to make an object move! This code is pretty much the default template for a new script plus two added lines, so let’s examine what those two lines do.

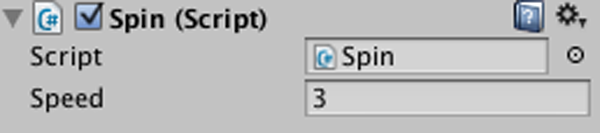

First, there’s the variable for speed added toward the top of the class definition (the f after the number tells the computer to treat this as a float value; otherwise, C# treats decimal numbers as a double). The rotation speed is defined as a variable rather than a constant because Unity does something handy with public variables in script components: it displays them in the Inspector, where their values can be edited.

Figure 2.12 shows what the script component looks like in the Inspector. You can type in a new number, and then the script will use that value instead of the default value defined in the code. This is a handy way to adjust settings for the component on different objects, working within the visual editor instead of hardcoding every value.

Figure 2.12 The Inspector displaying a public variable declared in the script

The second line to examine from Listing 2.1 is the Rotate() method. That’s inside Update() so that the command runs every frame. Rotate() is a method of the Transform class, so it’s called with dot notation through the transform component of this object (as in most object-oriented languages, this.transform is implied if you type transform). The transform is rotated by speed degrees every frame, resulting in a smooth spinning movement. But why are the parameters to Rotate() listed as (0, speed, 0) as opposed to, say, (speed, 0, 0)?

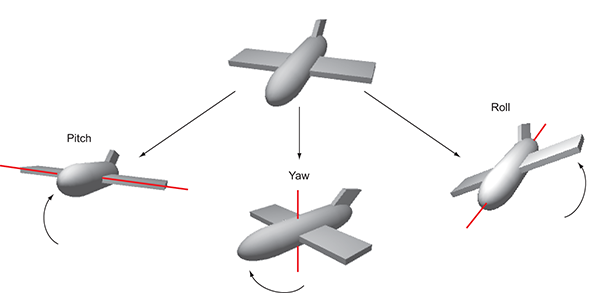

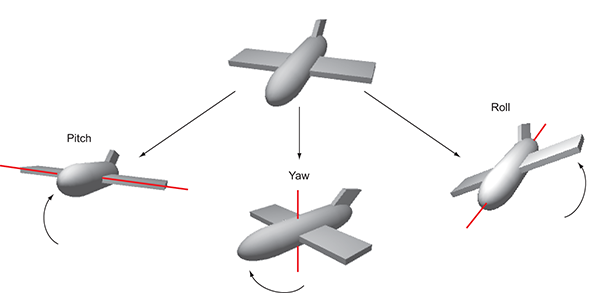

Recall that there are three axes in 3D space, labeled X, Y, and Z. It’s fairly intuitive to understand how these axes relate to positions and movements, but these axes can also be used to describe rotations. Aeronautics describes rotations in a similar way, so programmers working with 3D graphics often use a set of terms borrowed from aeronautics: pitch, yaw, and roll. Figure 2.13 illustrates what these terms mean; pitch is rotation around the X-axis, yaw is rotation around the Y-axis, and roll is rotation around the Z-axis.

Figure 2.13 Illustration of pitch, yaw, and roll rotation of an aircraft

Given that we can describe rotations around the X-, Y-, and Z-axes, that means the three parameters for Rotate() are X, Y, and Z rotation. Because we only want the player to spin around sideways, as opposed to tilting up and down, there should only be a number given for the Y rotation, and 0 for X and Z rotation. Hopefully you can guess what will happen if you change the parameters to (speed, 0, 0) and then play it. Try that now!
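If you would rather experiment from the Inspector than edit the code each time, a sketch along these lines may help. AxisSpin and its three field names are hypothetical (this is not one of the book's listings); the only Unity call it relies on is the same three-parameter Rotate() used in Listing 2.1.

```csharp
using UnityEngine;

// Hypothetical helper script: exposes one speed per axis so each kind
// of rotation can be tried by typing numbers into the Inspector.
public class AxisSpin : MonoBehaviour {
    public float pitchSpeed = 0.0f; // degrees per frame around X (tilt up and down)
    public float yawSpeed = 3.0f;   // degrees per frame around Y (turn sideways)
    public float rollSpeed = 0.0f;  // degrees per frame around Z (bank side to side)

    void Update() {
        // The parameter order matches Rotate(x, y, z).
        transform.Rotate(pitchSpeed, yawSpeed, rollSpeed);
    }
}
```

With the default values this behaves like the Spin script; setting yawSpeed to 0 and pitchSpeed to 3 should reproduce the (speed, 0, 0) experiment.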

There’s one other subtle point to understand about rotations and 3D coordinate axes, embodied in an optional fourth parameter to the Rotate() method.

By default, the Rotate() method operates on what are called local coordinates. The other kind of coordinates you could use are global. You tell the method whether to use local or global coordinates using an optional fourth parameter by writing either Space.Self or Space.World like so:

    transform.Rotate(0, speed, 0, Space.World);

Refer to the explanation about 3D coordinate space earlier in this chapter, and ponder these questions: Where is (0, 0, 0) located? Which direction is the X-axis pointing in? Can the coordinate system itself move around?

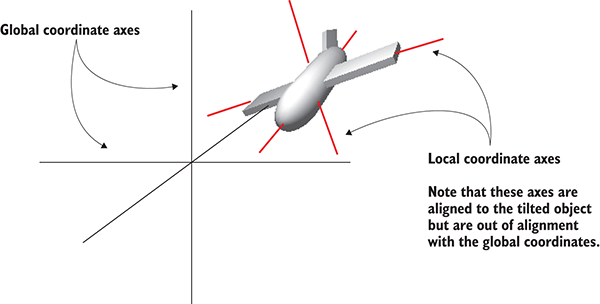

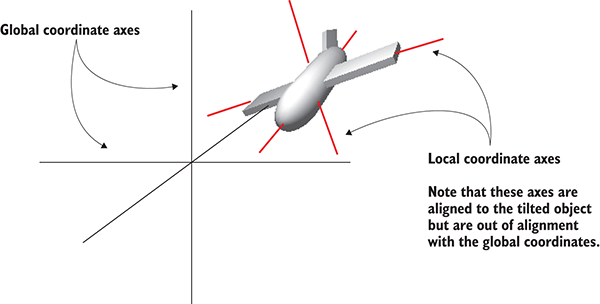

It turns out that every single object has its own origin point, as well as its own direction for the three axes, and this coordinate system moves around with the object. This is referred to as local coordinates. The overall 3D scene also has its own origin point and its own direction for the three axes, and this coordinate system never moves. This is referred to as global coordinates. Therefore, when you specify local or global for the Rotate() method, you’re telling it whose X-, Y-, and Z-axes to rotate around (see figure 2.14).

Figure 2.14 Local versus global coordinate axes

If you’re new to 3D graphics, this is somewhat of a mind-bending concept. The different axes are depicted in figure 2.14 (notice how “left” to the plane is a different direction than “left” to the world), but the easiest way to understand local and global is through an example.

Select the player object and then tilt it a bit (something like 30 for the X rotation). This will throw off the local coordinates so that local and global rotations will look different. Now try running the Spin script both with and without Space.World added to the parameters; if it’s too hard for you to visualize what’s happening, try removing the spin component from the player object and instead spin a tilted cube placed in front of the player. You’ll see the object rotating around different axes when you set the command to local or global coordinates.
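One way to run that comparison without retyping the Rotate() call is to make the coordinate space a setting. The following is only a sketch: SpinInSpace and relativeTo are hypothetical names, while Space.Self, Space.World, and the four-parameter Rotate() are the Unity pieces described above.

```csharp
using UnityEngine;

// Hypothetical variant of the Spin script: the coordinate space is a
// public field, so it can be switched without editing the code.
public class SpinInSpace : MonoBehaviour {
    public float speed = 3.0f;

    // Space.Self means local coordinates; Space.World means global.
    public Space relativeTo = Space.Self;

    void Update() {
        transform.Rotate(0, speed, 0, relativeTo);
    }
}
```

Attach it to a tilted object, play the scene once with each setting, and compare which Y-axis the object turns around.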

Now you’ll make rotation respond to input from the mouse (that is, rotation of the object this script is attached to, which in this case will be the player). You’ll do this in several steps, progressively adding new movement abilities to the character. First, the player will only rotate side to side, and then the player will only rotate up and down. Eventually, the player will be able to look around in all directions (rotating horizontally and vertically at the same time), a behavior referred to as mouse-look.

Given that there will be three different types of rotation behavior (horizontal, vertical, and both), you’ll start by writing the framework for supporting all three. Create a new C# script, name it MouseLook, and write in this code.

Listing 2.2 MouseLook framework with enum for the Rotation setting

    using UnityEngine;
    using System.Collections;

    public class MouseLook : MonoBehaviour {
        public enum RotationAxes {
            MouseXAndY = 0,
            MouseX = 1,
            MouseY = 2
        }

        public RotationAxes axes = RotationAxes.MouseXAndY;

        void Update() {
            if (axes == RotationAxes.MouseX) {
                // horizontal rotation here
            }
            else if (axes == RotationAxes.MouseY) {
                // vertical rotation here
            }
            else {
                // both horizontal and vertical rotation here
            }
        }
    }

Notice that an enum is used to choose horizontal or vertical rotation for the MouseLook script. Defining an enum data structure allows you to set values by name, rather than typing in numbers and trying to remember what each number means (is 0 horizontal rotation? Is it 1?). If you then declare a public variable typed to that enum, that will display in the Inspector as a drop-down menu (see figure 2.15), which is useful for selecting settings.

Figure 2.15 The Inspector displays public enum variables as a drop-down menu.
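The difference between named values and bare numbers can be seen in plain C#, with no Unity involved. In this sketch the enum is copied from Listing 2.2, while the EnumDemo class and its Describe helper are hypothetical additions for illustration.

```csharp
using System;

public static class EnumDemo {
    public enum RotationAxes {
        MouseXAndY = 0,
        MouseX = 1,
        MouseY = 2
    }

    // Hypothetical helper: turns a setting into a readable description.
    public static string Describe(RotationAxes axes) {
        switch (axes) {
            case RotationAxes.MouseX: return "horizontal only";
            case RotationAxes.MouseY: return "vertical only";
            default: return "horizontal and vertical";
        }
    }

    public static void Main() {
        RotationAxes axes = RotationAxes.MouseY;
        Console.WriteLine(Describe(axes));             // the name documents itself
        Console.WriteLine((int)axes);                  // the number stored underneath
        Console.WriteLine(Describe((RotationAxes)1));  // a bare number works but is harder to read
    }
}
```

The cast on the last line compiles, but a reader has to look up what 1 means, which is exactly the guesswork the enum avoids.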

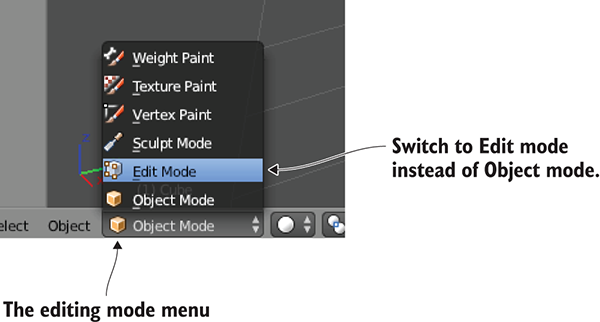

Remove the Spin component (the same way you removed the capsule collider earlier) and attach this new script to the player object instead. Use the Axes drop-down menu in the Inspector to switch the direction of rotation. With the horizontal/vertical rotation setting in place, you can fill in code for each branch of the conditional statement.

The first and simplest branch is horizontal rotation. Start by writing the same rotation command you used in Listing 2.1 to make the object spin. Don’t forget to declare a public variable for the rotation speed; declare the new variable after axes but before Update(), and call the variable sensitivityHor because speed is too generic a name once you have multiple rotations involved. Increase the value of the variable to 9 this time, because that value needs to be bigger once the code starts scaling it (which will be soon). The adjusted code should look like this.

Listing 2.3 Horizontal rotation, not yet responding to the mouse

    ...
    public RotationAxes axes = RotationAxes.MouseXAndY;
    public float sensitivityHor = 9.0f;

    void Update() {
        if (axes == RotationAxes.MouseX) {
            transform.Rotate(0, sensitivityHor, 0);
        }
    ...

Set the Axes menu of the MouseLook component to horizontal rotation and play the script; the view will spin as before. The next step is to make the rotation react to mouse movement, so let’s introduce a new method: Input.GetAxis(). The Input class has a bunch of methods for handling input devices (such as the mouse) and the GetAxis() method returns numbers correlated to the movement of the mouse (positive or negative, depending on the direction of movement). GetAxis() takes the name of the axis desired as a parameter, and the horizontal axis is called Mouse X.

If you multiply the rotation speed by the axis value, the rotation will respond to mouse movement. The speed will scale according to mouse movement, scaling down to zero or even reversing direction. The Rotate command now looks like this.

Listing 2.4 Rotate command adjusted to respond to the mouse

    ...
    transform.Rotate(0, Input.GetAxis("Mouse X") * sensitivityHor, 0);
    ...

Hit Play and then move the mouse around. As you move the mouse from side to side, the view will rotate from side to side. That’s pretty cool! The next step is to rotate vertically instead of horizontally.

For horizontal rotation we’ve been using the Rotate() method, but we’ll take a different approach with vertical rotation. Although that method is convenient for applying transforms, it’s also kind of inflexible. It’s only useful for incrementing the rotation without limit, which was fine for horizontal rotation, but vertical rotation needs limits on how much the view can tilt up or down. This listing shows the vertical rotation code for MouseLook; a detailed explanation of the code will immediately follow.

Listing 2.5 Vertical rotation for MouseLook

    ...
    public float sensitivityHor = 9.0f;
    public float sensitivityVert = 9.0f;

    public float minimumVert = -45.0f;
    public float maximumVert = 45.0f;

    private float _rotationX = 0;

    void Update() {
        if (axes == RotationAxes.MouseX) {
            transform.Rotate(0, Input.GetAxis("Mouse X") * sensitivityHor, 0);
        }
        else if (axes == RotationAxes.MouseY) {
            _rotationX -= Input.GetAxis("Mouse Y") * sensitivityVert;
            _rotationX = Mathf.Clamp(_rotationX, minimumVert, maximumVert);

            float rotationY = transform.localEulerAngles.y;

            transform.localEulerAngles = new Vector3(_rotationX, rotationY, 0);
        }
    ...

Set the Axes menu of the MouseLook component to vertical rotation and play the new script. Now the view won’t rotate sideways, but it’ll tilt up and down when you move the mouse up and down. The tilt stops at upper and lower limits.

There are several new concepts in this code that need to be explained. First off, we’re not using Rotate() this time, so we need a variable (called _rotationX here, because vertical rotation goes around the X-axis) in which to store the rotation angle. The Rotate() method increments the current rotation, whereas this code sets the rotation angle directly. It’s the difference between saying “add 5 to the angle” and “set the angle to 30.” We do still need to change the stored angle every frame, which is why the code uses the -= operator: it subtracts a value from the rotation angle rather than setting the angle to that value. (The value is subtracted rather than added because a positive rotation around the X-axis tilts the view downward, whereas moving the mouse up produces a positive Mouse Y value.) By not using Rotate(), we can manipulate the rotation angle in various ways aside from only incrementing it. The sensitivity value is multiplied by Input.GetAxis(), like in the code for horizontal rotation, except now we ask for Mouse Y because that’s the vertical axis of the mouse.

The rotation angle is manipulated further on the next line. We use Mathf.Clamp() to keep the rotation angle between minimum and maximum limits. Those limits are public variables declared earlier in the code, and they ensure that the view can only tilt 45 degrees up or down. The Clamp() method isn’t specific to rotation, but is generally useful for keeping a number variable between limits. To see what happens, try commenting out the Clamp() line; now the tilt doesn’t stop at upper and lower limits, allowing you to even rotate completely upside down! Clearly, viewing the world upside down is undesirable, hence the limits.
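The effect of the clamp is easy to watch in isolation. The sketch below is plain C# with a hand-written Clamp standing in for Mathf.Clamp() and a fixed number standing in for Input.GetAxis("Mouse Y"); ClampDemo and NextPitch are hypothetical names, and the sensitivity and limits are the defaults from Listing 2.5.

```csharp
using System;

public static class ClampDemo {
    // Stand-in for Mathf.Clamp so the sketch runs without Unity.
    public static float Clamp(float value, float min, float max) {
        if (value < min) return min;
        if (value > max) return max;
        return value;
    }

    // One frame of the vertical-rotation update, with the mouse reading
    // passed in as a parameter instead of being read from Input.
    public static float NextPitch(float rotationX, float mouseY, float sensitivityVert,
                                  float minimumVert, float maximumVert) {
        rotationX -= mouseY * sensitivityVert;
        return Clamp(rotationX, minimumVert, maximumVert);
    }

    public static void Main() {
        float rotationX = 0f;
        // Pretend the mouse keeps moving up by the same amount for ten frames.
        for (int frame = 1; frame <= 10; frame++) {
            rotationX = NextPitch(rotationX, 1f, 9f, -45f, 45f);
            Console.WriteLine($"frame {frame}: {rotationX}");
        }
    }
}
```

The angle drops by 9 per frame until it reaches -45 on the fifth frame and then stays there, which is the same stop you feel when the view refuses to tilt any further.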

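The last line of the block assigns the result to the transform. Because the localEulerAngles property of transform is a Vector3, we need to create a new Vector3 with the rotation angles passed in to the constructor; the current Y angle is read first and passed through unchanged so that the horizontal rotation is preserved. The Rotate() method was automating this process for us, incrementing the rotation angle and then creating a new vector.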

There’s one more rotation setting for MouseLook that needs code: horizontal and vertical rotation at the same time.

This last chunk of code won’t use Rotate() either, for the same reason: the vertical rotation angle is clamped between limits after being incremented. That means the horizontal rotation needs to be calculated directly now. Remember, Rotate() was automating the process of incrementing the rotation angle; this listing does that step explicitly.

Listing 2.6 Horizontal and vertical MouseLook

    ...
    else {
        _rotationX -= Input.GetAxis("Mouse Y") * sensitivityVert;
        _rotationX = Mathf.Clamp(_rotationX, minimumVert, maximumVert);

        float delta = Input.GetAxis("Mouse X") * sensitivityHor;
        float rotationY = transform.localEulerAngles.y + delta;

        transform.localEulerAngles = new Vector3(_rotationX, rotationY, 0);
    }
    ...

The first couple of lines, dealing with _rotationX, are exactly the same as in Listing 2.5. Remember that rotating around the object’s X-axis is vertical rotation. Because horizontal rotation is no longer being handled using the Rotate() method, that’s what the delta and rotationY lines are doing. Delta is a common mathematical term for “the amount of change,” so our calculation of delta is the amount that rotation should change. That amount of change is then added to the current rotation angle to get the desired new rotation angle.

Finally, both angles, vertical and horizontal, are used to create a new vector that’s assigned to the transform component’s localEulerAngles property.
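The arithmetic of this branch can be pulled out into a pure function to check the numbers by hand. This is a sketch under stated assumptions: LookMath and Next are hypothetical names, the mouse readings arrive as parameters rather than from Input.GetAxis(), and the two angles are returned as a tuple instead of being written into a Vector3.

```csharp
using System;

public static class LookMath {
    // Returns the new (pitch, yaw) pair for one frame of mouse-look.
    public static (float pitch, float yaw) Next(
            float pitch, float yaw,
            float mouseX, float mouseY,
            float sensitivityHor, float sensitivityVert,
            float minimumVert, float maximumVert) {
        // Vertical: change the stored angle, then keep it within the limits.
        pitch -= mouseY * sensitivityVert;
        pitch = Math.Max(minimumVert, Math.Min(maximumVert, pitch));

        // Horizontal: delta is the amount of change, added to the current angle.
        float delta = mouseX * sensitivityHor;
        yaw += delta;

        return (pitch, yaw);
    }

    public static void Main() {
        // Start level and facing 90 degrees; the mouse moves right and down.
        var angles = Next(0f, 90f, 0.5f, -1f, 9f, 9f, -45f, 45f);
        Console.WriteLine($"pitch {angles.pitch}, yaw {angles.yaw}");
    }
}
```

Only the pitch is limited; the yaw is free to keep growing, which is why the horizontal angle needs no clamp.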

In case you’ve gotten lost on where to make the various changes and additions we’ve gone over, this listing has the full finished script. Alternatively, download the example project.

Listing 2.7 The finished MouseLook script

    using UnityEngine;
    using System.Collections;

    public class MouseLook : MonoBehaviour {
        public enum RotationAxes {
            MouseXAndY = 0,
            MouseX = 1,
            MouseY = 2
        }

        public RotationAxes axes = RotationAxes.MouseXAndY;

        public float sensitivityHor = 9.0f;
        public float sensitivityVert = 9.0f;

        public float minimumVert = -45.0f;
        public float maximumVert = 45.0f;

        private float _rotationX = 0;

        void Start() {
            // If a Rigidbody is attached, stop physics from changing the rotation.
            Rigidbody body = GetComponent<Rigidbody>();
            if (body != null)
                body.freezeRotation = true;
        }

        void Update() {
            if (axes == RotationAxes.MouseX) {
                transform.Rotate(0, Input.GetAxis("Mouse X") * sensitivityHor, 0);
            }
            else if (axes == RotationAxes.MouseY) {
                _rotationX -= Input.GetAxis("Mouse Y") * sensitivityVert;
                _rotationX = Mathf.Clamp(_rotationX, minimumVert, maximumVert);

                float rotationY = transform.localEulerAngles.y;

                transform.localEulerAngles = new Vector3(_rotationX, rotationY, 0);
            }
            else {
                _rotationX -= Input.GetAxis("Mouse Y") * sensitivityVert;
                _rotationX = Mathf.Clamp(_rotationX, minimumVert, maximumVert);

                float delta = Input.GetAxis("Mouse X") * sensitivityHor;
                float rotationY = transform.localEulerAngles.y + delta;

                transform.localEulerAngles = new Vector3(_rotationX, rotationY, 0);
            }
        }
    }

When you set the Axes menu and run the new code, you’re able to look around in all directions while moving the mouse. Great! But you’re still stuck in one place, looking around as if mounted on a turret. The next step is moving around the scene.

Looking around in response to mouse input is an important part of first-person controls, but you’re only halfway there. The player also needs to move in response to keyboard input. Let’s write a keyboard controls component to complement the mouse controls component; create a new C# script called FPSInput and attach that to the player (alongside the MouseLook script). For the moment, set the MouseLook component to horizontal rotation only.

TIP The keyboard and mouse controls explained here are split into separate scripts. You don’t have to structure the code this way, and you could have everything bundled into a single player controls script, but a component system (such as the one in Unity) tends to be most flexible and therefore most useful when you have functionality split into several smaller components.

The code you wrote in the previous section affected rotation only, but now we’ll change the object’s position instead. Refer to Listing 2.1; type that into FPSInput, but change Rotate() to Translate(). When you hit Play, the view slides up instead of spinning around. Try changing the parameter values to see how the movement changes (in particular, try swapping the first and second numbers); after experimenting with that for a bit, you can move on to adding keyboard input.

Listing 2.8 Spin code from the first listing, with a couple of minor changes

using UnityEngine;

using System.Collections;

public class FPSInput : MonoBehaviour {

public float speed = 6.0f; Not required, but you probably want to increase the speed.

void Update() {

transform.Translate(0, speed, 0); Change Rotate() to Translate().

}

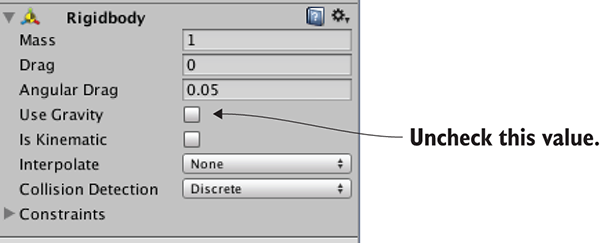

}