Third Edition

A Practical Guide to the Advanced Open Source Database

Copyright © 2018 Regina Obe, Leo Hsu. All rights reserved.

Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles (http://oreilly.com/safari). For more information, contact our corporate/institutional sales department: 800-998-9938 or corporate@oreilly.com.

See http://oreilly.com/catalog/errata.csp?isbn=9781491963418 for release details.

The O’Reilly logo is a registered trademark of O’Reilly Media, Inc. PostgreSQL: Up and Running, the cover image, and related trade dress are trademarks of O’Reilly Media, Inc.

While the publisher and the authors have used good faith efforts to ensure that the information and instructions contained in this work are accurate, the publisher and the authors disclaim all responsibility for errors or omissions, including without limitation responsibility for damages resulting from the use of or reliance on this work. Use of the information and instructions contained in this work is at your own risk. If any code samples or other technology this work contains or describes is subject to open source licenses or the intellectual property rights of others, it is your responsibility to ensure that your use thereof complies with such licenses and/or rights.

978-1-491-96341-8


PostgreSQL bills itself as the world’s most advanced open source database. We couldn’t agree more.

What we hope to accomplish in this book is to give you a firm grounding in the concepts and features that make PostgreSQL so impressive. Along the way, we should convince you that PostgreSQL does indeed stand up to its claim to fame. Because the database is advanced, no book short of the 3500 pages of documentation can bring out all its glory. But then again, most users don’t need to delve into the most abstruse features that PostgreSQL has to offer. So in our shorter 300-pager, we hope to get you, as the subtitle proclaims, Up and Running.

Each topic is presented with some context so you understand when to use it and what it offers. We assume you have prior experience with some other database so that we can jump right to the key points of PostgreSQL. We generously litter the pages of this book with links to references so you can dig deeper into topics of interest. These links lead to sections in the manual, to helpful articles, and to blog posts of PostgreSQL vanguards. We also link to our own site at Postgres OnLine Journal, where we have collected many pieces that we have written on PostgreSQL and its interoperability with other applications.

This book focuses on PostgreSQL versions 9.5, 9.6, and 10, but we will cover some unique and advanced features that are also present in prior versions.

For migrants from other database engines, we’ll point out parallels that PostgreSQL shares with other leading products. Perhaps more importantly, we highlight feats you can achieve with PostgreSQL that are difficult or impossible to do in other databases.

We stop short of teaching you SQL, as you’ll find many excellent sources for that. SQL is much like chess—a few hours to learn, a lifetime to master. You have wisely chosen PostgreSQL. You’ll be greatly rewarded.

If you’re currently a savvy PostgreSQL user or a weather-beaten DBA, much of the material in this book should be familiar terrain, but you’ll be sure to pick up some pointers and shortcuts introduced in newer versions of PostgreSQL. Perhaps you’ll even find the hidden gem that eluded you. If nothing else, this book is at least ten times lighter than the PostgreSQL manual.

Not using PostgreSQL yet? This book is propaganda—the good kind. Each day you continue to use a database with limited SQL capabilities, you handicap yourself. Each day that you’re wedded to a proprietary system, you’re bleeding dollars.

Finally, if your work has nothing to do with databases or IT, or if you’ve just graduated from kindergarten, the cute picture of the elephant shrew on the cover should be worthy of the price alone.

PostgreSQL has a well-maintained set of online documentation: PostgreSQL manuals. We encourage you to bookmark it. The manual is available both as HTML and as a PDF. Hardcopy collector editions are available for purchase.

Other PostgreSQL resources include:

Planet PostgreSQL is an aggregator of PostgreSQL blogs. You’ll find PostgreSQL core developers and general users showcasing new features, novel ways to use existing ones, and reporting of bugs that have yet to be patched.

PostgreSQL Wiki provides tips and tricks for managing various facets of the database and migrating from other databases.

PostgreSQL Books is a list of books about PostgreSQL.

PostGIS in Action Books is the website for the books we’ve written on PostGIS, the spatial extender for PostgreSQL, and more recently pgRouting, another PostgreSQL extension that provides network routing capabilities useful for building driving apps.

For elements in parentheses, we gravitate toward placing the open parenthesis on the same line as the preceding element and the closing parenthesis on a line by itself. This is a classic C formatting style that we like because it cuts down on the number of blank lines:

function(
    Welcome to PostgreSQL
);

We also remove gratuitous spaces in screen output, so if the formatting of your results doesn’t match ours exactly, don’t fret.

We omit the space after a serial comma for short elements. For example, ('a','b','c').

The SQL interpreter treats tabs, newlines, and carriage returns as whitespace. In our code, we generally use spaces for indentation, not tabs. Make sure that your editor doesn’t automatically remove tabs, newlines, and carriage returns or convert them to something other than spaces.

After copying and pasting, if you find your code not working, check the copied code to make sure it looks like what we have in the listing.

We use examples based on both Linux and Windows. Path notations differ between the two, namely the use of solidus (/) versus reverse solidus (\). Even on Windows, always use the Linux solidus: /, not \. You may see a path such as /postgresql_book/somefile.csv. Such paths are always relative to the root of your server. If you are on Windows, you must include the drive letter: C:/postgresql_book/somefile.csv.

The following typographical conventions are used in this book:

Italic: Indicates new terms, URLs, email addresses, filenames, and file extensions.

Constant width: Used for program listings. Used within paragraphs, where needed for clarity, to refer to programming elements such as variables, functions, databases, data types, environment variables, statements, and keywords.

Constant width bold: Shows commands or other text that should be typed literally by the user.

Constant width italic: Shows text that should be replaced with user-supplied values or by values determined by context.

This icon signifies a tip, suggestion, or general note.

This icon indicates a warning or caution.

Code and data examples are available for download at http://www.postgresonline.com/downloads/postgresql_book_3e.zip.

This book is here to help you get your job done. In general, you may use the code in this book in your programs and documentation. You do not need to contact us for permission unless you’re reproducing a significant portion of the code. For example, writing a program that uses several chunks of code from this book does not require permission. Selling or distributing a CD-ROM of examples from O’Reilly books does require permission. Answering a question by citing this book and quoting example code does not require permission. Incorporating a significant amount of example code from this book into your product’s documentation does require permission.

We appreciate, but do not require, attribution. An attribution usually includes the title, author, publisher, and ISBN. For example: “PostgreSQL: Up and Running, Third Edition by Regina Obe and Leo Hsu (O’Reilly). Copyright 2018 Regina Obe and Leo Hsu, 978-1-491-96341-8.”

If you feel your use of code examples falls outside fair use or the permission given above, feel free to contact us at permissions@oreilly.com.


Please address comments and questions concerning this book to the publisher:

Please submit errata using the book’s errata page.

The companion site for this book is at http://bit.ly/postgresql-up-and-running-3e.

To contact the authors, send email to lr@pcorp.us.

To comment or ask technical questions to the publisher, send email to bookquestions@oreilly.com.

For more information about our books, courses, conferences, and news, see our website at http://www.oreilly.com.


PostgreSQL is an extremely powerful piece of software that introduces features you may not have seen before. Some of the features are also present in other well-known database engines, but under different names. This chapter lays out the main concepts you should know when starting to attack PostgreSQL documentation, and mentions some related terms in other databases.

We begin by pointing you to resources for downloading and installing PostgreSQL. Next, we provide an overview of indispensable administration tools followed by a review of PostgreSQL nomenclature. PostgreSQL 10 was recently released. We’ll highlight some of the new features therein. We close with resources to turn to when you need additional guidance and to submit bug reports.

PostgreSQL is an enterprise-class relational database management system, on par with the very best proprietary database systems: Oracle, Microsoft SQL Server, and IBM DB2, just to name a few. PostgreSQL is special because it’s not just a database: it’s also an application platform, and an impressive one at that.

PostgreSQL is fast. In benchmarks, PostgreSQL either exceeds or matches the performance of many other databases, both open source and proprietary.

PostgreSQL invites you to write stored procedures and functions in numerous programming languages. In addition to the prepackaged languages of C, SQL, and PL/pgSQL, you can easily enable support for additional languages such as PL/Perl, PL/Python, PL/V8 (aka PL/JavaScript), PL/Ruby, and PL/R. This support for a wide variety of languages allows you to choose the language with constructs that can best solve the problem at hand. For instance, use R for statistics and graphing, Python for calling web services, the Python SciPy library for scientific computing, and PL/V8 for validating data, processing strings, and wrangling with JSON data. Easier yet, find a freely available function that you need, find out the language that it’s written in, enable that specific language in PostgreSQL, and copy the code. No one will think less of you.

Most database products limit you to a predefined set of data types: integers, texts, Booleans, etc. Not only does PostgreSQL come with a larger built-in set than most, but you can define additional data types to suit your needs. Need complex numbers? Create a composite type made up of two floats. Have a triangle fetish? Create a coordinate type, then create a triangle type made up of three coordinate pairs. A dozenal activist? Create your own duodecimal type. Innovative types are only as useful as the operators and functions that support them. So once you’ve created your special number types, don’t forget to define basic arithmetic operations for them. Yes, PostgreSQL will let you customize the meaning of the symbols (+,-,/,*). Whenever you create a type, PostgreSQL automatically creates a companion array type for you. If you created a complex number type, arrays of complex numbers are available to you without additional work.
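To make the complex number idea concrete, here is a minimal sketch. The names complex, complex_add, and the fields r and i are our own picks for illustration, not anything PostgreSQL ships with. It defines a composite type, binds the + symbol to an addition function, and shows that the companion array type comes along for free.

```sql
-- Hypothetical names throughout: complex, complex_add, and the fields r and i.
CREATE TYPE complex AS (r double precision, i double precision);

-- Addition of two complex numbers, written as a plain SQL function.
CREATE FUNCTION complex_add(a complex, b complex) RETURNS complex AS
$$
    SELECT ROW((a).r + (b).r, (a).i + (b).i)::complex;
$$
LANGUAGE sql IMMUTABLE;

-- Bind the + symbol to that function for this pair of operand types.
CREATE OPERATOR + (
    LEFTARG    = complex,
    RIGHTARG   = complex,
    PROCEDURE  = complex_add,
    COMMUTATOR = +
);

-- Use the new operator.
SELECT ROW(1, 2)::complex + ROW(3, 4)::complex AS total;

-- The companion array type complex[] exists without any extra work.
SELECT ARRAY[ROW(1, 2)::complex, ROW(3, 4)::complex] AS numbers;
```

Subtraction, multiplication, and division would follow the same pattern: one function per operation, then one CREATE OPERATOR statement to attach the symbol.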

PostgreSQL also automatically creates types from any tables you define. For instance, create a table of dogs with columns such as breed, cuteness, and barkiness. Behind the scenes, PostgreSQL maintains a dogs data type for you. This amazingly useful bridge between the relational world and the object world means that you can treat data elements in a way that’s convenient for the task at hand. You can create functions that work on one object at a time or functions that work on sets of objects at a time. Many third-party extensions for PostgreSQL leverage custom types to achieve performance gains, provide domain-specific constructs for shorter and more maintainable code, and accomplish feats you can only fantasize about with other database products.
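The dogs example might look like the following sketch. The column types, sample rows, and function names (dog_summary, quiet_dogs) are assumptions made for illustration. The point is that the table name doubles as a type name, both for a function that takes one row and for a function that returns a set of rows.

```sql
-- Hypothetical table; the column names follow the example in the text.
CREATE TABLE dogs (
    breed     text,
    cuteness  integer,
    barkiness integer
);

INSERT INTO dogs (breed, cuteness, barkiness)
VALUES ('corgi', 9, 6), ('beagle', 8, 9);

-- A function that accepts one whole row, typed as the table's own row type.
CREATE FUNCTION dog_summary(d dogs) RETURNS text AS
$$
    SELECT (d).breed || ': cuteness ' || (d).cuteness
           || ', barkiness ' || (d).barkiness;
$$
LANGUAGE sql STABLE;

-- Pass each row to the function as a single object.
SELECT dog_summary(d) FROM dogs AS d;

-- A function that returns a set of those objects.
CREATE FUNCTION quiet_dogs(max_barkiness integer) RETURNS SETOF dogs AS
$$
    SELECT * FROM dogs WHERE barkiness <= max_barkiness;
$$
LANGUAGE sql STABLE;

SELECT * FROM quiet_dogs(7);
```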

Our principal advice is this: don’t treat databases as dumb storage. A database such as PostgreSQL can be a full-fledged application platform. With a robust database, everything else is eye candy. Once you’re versant in SQL, you’ll be able to accomplish in seconds what would take a casual programmer hours, both in coding and running time.

In recent years, we’ve witnessed an upsurge of NoSQL movements (though much of it could be hype). Although PostgreSQL is fundamentally relational, you’ll find plenty of facilities to handle nonrelational data. The ltree extension to PostgreSQL has been around since time immemorial and provides support for hierarchical, tree-like data. The hstore extension lets you store key-value pairs. JSON and JSONB types allow storage of documents similar to MongoDB. In many ways, PostgreSQL accommodated NoSQL before the term was even coined!
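A quick taste of these nonrelational facilities, as a sketch: it assumes the hstore and ltree extension files are installed on your server (they are part of the standard contrib set), and the literal values are made up.

```sql
-- Enable the extensions in the current database.
CREATE EXTENSION IF NOT EXISTS hstore;
CREATE EXTENSION IF NOT EXISTS ltree;

-- Key-value pairs with hstore: fetch one value by key.
SELECT 'color => red, size => large'::hstore -> 'color' AS color;

-- Documents with jsonb: extract a field as text and test for containment.
SELECT doc ->> 'name'              AS name,
       doc @> '{"tags": ["db"]}'   AS is_db
FROM (
    SELECT '{"name": "PostgreSQL", "tags": ["db", "open source"]}'::jsonb AS doc
) AS t;

-- Hierarchies with ltree: is the path a descendant of Top.Science?
SELECT 'Top.Science.Astronomy'::ltree <@ 'Top.Science'::ltree AS is_descendant;
```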

PostgreSQL just celebrated its 20th birthday, dating from its christening to PostgreSQL from Postgres95. The PostgreSQL codebase itself dates back well before that, to 1986. PostgreSQL is supported on all major operating systems: Linux, Unix, Windows, and Mac. Every year brings a new major release, offering enhanced performance along with features that push the envelope of what’s possible in a database offering.

Finally, PostgreSQL is open source with a generous licensing policy. PostgreSQL is supported by a community of developers and users where profit maximization is not the ultimate pursuit. If you want features, you’re free to contribute, or at least vocalize. If you want to customize and experiment, no one is going to sue you. You, the mighty user, make PostgreSQL what it is.

In the end, you will wonder why you ever used any other database, because PostgreSQL does everything you could hope for and does it for free. No more reading the licensing cost fine print of those other databases to figure out how many dollars you need to spend if you have eight cores on your virtualized servers with X number of concurrent connections. No more fretting about how much more the next upgrade will cost you.

Given all the proselytizing thus far, it’s only fair that we point out situations when PostgreSQL might not be suitable.

The typical installation size of PostgreSQL without any extensions is more than 100 MB. This rules out PostgreSQL for a database on a small device or as a simple cache store. Many lightweight databases abound that could better serve your needs without the larger footprint.

Given its enterprise stature, PostgreSQL doesn’t take security lightly. If you’re developing lightweight applications where you’re managing security at the application level, PostgreSQL security with its sophisticated role and permission management could be overkill. You might consider a single-user database such as SQLite or a database such as Firebird that can be run either in client-server mode or in single-user embedded mode.

All that said, it is a common practice to combine PostgreSQL with other database types. One common combination you will find is using Redis or Memcached to cache PostgreSQL query results. As another example, SQLite can be used to store a disconnected set of data for offline querying when PostgreSQL is the main database backend for an application.

Finally, many hosting companies don’t offer PostgreSQL on a shared hosting environment, or they offer an outdated version. Most still gravitate toward the impotent MySQL. To a web designer, for whom the database is an afterthought, MySQL might suffice. But as soon as you learn to write any SQL beyond a single-table select and simple joins, you’ll begin to sense the shortcomings of MySQL. Since the first edition of this book, virtualization has resown the landscape of commercial hosting, so having your own dedicated server is no longer a luxury, but the norm. And when you have your own server, you’re free to choose what you wish to have installed. The popularity of cloud computing, such as Platform as a Service (PaaS) and Database as a Service (DBaaS), also bodes well for PostgreSQL. Most of the major PaaS and DBaaS providers offer PostgreSQL, notably Heroku, Engine Yard, Red Hat OpenShift, Amazon RDS for PostgreSQL, Google Cloud SQL for PostgreSQL, Amazon Aurora for PostgreSQL, and Microsoft Azure for PostgreSQL.

Years ago, if you wanted PostgreSQL, you had to compile it from source. Thankfully, those days are long gone. Granted, you can still compile from source, but using packaged installers won’t make you any less cool. A few clicks or keystrokes, and you’re on your way.

If you’re installing PostgreSQL for the first time and have no existing database to upgrade, you should install the latest stable release version for your OS. The downloads page for the PostgreSQL core distribution maintains a listing of places where you can download PostgreSQL binaries for various OSes. In Appendix A, you’ll find useful installation instructions and links to additional custom distributions.

Four tools widely used with PostgreSQL are psql, pgAdmin, phpPgAdmin, and Adminer. PostgreSQL core developers actively maintain the first three; therefore, they tend to stay in sync with PostgreSQL releases. Adminer, while not specific to PostgreSQL, is useful if you also need to manage other relational databases: SQLite, MySQL, SQL Server, or Oracle. Beyond the four that we mentioned, you can find plenty of other excellent administration tools, both open source and proprietary.

psql is a command-line interface for running queries and is included in all distributions of PostgreSQL (see “psql Interactive Commands”). psql has some unusual features, such as an import and export command for delimited files (CSV or tab), and a minimalistic report writer that can generate HTML output. psql has been around since the introduction of PostgreSQL and is the tool of choice for many expert users, for people working in consoles without a GUI, or for running common tasks in shell scripts. Newer converts favor GUI tools and wonder why the older generation still clings to the command line.

pgAdmin is a popular, free GUI tool for PostgreSQL. Download it separately from PostgreSQL if it isn’t already packaged with your installer. pgAdmin runs on all OSes supported by PostgreSQL.

Even if your database lives on a console-only Linux server, go ahead and install pgAdmin on your workstation, and you’ll find yourself armed with a fantastic GUI tool.

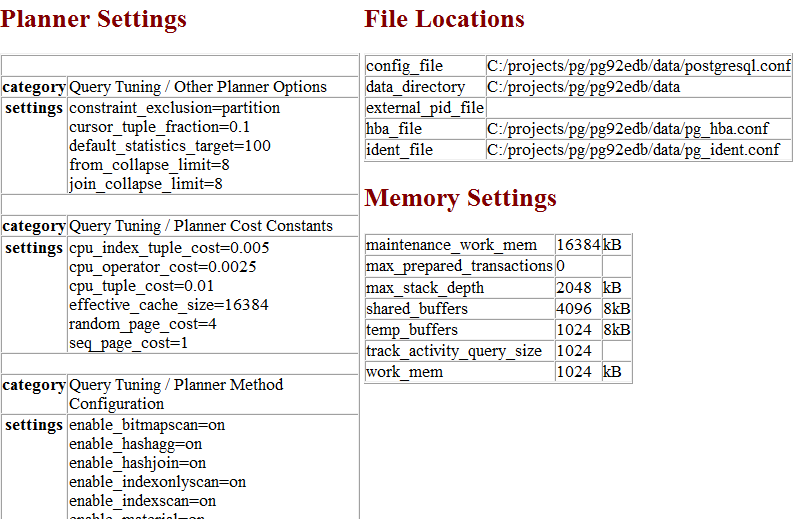

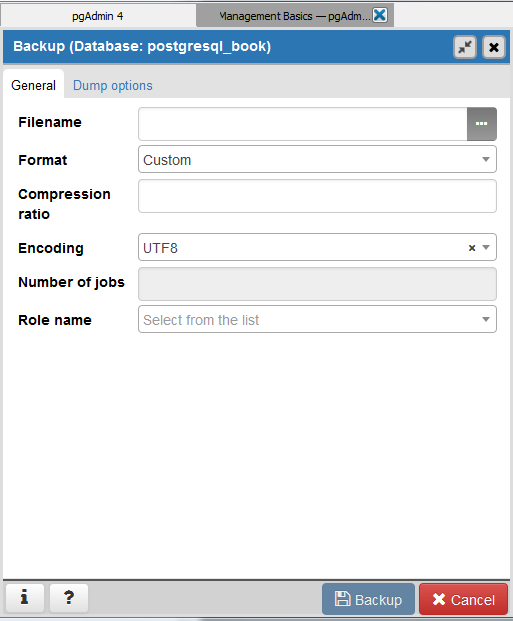

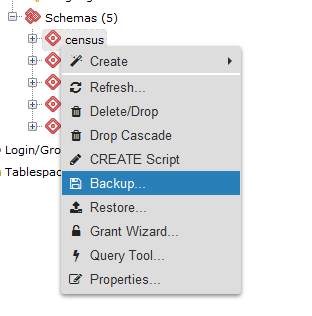

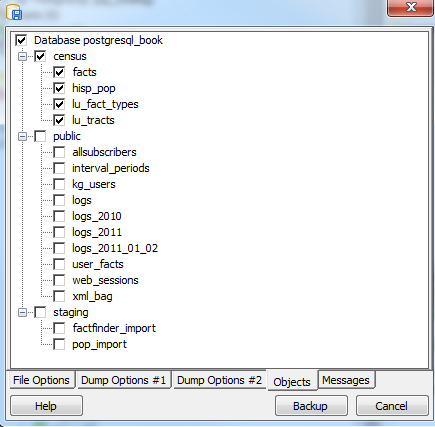

pgAdmin recently entered its fourth release, dubbed pgAdmin4. pgAdmin4 is a complete rewrite of pgAdmin3 that sports a desktop as well as a web server application version utilizing Python. pgAdmin4 is currently at version 1.5. It made its debut at the same time as PostgreSQL 9.6 and is available as part of several PostgreSQL distributions. You can run pgAdmin4 as a desktop application or via a browser interface.

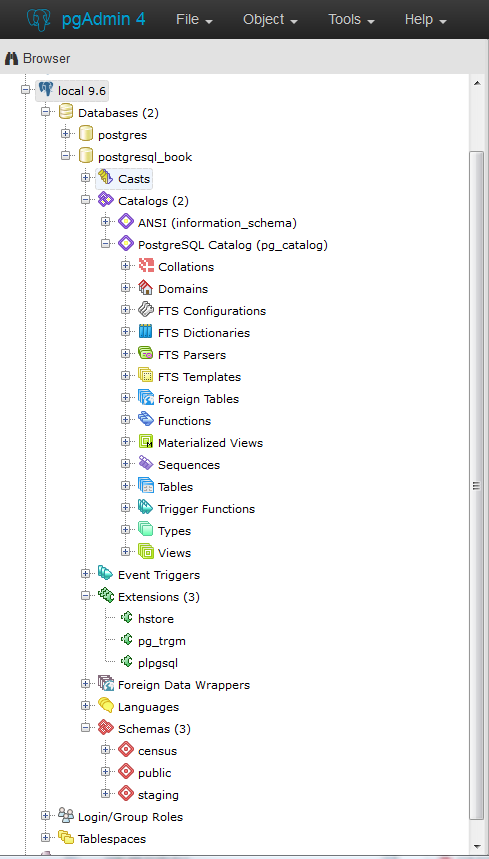

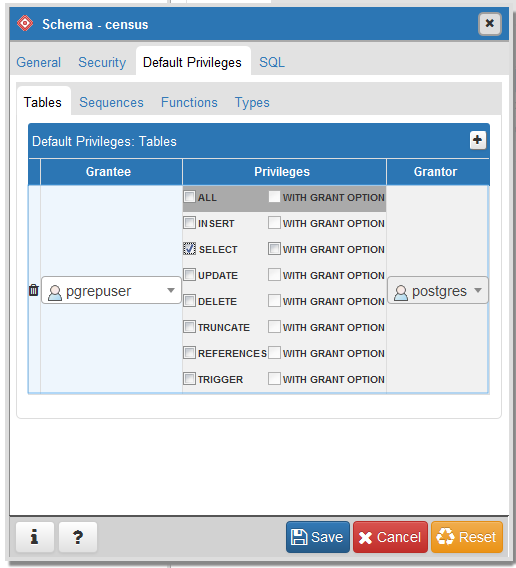

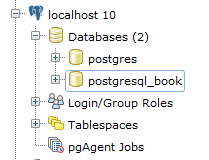

An example of pgAdmin4 appears in Figure 1-1.

If you’re unfamiliar with PostgreSQL, you should definitely start with pgAdmin. You’ll get a bird’s-eye view and appreciate the richness of PostgreSQL just by exploring everything you see in the main interface. If you’re deserting Microsoft SQL Server and are accustomed to Management Studio, you’ll feel right at home.

pgAdmin4 still has a couple of pain points compared to pgAdmin3, but its feature set is ramping up quickly and in some ways already surpasses pgAdmin3. That said, if you are a long-time user of pgAdmin3, you might want to go for the pgAdmin3 Long Term Support (LTS) version supported and distributed by BigSQL, and spend a little time test-driving pgAdmin4 before you fully commit to it. But keep in mind that the pgAdmin project is fully committed to pgAdmin4 and will no longer make changes to pgAdmin3.

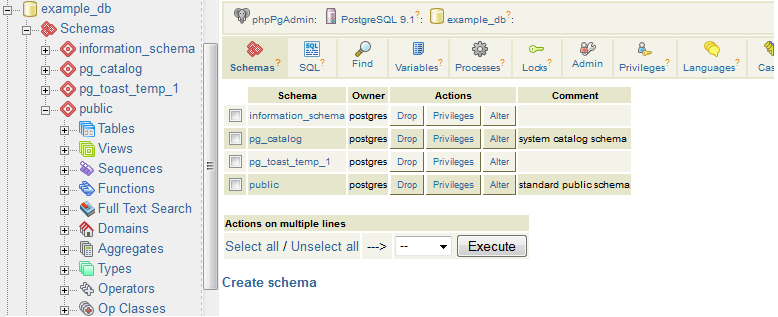

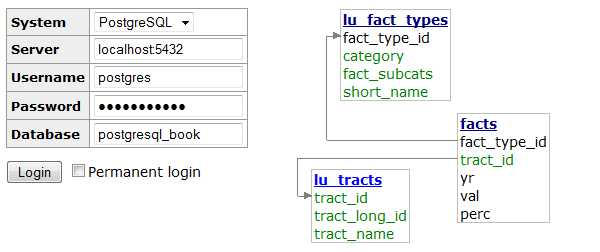

phpPgAdmin, pictured in Figure 1-2, is a free, web-based administration tool patterned after the popular phpMyAdmin. phpPgAdmin differs from phpMyAdmin by including ways to manage PostgreSQL objects such as schemas, procedural languages, casts, operators, and so on. If you’ve used phpMyAdmin, you’ll find phpPgAdmin to have the same look and feel.

If you manage other databases besides PostgreSQL and are looking for a unified tool, Adminer might fit the bill. Adminer is a lightweight, open source PHP application with options for PostgreSQL, MySQL, SQLite, SQL Server, and Oracle, all delivered through a single interface.

One unique feature of Adminer we’re impressed with is the relational diagrammer that can produce a schematic layout of your database schema, along with a linear representation of foreign key relationships. Another hassle-reducing feature is that you can deploy Adminer as a single PHP file.

Figure 1-3 is a screenshot of the login screen and a snippet from the diagrammer output. Many users stumble in the login screen of Adminer because it doesn’t include a separate text box for indicating the port number. If PostgreSQL is listening on the standard 5432 port, you need not worry. But if you use some other port, append the port number to the server name with a colon, as shown in Figure 1-3.

Adminer is sufficient for straightforward querying and editing, but because it’s tailored to the lowest common denominator among database products, you won’t find management applets that are specific to PostgreSQL for such tasks as creating new users, granting rights, or displaying permissions. Adminer also treats each schema as a separate database, which severely reduces the usefulness of the relational diagrammer if your relationships cross schema boundaries. If you’re a DBA, stick to pgAdmin or psql.

So you installed PostgreSQL, fired up pgAdmin, and expanded its browse tree. Before you is a bewildering display of database objects, some familiar and some completely foreign. PostgreSQL has more database objects than most other relational database products (and that’s before add-ons). You’ll probably never touch many of these objects, but if you dream up something new, more likely than not it’s already implemented using one of those esoteric objects. This book is not even going to attempt to describe all that you’ll find in a standard PostgreSQL install. With PostgreSQL churning out features at breakneck speed, we can’t imagine any book that could possibly do this. We limit our quick overview to those objects that you should be familiar with:

Schemas are part of the ANSI SQL standard. They are the immediate next level of organization within each database. If you think of the database as a country, schemas would be the individual states (or provinces, prefectures, or departments, depending on the country). Most database objects first belong to a schema, which belongs to a database. When you create a new database, PostgreSQL automatically creates a schema named public to store objects that you create. If you have few tables, using public would be fine. But if you have thousands of tables, you should organize them into different schemas.
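As a small sketch of working with schemas (the schema name census and the table facts are hypothetical), you can either qualify object names with the schema or place the schema on the search path:

```sql
-- Hypothetical schema and table names.
CREATE SCHEMA census;

CREATE TABLE census.facts (
    fact_id serial PRIMARY KEY,
    val     numeric
);

-- Qualify the table with its schema name...
SELECT count(*) FROM census.facts;

-- ...or put the schema on the search path for this session and drop the prefix.
SET search_path = census, public;
SELECT count(*) FROM facts;
```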

Tables are the workhorses of any database. In PostgreSQL, tables are first-class citizens of their respective schemas, which in turn are citizens of the database.

PostgreSQL tables have two remarkable talents: first, they are inheritable. Table inheritance streamlines your database design and can save you endless lines of looping code when querying tables with nearly identical structures. Second, whenever you create a table, PostgreSQL automatically creates an accompanying custom data type.
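Here is a minimal sketch of table inheritance, using made-up table names (logs and logs_2017) and columns. A query against the parent picks up rows stored in its children, which is what spares you the looping code.

```sql
-- Hypothetical parent table.
CREATE TABLE logs (
    log_id      serial PRIMARY KEY,
    user_name   varchar(50),
    description text,
    log_ts      timestamptz NOT NULL DEFAULT current_timestamp
);

-- The child inherits every column of the parent; constraints such as the
-- primary key are not inherited, so we declare one again.
CREATE TABLE logs_2017 (
    PRIMARY KEY (log_id)
) INHERITS (logs);

INSERT INTO logs_2017 (user_name, description)
VALUES ('alice', 'logged in');

-- Querying the parent also returns rows stored in its children.
SELECT log_id, user_name, description FROM logs;

-- ONLY restricts the query to rows stored in the parent itself.
SELECT log_id, user_name, description FROM ONLY logs;
```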

Almost all relational database products offer views as a level of abstraction from tables. In a view, you can query multiple tables and present additional derived columns based on complex calculations. Views are generally read-only, but PostgreSQL allows you to update the underlying data by updating the view, provided that the view draws from a single table. To update data from views that join multiple tables, you need to create a trigger against the view. Version 9.3 introduced materialized views, which cache data to speed up commonly used queries at the sacrifice of having the most up-to-date data. See “Materialized Views”.
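The following sketch shows both flavors of view. The table sales and the view names are hypothetical. The first view draws from a single table, so on 9.3 or later PostgreSQL lets you update through it; the materialized view stores its result and has to be refreshed to catch up with the underlying data.

```sql
-- Hypothetical table and sample data.
CREATE TABLE sales (
    sale_id  serial PRIMARY KEY,
    category text,
    amount   numeric
);

INSERT INTO sales (category, amount)
VALUES ('books', 120), ('books', 40), ('music', 300);

-- A simple single-table view: updatable without any trigger.
CREATE VIEW vw_big_sales AS
SELECT sale_id, category, amount
FROM sales
WHERE amount > 100;

UPDATE vw_big_sales SET amount = amount + 10 WHERE category = 'books';

-- A materialized view caches the result of the query at creation time.
CREATE MATERIALIZED VIEW mv_category_totals AS
SELECT category, sum(amount) AS total
FROM sales
GROUP BY category;

-- Later changes to sales show up only after a refresh.
REFRESH MATERIALIZED VIEW mv_category_totals;

SELECT category, total FROM mv_category_totals ORDER BY category;
```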

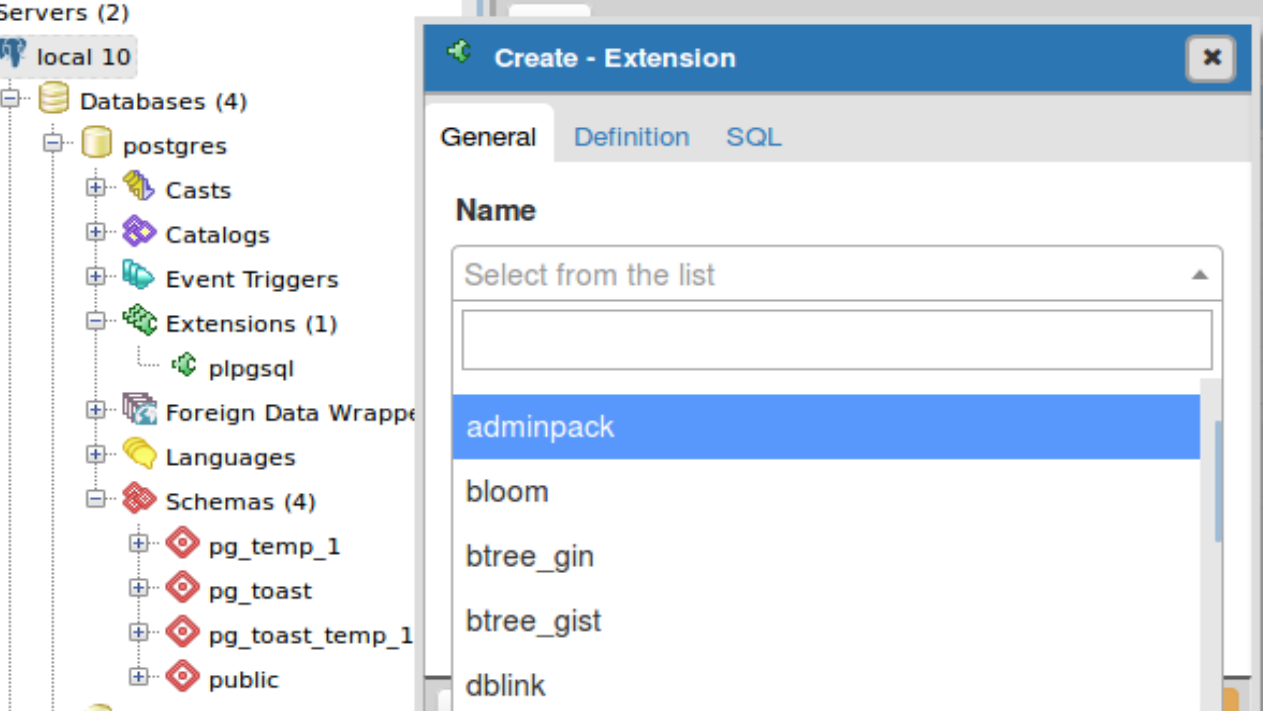

Extensions allow developers to package functions, data types, casts, custom index types, tables, attribute variables, etc., for installation or removal as a unit. Extensions are similar in concept to Oracle packages and have been the preferred method for distributing add-ons since PostgreSQL 9.1. You should follow the developer’s instructions on how to install the extension files onto your server, which usually involves copying binaries into your PostgreSQL installation folders and then running a set of scripts. Once done, you must enable the extension for each database separately. You shouldn’t enable an extension in your database unless you need it. For example, if you need advanced text search in only one database, enable fuzzystrmatch for that one only.

When you enable extensions, you choose the schemas where all constituent objects will reside. Accepting the default will place everything from the extension into the public schema, littering it with potentially thousands of new objects. We recommend that you create a separate schema that will house all extensions. For an extension with many objects, we suggest that you create a separate schema devoted entirely to it. Optionally, you can append the name of any schemas you add to the search_path variable of the database so you can refer to the extension’s functions without having to prepend the schema name. Some extensions, especially ones that install a new procedural language (PL), will dictate the installation schema. For example, PL/V8 must be installed in the pg_catalog schema.
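A sketch of that advice follows. The schema name contrib and the database name mydb are our own choices for illustration, and the example assumes the fuzzystrmatch files are already installed on the server and that the extension is not yet enabled in the database.

```sql
-- A dedicated schema to house extensions (the name is hypothetical).
CREATE SCHEMA IF NOT EXISTS contrib;

-- Enable the extension in the current database, placing its objects in contrib.
CREATE EXTENSION fuzzystrmatch SCHEMA contrib;

-- Without the schema on the search path, qualify the function name.
SELECT contrib.soundex('Robert');

-- With the schema appended to the search path, the prefix is unnecessary.
SET search_path = "$user", public, contrib;
SELECT soundex('Rupert');

-- To make the setting stick for every new connection to a database
-- (mydb is a placeholder for your database name):
-- ALTER DATABASE mydb SET search_path = "$user", public, contrib;
```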

Extensions may depend on other extensions. Prior to PostgreSQL 9.6, you had to know all dependent extensions and install them first. With 9.6, you simply need to add the CASCADE option and PostgreSQL will take care of the rest. For example:

CREATE EXTENSION postgis_tiger_geocoder CASCADE;

first installs the dependent extensions postgis and fuzzystrmatch, if not present.

You can program your own custom functions to handle data manipulation, perform complex calculations, or wrap similar functionality. PostgreSQL comes stocked with thousands of functions, which you can view in the postgres database that is part of every install. PostgreSQL functions can return scalar values, arrays, single records, or sets of records. Other database products refer to functions that manipulate data as stored procedures. PostgreSQL does not make this distinction.

Create functions using a PL. PostgreSQL installs three by default: SQL, PL/pgSQL, and C. You can easily install additional languages using the extension framework or the CREATE PROCEDURAL LANGUAGE command. Languages currently in vogue are PL/Python, PL/V8 (JavaScript), and PL/R. We’ll show you plenty of examples in Chapter 8.
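As a small preview, here is a sketch of two functions using languages that are available by default. The function names and parameters (add_tax, squares) are invented for illustration. The first returns a scalar and is written in SQL; the second returns a set of records and is written in PL/pgSQL.

```sql
-- A scalar function in plain SQL. The default rate is an arbitrary example value.
CREATE FUNCTION add_tax(amount numeric, rate numeric DEFAULT 0.07)
RETURNS numeric AS
$$
    SELECT round(amount * (1 + rate), 2);
$$
LANGUAGE sql IMMUTABLE;

-- A set-returning function in PL/pgSQL.
CREATE FUNCTION squares(n integer)
RETURNS TABLE (i integer, i_squared integer) AS
$$
BEGIN
    -- Emit one row per integer from 1 to n.
    RETURN QUERY
    SELECT g, g * g FROM generate_series(1, n) AS g;
END;
$$
LANGUAGE plpgsql IMMUTABLE;

-- A scalar function can be called anywhere an expression is allowed.
SELECT add_tax(100);

-- A set-returning function can be queried like a table.
SELECT i, i_squared FROM squares(3);
```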

Operators are nothing more than symbolically named aliases such as = or && for functions. In PostgreSQL, you can invent your own. This is often the case when you create custom data types. For example, if you create a custom data type of complex numbers, you’d probably want to also create operators (+,-,*,/) to handle arithmetic on them.

Foreign tables are virtual tables linked to data outside a PostgreSQL database. Once you’ve configured the link, you can query them like any other tables. Foreign tables can link to CSV files, a PostgreSQL table on another server, a table in a different product such as SQL Server or Oracle, a NoSQL database such as Redis, or even a web service such as Twitter or Salesforce.

Foreign data wrappers (FDWs) facilitate the magic handshake between PostgreSQL and external data sources. FDW implementations in PostgreSQL follow the SQL/Management of External Data (MED) standard.

Many charitable programmers have already developed FDWs for popular data sources. You can try your hand at creating your own FDWs as well. (Be sure to publicize your success so the community can reap the fruits of your toil.) Install FDWs using the extension framework. Once installed, pgAdmin lists them under a node called Foreign Data Wrappers.
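To give a feel for the moving parts, here is a sketch that links a CSV file as a foreign table using file_fdw, an FDW that ships with PostgreSQL. The server name, table name, and column list are assumptions; the columns must be adjusted to match whatever your file actually contains, and the file must be readable by the PostgreSQL server process.

```sql
-- Enable the file-based foreign data wrapper in the current database.
CREATE EXTENSION IF NOT EXISTS file_fdw;

-- A foreign server is required even though the "server" is just the filesystem.
CREATE SERVER book_files FOREIGN DATA WRAPPER file_fdw;

-- Hypothetical column layout for the CSV file.
CREATE FOREIGN TABLE somefile (
    id    integer,
    label text
)
SERVER book_files
OPTIONS (
    filename '/postgresql_book/somefile.csv',
    format   'csv',
    header   'true'
);

-- Query it like any other table.
SELECT id, label FROM somefile LIMIT 5;
```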

You will find triggers in all enterprise-level databases; triggers detect data-change events. When PostgreSQL fires a trigger, you have the opportunity to execute trigger functions in response. A trigger can run in response to particular types of statements or in response to changes to particular rows, and can fire before or after a data-change event.

In pgAdmin, to see which tables have triggers, drill down to the table level. Pick the table of interest and look under triggers.

Create trigger functions to respond to firing of triggers. Trigger functions differ from regular functions in that they have access to special variables that store the data both before and after the triggering event. This allows you to reverse data changes made by the event during the execution of the trigger function. Because of this, trigger functions are often used to write complex validation routines that are beyond what can be implemented using check constraints.

Trigger technology is evolving rapidly in PostgreSQL. Starting in 9.0, a WHEN clause lets you specify a Boolean condition, which is tested to see whether the trigger should be fired. Version 9.0 also introduced the UPDATE OF clause, which allows you to specify which column(s) to monitor for changes. When data in monitored columns changes, the trigger fires. In 9.1, a data change in a view can fire a trigger. Since 9.3, data definition language (DDL) events can fire triggers. For a list of triggerable DDL events, refer to the Event Trigger Firing Matrix. pgAdmin lists DDL triggers under the Event Triggers branch. Finally, as of version 9.4, you may place triggers against foreign tables.
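The pieces fit together roughly as in the following sketch. The table products, its columns, and the function and trigger names are all hypothetical. The trigger function validates the new value and stamps the row, and the trigger itself uses both UPDATE OF and a WHEN condition so that it fires only when the monitored column really changes.

```sql
-- Hypothetical table.
CREATE TABLE products (
    product_id       serial PRIMARY KEY,
    price            numeric NOT NULL,
    price_changed_at timestamptz
);

-- Trigger functions take no arguments and return the special type trigger.
CREATE FUNCTION trig_price_check() RETURNS trigger AS
$$
BEGIN
    -- OLD and NEW hold the row before and after the triggering event.
    IF NEW.price < 0 THEN
        RAISE EXCEPTION 'price cannot be negative: %', NEW.price;
    END IF;
    NEW.price_changed_at := current_timestamp;
    RETURN NEW;
END;
$$
LANGUAGE plpgsql;

-- Fire only for updates that touch price, and only when the value differs.
CREATE TRIGGER trig_products_price
BEFORE UPDATE OF price ON products
FOR EACH ROW
WHEN (OLD.price IS DISTINCT FROM NEW.price)
EXECUTE PROCEDURE trig_price_check();

INSERT INTO products (price) VALUES (10);

-- This update passes validation and stamps price_changed_at.
UPDATE products SET price = 12 WHERE product_id = 1;
```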

Catalogs are system schemas that store PostgreSQL built-in functions and metadata. Every database contains two catalogs: pg_catalog, which holds all functions, tables, system views, casts, and types packaged with PostgreSQL; and information_schema, which offers views exposing metadata in a format dictated by the ANSI SQL standard.

PostgreSQL practices what it preaches. You will find that PostgreSQL itself is built atop a self-replicating structure. All settings to fine-tune servers are kept in system tables that you’re free to query and modify. This gives PostgreSQL a level of extensibility (read hackability) impossible to attain by proprietary database products. Go ahead and take a close look inside the pg_catalog schema. You’ll get a sense of how PostgreSQL is put together. If you have superuser privileges, you are at liberty to make updates to the pg_catalog directly (and screw things up royally).

The information_schema catalog is one you’ll find in MySQL and SQL Server as well. The most commonly used views in the PostgreSQL information_schema are columns, which lists all table columns in a database; tables, which lists all tables (including views) in a database; and views, which lists all views and the associated SQL to rebuild each view.
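The three views just mentioned can be queried like any other relation. The following is a small sketch; the public schema filter is an assumption about where your own objects live.

```sql
-- All columns of user tables, skipping the two system catalogs.
SELECT table_schema, table_name, column_name, data_type
FROM information_schema.columns
WHERE table_schema NOT IN ('pg_catalog', 'information_schema')
ORDER BY table_schema, table_name, ordinal_position;

-- Tables and views; table_type distinguishes 'BASE TABLE' from 'VIEW'.
SELECT table_schema, table_name, table_type
FROM information_schema.tables
WHERE table_schema = 'public';

-- Views together with the SQL needed to rebuild them.
SELECT table_name, view_definition
FROM information_schema.views
WHERE table_schema = 'public';
```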

Type is short for data type. Every database product and every programming language has a set of types that it understands: integers, characters, arrays, blobs, etc. PostgreSQL has composite types, which are made up of other types. Think of complex numbers, polar coordinates, vectors, or tensors as examples.

Whenever you create a new table, PostgreSQL automatically creates a composite type based on the structure of the table. This allows you to treat table rows as objects in their own right. You’ll appreciate this automatic type creation when you write functions that loop through tables. pgAdmin doesn’t make the automatic type creation obvious because it does not list them under the types node, but rest assured that they are there.
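To see the automatically created composite type in action, here is a brief sketch. The pets table and its data are hypothetical.

```sql
-- Hypothetical table; creating it also creates a composite type named pets.
CREATE TABLE pets (pet_id integer PRIMARY KEY, name text, breed text);
INSERT INTO pets VALUES (1, 'Rex', 'pug');

-- Each row can be handled as a single value of type pets.
SELECT p FROM pets AS p;

-- Individual fields are reachable through the composite value.
SELECT (p).name FROM pets AS p;

-- The row type can be used in casts like any other type.
SELECT ROW(2, 'Bo', 'beagle')::pets;

-- The type is recorded in pg_type; typtype 'c' marks a composite type.
SELECT typname, typtype FROM pg_type WHERE typname = 'pets';
```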

Full text search (FTS) is a natural language–based search. This kind of search has some “intelligence” built in. Unlike regular expression search, FTS can match based on the semantics of an expression, not just its syntactical makeup. For example, if you’re searching for the word running in a long piece of text, you may end up with run, running, ran, runner, jog, sprint, dash, and so on. Three objects in PostgreSQL together support FTS: FTS configurations, FTS dictionaries, and FTS parsers. These objects exist to support the built-in Full Text Search engine packaged with PostgreSQL. For general use cases, the configurations, dictionaries, and parsers packaged with PostgreSQL are sufficient. But should you be working in a specific industry with specialized vocabulary and syntax rules such as pharmacology or organized crime, you can swap out the packaged FTS objects with your own. We cover FTS in detail in “Full Text Search”.
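A quick way to get a feel for FTS is to build a vector and match it against a query. This sketch assumes the stock english configuration that ships with PostgreSQL; the sample sentence is made up.

```sql
-- FTS configurations available in the current database.
SELECT cfgname FROM pg_ts_config;

-- The document is reduced to normalized lexemes with positions.
SELECT to_tsvector('english', 'She was running to the store') AS vec;

-- The query term is normalized the same way, so a search for
-- 'run' should match text that contains 'running'.
SELECT to_tsvector('english', 'She was running to the store')
       @@ to_tsquery('english', 'run') AS matches;
```

Which related words match depends on the dictionaries in the chosen configuration, so results with a custom configuration may differ.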

Casts prescribe how to convert from one data type to another. They are backed by functions that actually perform the conversion. In PostgreSQL, you can create your own casts and override or enhance the default casting behavior. For example, imagine you’re converting zip codes (which are five digits long in the US) to character from integer. You can define a custom cast that automatically prepends a zero when the zip is between 1000 and 9999.

Casting can be implicit or explicit. Implicit casts are automatic and usually expand from a more specific to a more generic type. When an implicit cast is not offered, you must cast explicitly.
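The following sketch shows explicit casts in both syntaxes and a way to list the casts PostgreSQL knows about. The zip code value is made up, and the padding is done here with lpad rather than with a custom cast.

```sql
-- Two equivalent ways of casting explicitly.
SELECT CAST('2011-01-11' AS date);
SELECT '2011-01-11'::date;

-- Zero-padding a zip code stored as an integer; yields '02109'.
SELECT lpad(2109::text, 5, '0');

-- Casts defined for integer sources. castcontext is 'i' for implicit,
-- 'a' for assignment-only, and 'e' for explicit-only casts.
SELECT castsource::regtype AS source,
       casttarget::regtype AS target,
       castcontext
FROM pg_cast
WHERE castsource = 'integer'::regtype;
```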

A sequence controls the autoincrementation of a serial data type. PostgreSQL automatically creates sequences when you define a serial column, but you can easily change the initial value, step, and next available value. Because sequences are objects in their own right, more than one table can share the same sequence object. This allows you to create a unique key value that can span tables. Both SQL Server and Oracle have sequence objects, but you must create them manually.

Rules are instructions to rewrite an SQL statement prior to execution. We’re not going to cover rules as they’ve fallen out of favor because triggers can accomplish the same things.
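As an illustration of one sequence feeding two tables, consider this sketch. The sequence and table names are hypothetical.

```sql
-- A standalone sequence with a custom start value and step.
CREATE SEQUENCE shared_id_seq START WITH 100 INCREMENT BY 5;

-- Two hypothetical tables drawing their keys from the same sequence.
CREATE TABLE invoices (
    id   bigint PRIMARY KEY DEFAULT nextval('shared_id_seq'),
    note text
);
CREATE TABLE receipts (
    id   bigint PRIMARY KEY DEFAULT nextval('shared_id_seq'),
    note text
);

INSERT INTO invoices (note) VALUES ('first');   -- receives id 100
INSERT INTO receipts (note) VALUES ('second');  -- receives id 105

-- Change the next available value.
ALTER SEQUENCE shared_id_seq RESTART WITH 1000;
```

Because both defaults call nextval on the same object, an id is never issued twice across the two tables.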

For each object, PostgreSQL makes available many attribute variables that you can set. You can set variables at the server level, at the database level, at the function level, and so on. You may encounter the fancy term GUC, which stands for grand unified configuration, but it means nothing more than configuration settings in PostgreSQL.

Every September a new PostgreSQL is released. With each new release comes greater stability, heightened security, better performance—and avant-garde features. The upgrade process itself gets easier with each new version. The lesson here? Upgrade. Upgrade often. For a summary chart of key features added in each release, refer to the PostgreSQL Feature Matrix.

If you’re using PostgreSQL 9.1 or below, upgrade now! Version 9.1 retired to end-of-life (EOL) status in September 2016. Details about PostgreSQL EOL policy can be found here: PostgreSQL Release Support Policy. EOL is not where you want to be. New security updates and fixes to serious bugs will no longer be available. You’ll need to hire specialized PostgreSQL core consultants to patch problems or to implement workarounds—probably not a cheap proposition, assuming you can even locate someone willing to undertake the work.

Regardless of which major version you are running, you should always keep up with the latest micro versions. An upgrade from, say, 9.1.17 to 9.1.21 requires no more than a file replacement and a restart. Micro versions only patch bugs. Nothing will stop working after a micro upgrade. Performing a micro upgrade can in fact save you much grief down the road.

PostgreSQL 10 is the latest stable release and was released in October 2017. Starting with PostgreSQL 10, the PostgreSQL project adopted a new versioning convention. In prior versions, major versions got a minor version number bump. For example, PostgreSQL 9.6 introduced some major new features that were not in its PostgreSQL 9.5 predecessor. In contrast, starting with PostgreSQL 10, major releases will have the first digit bumped. So major changes to PostgreSQL 10 will be called PostgreSQL 11. This is more in line with what other database vendors follow, such as SQLite, SQL Server, and Oracle.

Here are the key new features in 10:

There are new planner strategies for parallel queries: Parallel Bitmap Heap Scan, Parallel Index Scan, and others. These changes allow a wider range of queries to be parallelized. See “Parallelized Queries”.

Prior versions of PostgreSQL had streaming replication that replicates the whole server cluster. Slaves in streaming replication were read-only and could be used only for queries that don’t change data. Nor could they have tables of their own. Logical replication provides two features that streaming replication did not have. You can now replicate just a table or a database (no need for the whole cluster); since you are replicating only part of the data, the slaves can have their own set of data that is not involved in replication.

In prior versions, to_tsvector would work only with plain text when generating a full text vector. Now to_tsvector can understand the json and jsonb types, ignoring the keys in JSON and including only the values in the vector. The ts_headline function for json and jsonb was also introduced. It highlights matches in a json document during a tsquery. Refer to “Full Text Support for JSON and JSONB”.

XMLTABLE provides a simpler way of deconstructing XML into a standard table structure. This feature has existed for some time in Oracle and IBM DB2 databases. Refer to Example 5-41.

The FDW API can now run aggregations such as COUNT(*) or SUM on remote queries. postgres_fdw takes advantage of this new feature. Prior to version 10, any aggregation would require the local server to request all the data that needed aggregation and do the aggregation locally.

In prior versions, if you had a table you needed to partition but query as a single unit, you would utilize PostgreSQL table inheritance support. Using inheritance was cumbersome in that you had to write triggers to reroute data to the right partition when adding to the parent table. PostgreSQL 10 introduces the PARTITION BY construct. PARTITION BY allows you to create a parent table with no data, but with a defined partitioning formula. Now you can insert data into the parent table without the need to define triggers. Refer to “Partitioned Tables”.

Various speedups have been added.

CREATE STATISTICS is a new construct for creating statistics on multiple columns. Refer to Example 9-18.

A new IDENTITY qualifier in DDL table creation and ALTER statements provides a more standards-compliant way to designate a table column as an auto increment. Refer to Example 6-2.
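Several of the version 10 features listed above can be sketched in a few statements. All table, publication, and subscription names here are hypothetical, as is the connection string. The replication commands additionally assume two separate servers, with wal_level set to logical on the publisher.

```sql
-- Declarative partitioning: the parent holds no data itself.
CREATE TABLE logs (
    log_id bigint,
    log_ts timestamptz NOT NULL,
    msg    text
) PARTITION BY RANGE (log_ts);

CREATE TABLE logs_2017 PARTITION OF logs
    FOR VALUES FROM ('2017-01-01') TO ('2018-01-01');
CREATE TABLE logs_2018 PARTITION OF logs
    FOR VALUES FROM ('2018-01-01') TO ('2019-01-01');

-- Rows inserted into the parent are routed without any triggers.
INSERT INTO logs (log_id, log_ts, msg)
VALUES (1, '2017-06-15', 'lands in logs_2017'),
       (2, '2018-02-01', 'lands in logs_2018');

-- tableoid reveals which partition actually stores each row.
SELECT tableoid::regclass AS stored_in, log_id FROM logs ORDER BY log_id;

-- IDENTITY column as the standards-compliant auto increment.
CREATE TABLE tickets (
    ticket_id integer GENERATED ALWAYS AS IDENTITY PRIMARY KEY,
    subject   text
);
INSERT INTO tickets (subject) VALUES ('first ticket');

-- Logical replication of a single table.
-- Run on the publishing server:
CREATE PUBLICATION pub_tickets FOR TABLE tickets;

-- Run on the subscribing server, where a tickets table with the
-- same structure must already exist:
CREATE SUBSCRIPTION sub_tickets
    CONNECTION 'host=publisher.example.com dbname=mydb user=repl'
    PUBLICATION pub_tickets;
```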

PostgreSQL 9.6 was released in September 2016. PostgreSQL 9.6 is the last of the PostgreSQL 9+ series:

Up to now, PostgreSQL could not take advantage of multiple processor cores. In 9.6, the PostgreSQL engine can distribute certain types of queries across multiple cores and processors. Qualified queries include those with sequential scans, some joins, and some aggregates. However, queries that involve changing data such as deletes, inserts, and updates are not parallelizable. Parallelization is a work in progress with the eventual hope that all queries will take advantage of multiple processor cores. See “Parallelized Queries”.

Use the distance operator <-> in a full text search query to indicate how far two words can be apart from each other and still be considered a match. In prior versions you could indicate only which words should be searched; now you can control the sequence of the words. See “Full Text Search”.

The \gexec option in psql reads SQL statements from the output of a query and executes them. See “Dynamic SQL Execution”.

Updates, inserts, and deletes against foreign tables are all much faster for simple cases. See Depesz: Directly Modify Foreign Table for details.

Join pushdown is now supported by some FDWs. postgres_fdw supports this feature. When you join foreign tables, instead of retrieving the data from the foreign server and performing the join locally, the FDW will perform the join remotely if the foreign tables involved in the join are from the same foreign server, and then retrieve the result set. This could lower the number of rows that have to come over from the foreign server, dramatically improving performance when joins eliminate many rows.
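The phrase search operator from the 9.6 list above can be tried directly. This is a minimal sketch that assumes the stock english configuration; the sample sentence is made up.

```sql
-- 'quick' immediately followed by 'brown': expected to match.
SELECT to_tsvector('english', 'The quick brown fox')
       @@ to_tsquery('english', 'quick <-> brown') AS adjacent;

-- <2> asks for 'fox' two positions after 'quick': expected to match.
SELECT to_tsvector('english', 'The quick brown fox')
       @@ to_tsquery('english', 'quick <2> fox') AS two_apart;

-- Order matters: 'brown' followed by 'quick' is not in the text.
SELECT to_tsvector('english', 'The quick brown fox')
       @@ to_tsquery('english', 'brown <-> quick') AS reversed;
```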

Version 9.5 came out in January of 2016. Notable new features are as follows:

A new IMPORT FOREIGN SCHEMA command allows for bulk creation of foreign tables from a foreign server. Foreign table inheritance means that a local table can inherit from foreign tables; foreign tables can inherit from local tables; and foreign tables can inherit from other foreign tables. You can also add constraints to foreign tables. See “Foreign Data Wrappers” and “Querying Other PostgreSQL Servers”.

The downside of unlogged tables is that they get truncated during a crash. In prior versions, promoting an unlogged table to a logged table could not be done without creating a new table and repopulating the records. In 9.5, just use the ALTER TABLE ... SET LOGGED command.

The array_agg function accepts a set of values and combines them into a single array. Prior to 9.5, passing in arrays would throw an error. With 9.5, array_agg is smart enough to automatically construct multidimensional arrays for you. See Example 5-17.

BRIN is a new kind of index with a smaller footprint than B-Tree and GIN. Under some circumstances BRIN can outperform the former two. See “Indexes”.

GROUPING SETS, CUBE, and ROLLUP are used in conjunction with aggregate queries to return additional subtotal rows. See “GROUPING SETS, CUBE, ROLLUP” for examples.

Prior to 9.5, any inserts that conflicted with primary key or unique constraints would automatically fail. Now you have an opportunity to catch the conflict and offer an alternative course, such as updating the existing row, or to skip the records causing the conflict. See “UPSERTs: INSERT ON CONFLICT UPDATE”.

If you want to select and lock rows with the intent of updating the data, you can use SELECT ... FOR UPDATE. Prior to 9.5, if you were unable to obtain the lock, you had to wait for it or, with the NOWAIT option, receive an error. With 9.5, you can add the SKIP LOCKED option to bypass rows for which you’re unable to obtain locks.

You now have the ability to set visibility and updatability on rows of a table using policies. This is especially useful for multitenant databases or situations where security cannot be easily isolated by segmenting data into different tables.
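Three of the 9.5 features listed above are sketched here on one small table. The colors table and its contents are hypothetical.

```sql
-- Load into an unlogged table, then promote it once loading is done.
CREATE UNLOGGED TABLE colors (name text PRIMARY KEY, hex text);
INSERT INTO colors VALUES ('red', '#ff0000');
ALTER TABLE colors SET LOGGED;

-- Upsert: update the existing row when the key already exists.
INSERT INTO colors (name, hex)
VALUES ('red', '#f00'), ('blue', '#0000ff')
ON CONFLICT (name) DO UPDATE SET hex = EXCLUDED.hex;

-- Or silently skip the conflicting rows instead.
INSERT INTO colors (name, hex)
VALUES ('red', '#ffffff')
ON CONFLICT (name) DO NOTHING;

-- Lock one row for update, passing over rows that another
-- session has already locked instead of waiting for them.
BEGIN;
SELECT name, hex
FROM colors
ORDER BY name
LIMIT 1
FOR UPDATE SKIP LOCKED;
COMMIT;
```

The SKIP LOCKED pattern is commonly used for queue-like tables where several workers each grab the next free row.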

Version 9.4 came out in December 2014. Notable new features are as follows:

In 9.3, materialized views are inaccessible during a refresh, which could be a long time. This makes their deployment in production undesirable. 9.4 eliminated the lock, provided the materialized view has a unique index.

percentile_disc (percentile discrete) and percentile_cont (percentile continuous) were added. They must be used with the special WITHIN GROUP (ORDER BY ...) construct. PostgreSQL vanguard Hubert Lubaczewski described their use in Ordered Set Within Group Aggregates. If you’ve ever looked for an aggregate median function in PostgreSQL, you didn’t find it. Recall from your introduction to medians that the algorithm has an extra tie-breaker step at the end, making it difficult to program as an aggregate function. The new percentile functions approximate the true median with a “fast” median. We cover these two functions in more detail in “Percentiles and Mode”.

The WITH CHECK OPTION clause added to the CREATE VIEW statement will block an update or insert on the view if the resulting data would no longer be visible in the view. We demonstrate this feature in Example 7-3.

The JSONB (JavaScript Object Notation binary) type allows you to index a full JSON document and expedite retrieval of subelements. For details, see “JSON” and check out these blog posts: Introduce jsonb: A Structured Format for Storing JSON and JSONB: Wildcard Query.

GIN was designed with FTS, trigrams, hstores, and JSONB in mind. Under many circumstances, you may choose GIN with its smaller footprint over B-Tree without loss in performance. Version 9.5 improved its query speed. Check out GIN as a Substitute for Bitmap Indexes.

New JSON functions are json_build_array, json_build_object, json_object, json_to_record, and json_to_recordset.

You can now move all tables from one tablespace to another by using the syntax ALTER TABLE ALL IN TABLESPACE old_space SET TABLESPACE new_space;. Analogous forms exist for ALTER INDEX and ALTER MATERIALIZED VIEW.

You can add a row number for set-returning functions with the system column ordinality. This is particularly handy when converting denormalized data stored in arrays, hstores, and composite types to records. Here is an example using hstore:

SELECT ordinality, key, value FROM each('breed=>pug,cuteness=>high'::hstore) WITH ORDINALITY;

The ALTER SYSTEM SET ... construct allows you to set global system settings without editing the postgresql.conf, as detailed in “The postgresql.conf File”. This also means you can now programmatically change system settings, but keep in mind that PostgreSQL may require a restart for new settings to take effect.

The unnest function predictably allocates arrays of different sizes into columns. Prior to 9.4, unnesting arrays of different sizes resulted in shuffling of columns in unexpected ways.

The ROWS FROM construct allows the use of multiple set-returning functions in a series, even if they have an unbalanced number of elements in each set:

SELECT * FROM ROWS FROM (jsonb_each('{"a":"foo1","b":"bar"}'::jsonb), jsonb_each('{"c":"foo2"}'::jsonb)) x (a1, a1_val, a2, a2_val);

You can code background workers in C to do work that is not available through SQL or functions. A trivial example is available in the 9.4 source code in the contrib/worker_spi directory.
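Two of the 9.4 features listed above lend themselves to a short sketch: the ordered-set aggregates and the lock-free refresh of a materialized view. The sales table and view names are hypothetical.

```sql
-- Median of 1, 2, 3, 4: percentile_cont interpolates (2.5),
-- percentile_disc returns an actual value from the set (2).
SELECT percentile_cont(0.5) WITHIN GROUP (ORDER BY x) AS median_cont,
       percentile_disc(0.5) WITHIN GROUP (ORDER BY x) AS median_disc
FROM generate_series(1, 4) AS x;

-- Materialized view that stays readable while it is refreshed.
CREATE TABLE sales (sale_id integer PRIMARY KEY, region text, amount numeric);

CREATE MATERIALIZED VIEW sales_by_region AS
SELECT region, sum(amount) AS total
FROM sales
GROUP BY region;

-- A unique index is the precondition for a concurrent refresh.
CREATE UNIQUE INDEX ON sales_by_region (region);

REFRESH MATERIALIZED VIEW CONCURRENTLY sales_by_region;
```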

Chances are that you’re not using PostgreSQL in a vacuum. You need a database driver to interact with applications and other databases. PostgreSQL works with free drivers for many programming languages and tools. Moreover, various commercial organizations provide drivers with extra bells and whistles at modest prices. Here are some of the notable open source drivers:

PHP is a popular language for web development, and most PHP distributions include at least one PostgreSQL driver: the old pgsql driver or the newer pdo_pgsql. You may need to enable them in your php.ini.

For Java developers, the JDBC driver keeps up with the latest PostgreSQL versions. Download it from PostgreSQL.

For .NET (both Microsoft and Mono), you can use the Npgsql driver. Both the source code and the binary are available for .NET Framework, Microsoft Entity Framework, and Mono.NET.

If you need to connect from Microsoft Access, Excel, or any other products that support Open Database Connectivity (ODBC), download drivers from the PostgreSQL ODBC drivers site. You’ll have your choice of 32-bit or 64-bit.

LibreOffice 3.5 and later comes packaged with a native PostgreSQL driver. For OpenOffice and older versions of LibreOffice, you can use the JDBC driver or the SDBC driver. Learn more details from our article OO Base and PostgreSQL.

Python has support for PostgreSQL via many database drivers. At the moment, psycopg2 is the most popular. Rich support for PostgreSQL is also available in the Django web framework. If you are looking for an object-relational mapper, SQLAlchemy is the most popular and is used internally by the Multicorn Foreign Data Wrapper.

If you use Ruby, connect to PostgreSQL using the pg gem from RubyGems.

You’ll find Perl’s connectivity to PostgreSQL in the DBI and the DBD::Pg drivers. Alternatively, there’s the pure Perl DBD::PgPP driver from CPAN.

Node.js is a JavaScript framework for running scalable network programs. There are currently two PostgreSQL drivers: Node Postgres, which is pure JavaScript (no compilation required) with optional native libpq bindings, and Node-DBI.

There will come a day when you need help. That day always arrives early; we want to point you to some resources now rather than later. Our favorite is the lively mailing list designed for helping new and old users with technical issues. First, visit PostgreSQL Help Mailing Lists. If you are new to PostgreSQL, the best list to start with is the PGSQL General Mailing List. If you run into what appears to be a bug in PostgreSQL, report it at PostgreSQL Bug Reporting.

The MIT/BSD-style licensing of PostgreSQL makes it a great candidate for forking. Various groups have done exactly that over the years. Some have contributed their changes back to the original project or funded PostgreSQL work. For a list of forks, refer to PostgreSQL-derived databases.

Many popular forks are proprietary and closed source. Netezza, a popular database choice for data warehousing, was a PostgreSQL fork at inception. Similarly, the Amazon Redshift data warehouse is a fork of a fork of PostgreSQL. Amazon has two other offerings that are closer to standard PostgreSQL: Amazon RDS for PostgreSQL and Amazon Aurora for PostgreSQL. These stay in line with PostgreSQL versions in SQL syntax but with more management and speed features.

Postgres Plus Advanced Server by EnterpriseDB is a fork that adds Oracle syntax and compatibility features to woo Oracle users. EnterpriseDB ploughs funding and development support back to the PostgreSQL community. For this, we’re grateful. Its Postgres Plus Advanced Server is fairly close to the most recent stable version of PostgreSQL.

Postgres-X2, Postgres-XL, and Greenplum are three budding forks with open source licensing (although Greenplum was closed source for a period). These three target large-scale data analytics and replication.

Part of the reason for forking is to advance ahead of the PostgreSQL release cycle and try out new features that may or may not be of general interest. Many of the new features developed this way do find their way back into a later PostgreSQL core release. Such is the case with the multi-master bi-directional replication (BDR) fork developed by 2ndQuadrant. Pieces of BDR, such as the logical replication support, are beefing up the built-in replication functionality in PostgreSQL proper. Some of the parallelization work of Postgres-XL will also likely make it into future versions of PostgreSQL.

Citus is a project that started as a fork of PostgreSQL to support real-time big data and parallel queries. It has since been incorporated back and can be installed in PostgreSQL 9.5 as an extension.

Google Cloud SQL for PostgreSQL is a fairly recent addition by Google and is currently in beta.

This chapter covers what we consider basic administration of a PostgreSQL server: managing roles and permissions, creating databases, installing extensions, and backing up and restoring data. Before continuing, you should have already installed PostgreSQL and have administration tools at your disposal.

Three main configuration files control operations of a PostgreSQL server:

postgresql.conf controls general settings, such as memory allocation, default storage location for new databases, the IP addresses that PostgreSQL listens on, location of logs, and plenty more.

pg_hba.conf controls access to the server, dictating which users can log in to which databases, which IP addresses can connect, and which authentication scheme to accept.

pg_ident.conf, if present, maps an authenticated OS login to a PostgreSQL user. People sometimes map the OS root account to the PostgreSQL superuser account, postgres.

PostgreSQL officially refers to users as roles. Not all roles need to have login privileges. For example, group roles often do not. We use the term user to refer to a role with login privileges.

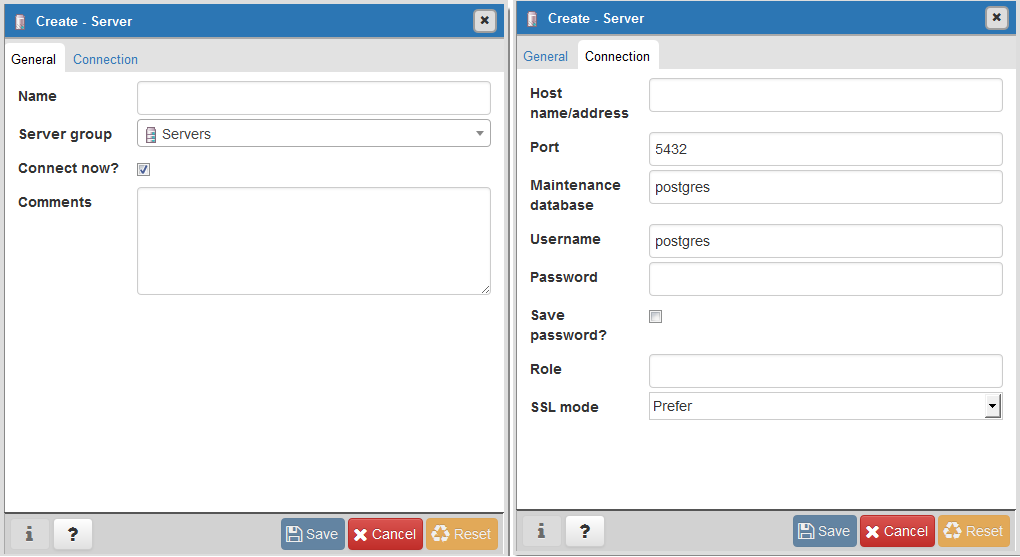

If you accepted default installation options, you will find these configuration files in the main PostgreSQL data folder. You can edit them using any text editor or the Admin Pack in pgAdmin. Instructions for editing with pgAdmin are in “Editing postgresql.conf and pg_hba.conf from pgAdmin3”. If you are unable to find the physical location of these files, run the Example 2-1 query as a superuser while connected to any database.

SELECT name, setting FROM pg_settings WHERE category = 'File Locations';

| name | setting |
|---|---|
| config_file | /etc/postgresql/9.6/main/postgresql.conf |
| data_directory | /var/lib/postgresql/9.6/main |
| external_pid_file | /var/run/postgresql/9.6-main.pid |
| hba_file | /etc/postgresql/9.6/main/pg_hba.conf |
| ident_file | /etc/postgresql/9.6/main/pg_ident.conf |

(5 rows)

Some configuration changes require a PostgreSQL service restart, which closes any active connections from clients. Other changes require just a reload. New users connecting after a reload will receive the new setting. Extant users with active connections will not be affected during a reload. If you’re not sure whether a configuration change requires a reload or restart, look under the context setting associated with a configuration. If the context is postmaster, you’ll need a restart. If the context is user, a reload will suffice.

A reload can be done in several ways. One way is to open a console window and run this command:

pg_ctl reload -D your_data_directory_here

If you have PostgreSQL installed as a service in Red Hat Enterprise Linux, CentOS, or Ubuntu, enter instead:

service postgresql-9.5 reload

postgresql-9.5 is the name of your service. (For older versions of PostgreSQL, the service is sometimes called postgresql sans version number.)

You can also log in as a superuser to any database and execute the following SQL:

SELECT pg_reload_conf();

Finally, you can reload from pgAdmin; see “Editing postgresql.conf and pg_hba.conf from pgAdmin3”.

More fundamental configuration changes require a restart. You can perform a restart by stopping and restarting the postgres service (daemon). Yes, power cycling will do the trick as well.

You can’t restart with a PostgreSQL command, but you can trigger a restart from the operating system shell. On Linux/Unix with a service, enter:

service postgresql-9.6 restart

For any PostgreSQL instance not installed as a service:

pg_ctl restart -D your_data_directory_here

On Windows you can also just click Restart on the PostgreSQL service in the Services Manager.

postgresql.conf controls the life-sustaining settings of the PostgreSQL server. You can override many settings at the database, role, session, and even function levels. You’ll find many details on how to fine-tune your server by tweaking settings in the article Tuning Your PostgreSQL Server.

Version 9.4 introduced an important change: instead of editing postgresql.conf directly, you should override settings using an additional file called postgresql.auto.conf. We further recommend that you don’t touch the postgresql.conf and place any custom settings in postgresql.auto.conf.

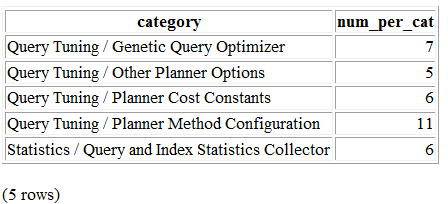

An easy way to read the current settings without opening the configuration files is to query the view named pg_settings. We demonstrate in Example 2-2.

SELECT name, context, unit, setting, boot_val, reset_val FROM pg_settings WHERE name IN ('listen_addresses', 'deadlock_timeout', 'shared_buffers', 'effective_cache_size', 'work_mem', 'maintenance_work_mem') ORDER BY context, name;

| name | context | unit | setting | boot_val | reset_val |
|---|---|---|---|---|---|
| listen_addresses | postmaster | | * | localhost | * |
| shared_buffers | postmaster | 8kB | 131584 | 1024 | 131584 |
| deadlock_timeout | superuser | ms | 1000 | 1000 | 1000 |
| effective_cache_size | user | 8kB | 16384 | 16384 | 16384 |
| maintenance_work_mem | user | kB | 16384 | 16384 | 16384 |
| work_mem | user | kB | 5120 | 1024 | 5120 |

The context is the scope of the setting. Some settings have a wider effect than others, depending on their context.

User settings can be changed by each user to affect just that user’s sessions. If set by the superuser, the setting becomes a default for all users who connect after a reload.

Superuser settings can be changed only by a superuser, and will apply to all users who connect after a reload. Users cannot individually override the setting.

Postmaster settings affect the entire server (postmaster represents the PostgreSQL service) and take effect only after a restart.

Settings with user or superuser context can be set for a specific database, user, session, and function level. For example, you might want to set work_mem higher for an SQL guru-level user who writes mind-boggling queries. Similarly, if you have one function that is sort-intensive, you could raise work_mem just for it. Settings set at database, user, session, and function levels do not require a reload. Settings set at the database level take effect on the next connect to the database. Settings set for the session or function take effect right away.
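The different levels at which a setting such as work_mem can be overridden might look like the following sketch. The database mydb, the role guru, and the function sort_heavy_report are hypothetical names; the memory values are arbitrary.

```sql
-- Database level: applies to new connections to mydb.
ALTER DATABASE mydb SET work_mem = '32MB';

-- Role level: applies to new sessions opened by guru.
ALTER ROLE guru SET work_mem = '128MB';

-- Role within one particular database.
ALTER ROLE guru IN DATABASE mydb SET work_mem = '256MB';

-- Session level: takes effect right away for the current session.
SET work_mem = '64MB';

-- Function level: in force only while the function runs.
ALTER FUNCTION sort_heavy_report() SET work_mem = '256MB';

-- Return the session to its default.
RESET work_mem;
```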

Be careful checking the units of measurement used for memory. As you can see in Example 2-2, some are reported in 8-KB blocks and some just in kilobytes. Regardless of how a setting displays, you can use any unit of choice when setting; 128 MB is a versatile choice for most memory settings.

Showing units as 8 KB is annoying at best and is destabilizing at worst. The SHOW command in SQL displays settings in labeled and more intuitive units. For example, running:

SHOW shared_buffers;

returns 1028MB. Similarly, running:

SHOW deadlock_timeout;

returns 1s. If you want to see the units for all settings, enter SHOW ALL.
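If you prefer to stay within pg_settings, the block counts can be converted by hand. This sketch assumes the default block size of 8192 bytes, which matches the 8kB unit shown in the view.

```sql
-- Convert settings reported in 8kB blocks into readable sizes.
-- With the sample value 131584, this works out to 1028 MB.
SELECT name,
       setting,
       unit,
       pg_size_pretty(setting::bigint * 8192) AS readable
FROM pg_settings
WHERE unit = '8kB'
  AND name IN ('shared_buffers', 'effective_cache_size');

-- current_setting returns the same labeled value that SHOW does.
SELECT current_setting('shared_buffers');
```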

setting is the current setting; boot_val is the default setting; reset_val is the new setting if you were to restart or reload the server. Make sure that setting and reset_val match after you make a change. If not, the server needs a restart or reload.

New in version 9.5 is a system view called pg_file_settings, which you can use to query settings. Its output lists the source file where the settings can be found. The applied column tells you whether the setting is in effect; if the setting has an f in that column, you need to reload or restart to make it take effect. In cases where a particular setting is present in both postgresql.conf and postgresql.auto.conf, the postgresql.auto.conf one will take precedence and you’ll see the entry from the other file with applied set to false (f). The applied column is shown in Example 2-3.

SELECT name, sourcefile, sourceline, setting, applied FROM pg_file_settings WHERE name IN ('listen_addresses', 'deadlock_timeout', 'shared_buffers', 'effective_cache_size', 'work_mem', 'maintenance_work_mem') ORDER BY name;

| name | sourcefile | sourceline | setting | applied |
|---|---|---|---|---|
| effective_cache_size | E:/data96/postgresql.auto.conf | 11 | 8GB | t |
| listen_addresses | E:/data96/postgresql.conf | 59 | * | t |
| maintenance_work_mem | E:/data96/postgresql.auto.conf | 3 | 16MB | t |
| shared_buffers | E:/data96/postgresql.conf | 115 | 128MB | f |
| shared_buffers | E:/data96/postgresql.auto.conf | 5 | 131584 | t |

Pay special attention to the following network settings in postgresql.conf or postgresql.auto.conf, because an incorrect entry here will prevent clients from connecting. Changing their values requires a service restart:

listen_addresses informs PostgreSQL which IP addresses to listen on. This usually defaults to local (meaning a socket on the local system), or localhost, meaning the IPv6 or IPv4 localhost IP address. But many people change the setting to *, meaning all available IP addresses.

port defaults to 5432. You may wish to change this well-known port to something else for security or if you are running multiple PostgreSQL services on the same server.

log_destination is somewhat a misnomer. It specifies the format of the logfiles rather than their physical location. The default is stderr. If you intend to perform extensive analysis on your logs, we suggest changing it to csvlog, which is easier to export to third-party analytic tools. Make sure you have logging_collector set to on if you want logging.

The following settings affect performance. Defaults are rarely the optimal value for your installation. As soon as you gain enough confidence to tweak configuration settings, you should tune these values:

shared_buffers is the amount of memory shared among all connections to store recently accessed pages. This setting profoundly affects the speed of your queries. You want this setting to be fairly high, probably as much as 25% of your RAM. However, you’ll generally see diminishing returns after more than 8 GB. Changes require a restart.

effective_cache_size is an estimate of how much memory PostgreSQL expects the operating system to devote to it. This setting has no effect on actual allocation, but the query planner figures in this setting to guess whether intermediate steps and query output would fit in RAM. If you set this much lower than available RAM, the planner may forgo using indexes. With a dedicated server, setting the value to half of your RAM is a good starting point. Changes require a reload.

work_mem controls the maximum amount of memory allocated for each operation such as sorting, hash join, and table scans. The optimal setting depends on how you’re using the database, how much memory you have to spare, and whether your server is dedicated to PostgreSQL. If you have many users running simple queries, you want this setting to be relatively low to be democratic; otherwise, the first user may hog all the memory. How high you set this also depends on how much RAM you have to begin with. A good article to read for guidance is Understanding work_mem. Changes require a reload.

maintenance_work_mem is the total memory allocated for housekeeping activities such as vacuuming (pruning records marked for deletion). You shouldn’t set it higher than about 1 GB. Reload after changes.

max_parallel_workers_per_gather is a new setting introduced in 9.6 for parallelism. The setting determines the maximum number of parallel workers that can be spawned for each gather operation. The default setting is 0, which means parallelism is completely turned off. If you have more than one CPU core, you will want to elevate this. Parallel processing is new in version 9.6, so you may have to experiment with this setting to find what works best for your server. Also note that the number you have here should be less than max_worker_processes, which defaults to 8, because the parallel background worker processes are a subset of the maximum allowed processes.

In version 10, there is an additional setting called max_parallel_workers, which controls the subset of max_worker_processes allocated for parallelization.
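One way to experiment with the parallel settings described above is at the session level, before committing anything to the configuration files. In this sketch big_table is a hypothetical large table, and the value 2 is an arbitrary starting point.

```sql
-- Inspect the current limits.
SHOW max_worker_processes;
SHOW max_parallel_workers_per_gather;

-- Allow up to two workers per gather for this session only.
SET max_parallel_workers_per_gather = 2;

-- If the planner chooses a parallel plan, a Gather node
-- appears in the output.
EXPLAIN SELECT count(*) FROM big_table;

-- Return to the server default.
RESET max_parallel_workers_per_gather;
```

Whether the planner actually parallelizes depends on table size and cost settings, so a small table may still produce a serial plan.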

PostgreSQL 9.4 introduced the ability to change settings using the ALTER SYSTEM SQL command. For example, to set the work_mem globally, enter the following:

ALTER SYSTEM SET work_mem = '500MB';

This command is wise enough to not directly edit postgresql.conf but will make the change in postgresql.auto.conf.

Depending on the particular setting changed, you may need to restart the service. If you just need to reload it, here’s a convenient command:

SELECT pg_reload_conf();
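Putting the two commands together, a full round trip might look like the sketch below. The 64MB value is only a placeholder, and the pending_restart column assumes version 9.5 or later. Note that ALTER SYSTEM cannot be run inside a transaction block.

```sql
-- Write the new value to postgresql.auto.conf (placeholder value).
ALTER SYSTEM SET work_mem = '64MB';

-- Ask the server to reread its configuration files.
SELECT pg_reload_conf();

-- Confirm the value now in effect for this session.
SHOW work_mem;

-- List any settings that were changed but are still waiting for a restart.
SELECT name, setting
FROM pg_settings
WHERE pending_restart;

-- Undo the override by removing the entry from postgresql.auto.conf.
ALTER SYSTEM RESET work_mem;
SELECT pg_reload_conf();
```

An empty result from the pending_restart query suggests a reload was enough for everything you changed.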

If you have to track many settings, consider organizing them into multiple configuration files and then linking them back using the include or include_if_exists directive within the postgresql.conf. The exact syntax is as follows:

include 'filename'

The filename argument can be an absolute path or a relative path from the postgresql.conf file.
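Once settings are spread across several files, it is easy to lose track of which file a value actually came from. As a rough sketch, the pg_settings view can report the file and line behind each setting, and the pg_file_settings view (assumed here to be available, which means version 9.5 or later) can flag entries that would fail on the next reload. Both typically require superuser rights to show file details.

```sql
-- Which configuration file and line supplied each explicitly set value?
SELECT name, setting, sourcefile, sourceline
FROM pg_settings
WHERE sourcefile IS NOT NULL
ORDER BY sourcefile, sourceline;

-- Check the files as they are on disk right now for entries with problems,
-- before you reload or restart.
SELECT sourcefile, sourceline, name, setting, error
FROM pg_file_settings
WHERE error IS NOT NULL;
```

Running the second query after each edit and before each reload is a cheap way to catch typos early.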

If the server refuses to start after you edit postgresql.conf, the easiest way to figure out what you screwed up is to look at the logfile, located at the root of the data folder or in the pg_log subfolder. Open the latest file and read what the last line says. The error raised is usually self-explanatory.

A common culprit is setting shared_buffers too high. Another suspect is an old postmaster.pid left over from a failed shutdown. You can safely delete this file, located in the data cluster folder, and try restarting again.

The pg_hba.conf file controls which IP addresses and users can connect to the database. Furthermore, it dictates the authentication protocol that the client must follow. Changes to the file require at least a reload to take effect. A typical pg_hba.conf looks like Example 2-4.

# TYPE   DATABASE  USER  ADDRESS          METHOD
host     all       all   127.0.0.1/32     ident
host     all       all   ::1/128          trust
host     all       all   192.168.54.0/24  md5
hostssl  all       all   0.0.0.0/0        md5

# TYPE DATABASE USER ADDRESS METHOD
# Allow replication connections from localhost,
# by a user with replication privilege.
#host replication postgres 127.0.0.1/32 trust
#host replication postgres ::1/128 trust

Authentication method. The usual choices are ident, trust, md5, peer, and password.

IPv6 syntax for defining network range. This applies only to servers with IPv6 support and may prevent pg_hba.conf from loading if you add this section without actually having IPv6 networking enabled on the server.

IPv4 syntax for defining network range. The first part is the network address followed by the bit mask; for instance: 192.168.54.0/24. PostgreSQL will accept connection requests from any IP address within the range.

SSL connection rule. In our example, we allow anyone to connect to our server outside of the allowed IP range as long as they can connect using SSL.

SSL configuration settings can be found in postgresql.conf or postgresql.auto.conf: ssl, ssl_cert_file, ssl_key_file. Once the server confirms that the client is able to support SSL, it will honor the connection request and all transmissions will be encrypted using the key information.

Range of IP addresses allowed to replicate with this server.
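To confirm that SSL is switched on and to see which current connections are actually encrypted, a query along these lines may help. It is a sketch that assumes the pg_stat_ssl view is available (version 9.5 or later).

```sql
-- Is SSL enabled on this server?
SHOW ssl;

-- For each current connection, show whether it is using SSL and,
-- if so, the protocol version and cipher negotiated.
SELECT a.pid, a.usename, a.client_addr, s.ssl, s.version, s.cipher
FROM pg_stat_activity AS a
JOIN pg_stat_ssl AS s ON s.pid = a.pid
ORDER BY a.pid;
```

Connections that matched a plain host rule without negotiating SSL show ssl as false.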

For each connection request, pg_hba.conf is checked from the top down. As soon as a rule granting access is encountered, a connection is allowed and the server reads no further in the file. As soon as a rule rejecting access is encountered, the connection is denied and the server reads no further in the file. If the end of the file is reached without any matching rules, the connection is denied. A common mistake people make is to put the rules in the wrong order. For example, if you added 0.0.0.0/0 reject before 127.0.0.1/32 trust, local users won’t be able to connect, even though a rule is in place allowing them to.

New in version 10 is the pg_hba_file_rules system view that lists all the contents of the pg_hba.conf file.
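Because rule order matters, it can be handy to list the rules in the order the server will evaluate them. The sketch below assumes version 10 or later and a role allowed to read the view (by default, a superuser). The view reads the file as it is on disk, so it can be used to check an edit before you reload.

```sql
-- List the rules in file order, which is the order they are checked.
SELECT line_number, type, database, user_name, address, auth_method
FROM pg_hba_file_rules
ORDER BY line_number;

-- Show only the lines the server could not parse, with the reason.
SELECT line_number, error
FROM pg_hba_file_rules
WHERE error IS NOT NULL;
```

If the second query returns no rows, the file should at least parse cleanly on the next reload.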

If an edit to pg_hba.conf leaves your server broken, don’t worry. This happens quite often, but is easy to recover from. This error is generally caused by typos or by adding an unavailable authentication scheme. When the postgres service can’t parse pg_hba.conf, it blocks all access just to be safe. Sometimes, it won’t even start up. The easiest way to figure out what you did wrong is to read the logfile located in the root of the data folder or in the pg_log subfolder. Open the latest file and read the last line. The error message is usually self-explanatory. If you’re prone to slippery fingers, back up the file prior to editing.

PostgreSQL gives you many choices for authenticating users, probably more than any other database product. Most people are content with the popular ones: trust, peer, ident, md5, and password. And don’t forget about reject, which immediately denies access. Also keep in mind that pg_hba.conf is only the gatekeeper to the PostgreSQL server as a whole: users or devices must still satisfy role and database access restrictions after being admitted by pg_hba.conf.

We describe the common authentication methods here:

trust

This is the least secure authentication; essentially, no password is needed. As long as the user and database exist in the system and the request comes from an IP within the allowed range, the user can connect. You should implement trust only for local connections or private network connections. Even then it’s possible for someone to spoof IP addresses, so the more security-minded among us discourage its use entirely. Nevertheless, it’s the most common for PostgreSQL installed on a desktop for single-user local access where security is not a concern.

ident

Uses pg_ident.conf to check whether the OS account of the user trying to connect has a mapping to a PostgreSQL account. The password is not checked. ident is not available on Windows.

peer

Uses the OS name of the user from the kernel. It is available only for Linux, BSD, macOS, and Solaris, and only for local connections on these systems.

cert

Stipulates that connections use SSL. The client must have a registered certificate. cert uses an ident file such as pg_ident.conf to map the certificate to a PostgreSQL user and is available on all platforms where SSL connection is enabled.

More esoteric options abound, such as gss, radius, ldap, and pam. Some may not always be installed by default.

You can elect more than one authentication method, even for the same database. Keep in mind that pg_hba.conf is processed from top to bottom.

More often than not, someone else (never you, of course) will execute an inefficient query that ends up hogging resources. They could also run a query that’s taking much longer than what they have patience for. Cancelling the query, terminating the connection, or both will put an end to the offending query.

Cancelling and terminating are far from graceful and should be used sparingly. Your client application should prevent queries from going haywire in the first place. Out of politeness, you probably should alert the connected role that you’re about to terminate its connection, or wait until after hours to do the dirty deed.

There are few scenarios where you should cancel all active update queries: before backing up the database and before restoring the database.

To cancel running queries and terminate connections, follow these steps:

1. Retrieve a listing of recent connections and process IDs (PIDs):

SELECT * FROM pg_stat_activity;

pg_stat_activity is a view that lists the last query running on each connection, the connected user (usename), the database (datname) in use, and the start times of the queries. Review the list to identify the PIDs of connections you wish to terminate.

2. Cancel active queries on a connection with PID 1234:

SELECT pg_cancel_backend(1234);

This does not terminate the connection itself, though.

3. Terminate the connection:

SELECT pg_terminate_backend(1234);

You may need to take the additional step of terminating the client connection. This is especially important prior to a database restore. If you don’t terminate the connection, the client may immediately reconnect after restore and run the offending query anew. If you did not already cancel the queries on the connection, terminating the connection will cancel all of its queries.
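On a busy server, SELECT * returns more than you want to read. The steps above can be narrowed down with something like the following sketch, which assumes version 9.2 or later (for the pid and state columns). The five-minute threshold is an arbitrary placeholder.

```sql
-- Step 1, trimmed down: sessions that are doing something, longest-running first.
-- pg_backend_pid() is excluded so you do not list (or later kill) yourself.
SELECT pid, usename, datname, state,
       now() - query_start AS running_for,
       query
FROM pg_stat_activity
WHERE state <> 'idle'
  AND pid <> pg_backend_pid()
ORDER BY query_start;

-- Step 2, applied in bulk: cancel every query that has been active
-- for more than five minutes (placeholder threshold).
SELECT pid, pg_cancel_backend(pid) AS cancelled
FROM pg_stat_activity
WHERE state = 'active'
  AND now() - query_start > interval '5 minutes'
  AND pid <> pg_backend_pid();
```

Run the first query on its own and review the output before running the second; the cancel is not something you can take back.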

PostgreSQL lets you embed functions within a regular SELECT statement. Even though pg_terminate_backend and pg_cancel_backend act on only one connection at a time, you can kill multiple connections by wrapping them in a SELECT. For example, let’s suppose you want to kill all connections belonging to a role with a single blow. Run this SQL command:

SELECT pg_terminate_backend(pid) FROM pg_stat_activity WHERE usename = 'some_role';

You can set certain operational parameters at the server, database, user, session, or function level. Any queries that exceed the parameter will automatically be cancelled by the server. Setting a parameter to 0 disables the parameter:

deadlock_timeout

This is the amount of time a query waits on a lock before the server checks whether it is caught in a deadlock; if it is, one of the queries involved is cancelled. This defaults to 1000 ms. If your application performs a lot of updates, you may want to increase this value to minimize contention.

Instead of relying on this setting, you can include a NOWAIT clause in your update SQL: SELECT ... FOR UPDATE NOWAIT. The query will be automatically cancelled if it cannot obtain the lock immediately, rather than waiting for it.

Starting with PostgreSQL 9.5, you have another choice: SELECT ... FOR UPDATE SKIP LOCKED will skip over locked rows.

statement_timeout

This is the amount of time a query can run before it is forced to cancel. This defaults to 0, meaning no time limit. If you have long-running functions that you want cancelled if they exceed a certain time, set this value in the definition of the function rather than globally. Cancelling a function cancels the query and the transaction that’s calling it.

lock_timeout

This is the amount of time a query should wait for a lock before giving up, and is most applicable to update queries. Before data updates, the query must obtain an exclusive lock on affected records. The default is 0, meaning that the query will wait infinitely. This setting is generally used at the function or session level. lock_timeout should be lower than statement_timeout; otherwise statement_timeout will always occur first, making lock_timeout irrelevant.

idle_in_transaction_session_timeout

This is the amount of time a transaction can stay in an idle state before it is terminated. This defaults to 0, meaning it can stay alive infinitely. This setting is new in PostgreSQL 9.6. It’s useful for preventing queries from holding on to locks on data indefinitely or eating up a connection.
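To make the different levels concrete, here is a minimal sketch of setting these timeouts at the database, role, session, and function level. Every object name in it (mydb, some_role, update_inventory, orders) is a hypothetical placeholder, and the durations are arbitrary.

```sql
-- Hypothetical names throughout: mydb, some_role, update_inventory(integer),
-- and the orders table stand in for your own objects.

-- Database level: picked up by new sessions that connect to mydb.
ALTER DATABASE mydb SET statement_timeout = '5min';

-- Role level: picked up by new sessions of some_role (9.6 or later).
ALTER ROLE some_role SET idle_in_transaction_session_timeout = '10min';

-- Session level: affects the current connection only.
SET lock_timeout = '10s';

-- Function level: in effect only while the function is running.
ALTER FUNCTION update_inventory(integer) SET lock_timeout = '2s';

-- Per-query alternative to waiting at all: fail at once if the row is locked.
BEGIN;
SELECT * FROM orders WHERE order_id = 42 FOR UPDATE NOWAIT;
ROLLBACK;
```

Database and role settings do not touch sessions that are already connected; those keep their old values until they reconnect.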

The pg_stat_activity view has changed considerably since version 9.1 with the renaming, dropping, and addition of new columns. Starting from version 9.2, procpid was renamed to pid.

pg_stat_activity changed in PostgreSQL 9.6 to provide more detail about waiting queries. In prior versions of PostgreSQL, there was a field called waiting that could take the value true or false. true denoted a query that was being blocked waiting for some resource, but the resource being waited for was never stated. In PostgreSQL 9.6, waiting was removed and replaced with wait_event_type and wait_event to provide more information about what resource a query was waiting for. Therefore, prior to PostgreSQL 9.6, use waiting = true to determine what queries are being blocked. In PostgreSQL 9.6 or higher, use wait_event IS NOT NULL.

In addition to the change in structure, PostgreSQL 9.6 will now track additional wait locks that did not get set to waiting=true in prior versions. As a result, you may find lighter lock waits being listed for queries than you saw in prior versions. For a list of different wait_event types, refer to PostgreSQL Manual: wait_event names and types.
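As a sketch of how the newer columns can be used, the following query lists sessions that are waiting on a lock and, using pg_blocking_pids, the PIDs holding them up. It assumes version 9.6 or later. The filter on wait_event_type = 'Lock' is our own narrowing, chosen because wait_event IS NOT NULL also picks up lighter-weight waits.

```sql
-- Sessions blocked on a heavyweight lock, and who is blocking them.
SELECT pid,
       usename,
       wait_event_type,
       wait_event,
       pg_blocking_pids(pid) AS blocked_by,  -- array of blocking PIDs
       query
FROM pg_stat_activity
WHERE wait_event_type = 'Lock'
ORDER BY query_start;
```

The PIDs in blocked_by are the ones to look at first if you are deciding which query to cancel.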

PostgreSQL handles credentialing using roles. Roles that can log in are called login roles. Roles can also be members of other roles; the roles that contain other roles are called group roles. (And yes, group roles can be members of other group roles and so on, but don’t go there unless you have a knack for hierarchical thinking.) Group roles that can log in are called group login roles. However, for security, group roles generally cannot log in. A role can be designated as a superuser. These roles have unfettered access to the PostgreSQL service and should be assigned with discretion.

Recent versions of PostgreSQL no longer use the terms users and groups. You will still run into these terms; just know that they mean login roles and group roles, respectively. For backward compatibility, CREATE USER and CREATE GROUP still work in current versions, but shun them and use CREATE ROLE instead.

When you initialize the data cluster during setup, PostgreSQL creates a single login role with the name postgres. (PostgreSQL also creates a namesake database called postgres.) You can bypass the password setting by mapping an OS root user to the new role and using ident, peer, or trust for authentication. After you’ve installed PostgreSQL, before you do anything else, you should log in as postgres and create other roles. pgAdmin has a graphical section for creating user roles, but if you want to create one using SQL, execute an SQL command like the one shown in Example 2-5.

CREATE ROLE leo LOGIN PASSWORD 'king' VALID UNTIL 'infinity' CREATEDB;

Specifying VALID UNTIL is optional. If omitted, the role remains active indefinitely. CREATEDB grants database creation privilege to the new role.

To create a user with superuser privileges, follow Example 2-6. Naturally, you must be a superuser to create other superusers.

CREATE ROLE regina LOGIN PASSWORD 'queen' VALID UNTIL '2020-1-1 00:00' SUPERUSER;

Both of the previous examples create roles that can log in. To create roles that cannot log in, omit the LOGIN PASSWORD clause.
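After creating roles, you may want to confirm what each one can do. A simple sketch using the pg_roles system view:

```sql
-- List roles with their login, superuser, and database-creation rights,
-- plus the expiry set by VALID UNTIL (NULL means no expiry was set).
SELECT rolname, rolcanlogin, rolsuper, rolcreatedb, rolvaliduntil
FROM pg_roles
ORDER BY rolname;
```

Roles created without LOGIN show rolcanlogin as false.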

Group roles generally cannot log in. Rather, they serve as containers for other roles. This is merely a best-practice suggestion. Nothing stops you from creating a role that can log in as well as contain other roles.

Create a group role using the following SQL:

CREATE ROLE royalty INHERIT;